AI Salaries Are Climbing Fast: Here’s What the Job Market Data Actually Says

Most salary reports you find online are already a year old by the time they hit your inbox. They are built on surveys, self-reported numbers, and sample sizes that would make any analyst nervous. Meanwhile, the AI talent market has been moving fast enough that compensation benchmarks from 18 months ago can be off by $30,000 or more for the same role.

This report is different. The salary benchmarks here are pulled from live job postings, over 100 million of them, tracked in real time through JobsPikr’s data pipeline. That means what you are reading reflects what companies are actively offering right now, not what a few hundred HR managers remembered typing into a survey last spring.

Here is what this report covers: how AI engineer salaries have shifted from 2023 to 2025, what the gap looks like across cities and countries, where the biggest pay variances exist for the same role, and what all of this means for your compensation strategy heading into 2026. Whether you are benchmarking offers, planning headcount, or trying to stay competitive in a tight AI talent market, the numbers in here give you a real starting point, not a recycled one.

Why Most AI Salary Benchmarks Are Already Out of Date

Here is a situation most comp teams have been in at some point. You are putting together an offer for an AI engineer, you pull up the salary benchmark report your team uses, and the methodology footnote quietly tells you the data was collected sometime in the middle of last year. By the time that report was commissioned, written, reviewed, published, and downloaded, the AI talent market had already moved.

This is the core problem with how most organizations do salary benchmarking for AI roles. The dominant sources (annual surveys, self-reported platforms, and third-party compensation studies) have a structural lag built into them. They capture a moment in time, not a living market. And in AI hiring, a 12- to 18-month lag is not a minor inconvenience. It is the difference between making competitive offers and losing candidates in the final round without understanding why.

The numbers back this up. AI job postings skyrocketed 61% globally in 2024 compared to roughly 1.4% for all jobs overall. When demand moves that fast, last year’s salary benchmarks become a liability. PwC’s 2025 Global AI Jobs Barometer, which analyzed close to 1 billion job ads from six continents, found that AI-skilled workers earned an average 56% wage premium in 2024, up dramatically from the 25% premium recorded in the prior year’s study. That is not gradual drift. That is the kind of shift that breaks comp bands that were carefully built just twelve months earlier.

What makes this harder is that “AI talent” is not one clean category anymore. The role definitions themselves are fragmenting. An AI engineer at a fintech company and an AI engineer at a healthcare startup may share a job title but have almost nothing else in common: different skill requirements, different seniority expectations, and different salary ceilings. When you run those two roles through the same benchmark survey, you get an average that accurately describes neither.

The compensation benchmark problem is also partly a data source problem. Most widely cited salary reports draw from samples of a few thousand survey respondents. The error margins on those numbers are real, and in a market where talent is concentrated in specific cities and roles, small sample sizes get noisy fast. According to Glassdoor data cited in a White House Council of Economic Advisers report, the average annual base salary for an AI Engineer as of June 2024 was $204,000, compared to $92,000 for a Computer Engineer, but that average collapses the enormous range that exists between a junior AI developer in Austin and a principal research scientist in Palo Alto.

This is why the salary benchmarks in this report are built differently. Rather than surveys, they are derived from live job postings tracked through JobsPikr’s data pipeline, over 100 million postings, updated in real time. What companies are actively advertising in the market today is a more honest signal of what they are willing to pay than what HR leaders remember reporting on a survey last quarter.

Stop Setting Salary Bands Based on Last Year’s Numbers

Live market data means better offers, fewer declines, and stronger retention.

What “AI Talent” Actually Means in 2026: The Roles You’re Benchmarking

Before we get into the numbers, it is worth pausing on the phrase “AI talent” for a moment, because it means very different things depending on who is using it. A recruiter sourcing for a fintech firm, a workforce planner at a hospital, and a comp analyst at a hyperscaler are all hunting for “AI talent,” and they might be talking about completely different roles with completely different salary ceilings. If your compensation benchmarks do not start with clear role definitions, you are comparing apples to aircraft carriers.

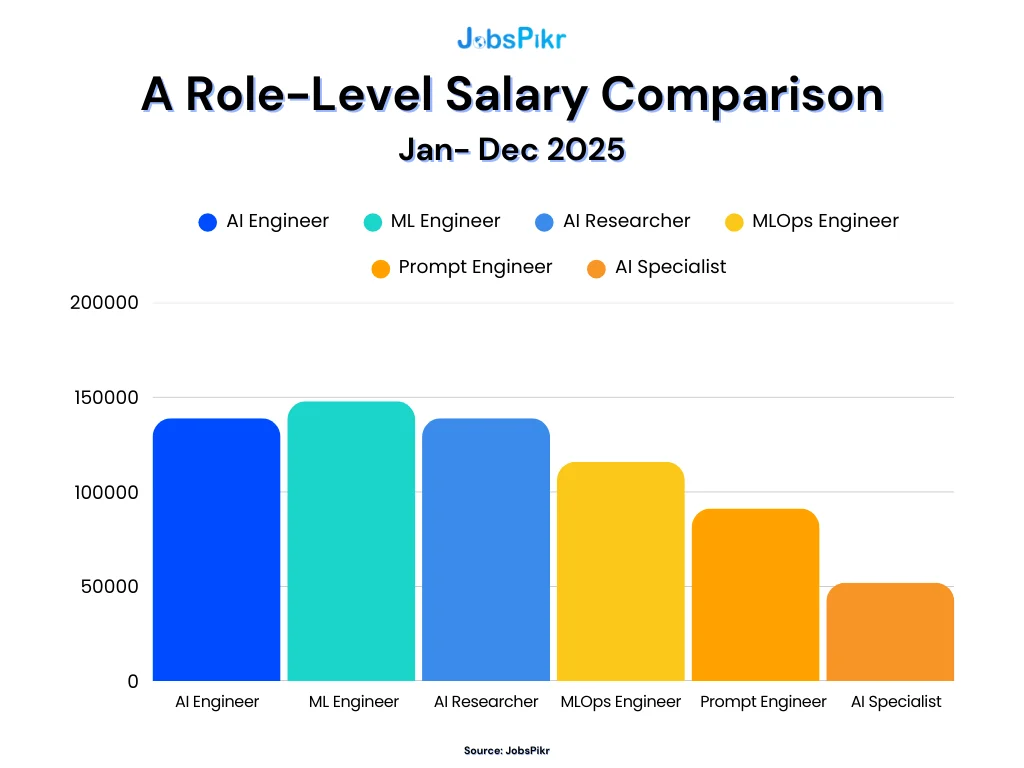

According to The Interview Guys, AI/ML Engineer titles represent 45% of all AI/ML job titles tracked in compensation benchmarking databases, with Senior AI/ML Engineer accounting for a further 15%. But even within that dominant category, the actual scope of work varies enormously. Here is how the key roles break down in 2026, and why each one needs its own salary benchmark.

AI Engineer

This is currently the most in-demand role in the AI talent market, and also the most broadly defined. At its core, AI engineering is about making machine learning models work in the real world, deploying models to production, and ensuring applications remain reliable, scalable, and integrated. Think of AI engineers as the people who take something that works in a research environment and make it work at scale for real users. In 2026, this role has expanded significantly to include building retrieval-augmented generation pipelines, working with vector databases, and integrating large language models into products. The scope is wide, which is exactly why salary ranges for this title tend to be the widest of all the AI roles.

Machine Learning Engineer

ML engineers sit slightly closer to the algorithmic and modeling side of the work. They design, train, and deploy ML models to solve business problems, and the field is increasingly considered a subset of software engineering, as the availability of pretrained models has made AI more accessible. Where an AI engineer might be integrating models into a product workflow, an ML engineer is more likely to be the person who built and validated the model itself. These roles command strong compensation premiums, particularly in data-heavy enterprise environments like finance and insurance, where model performance directly affects revenue.

AI Researcher

This is the highest-ceiling role in the AI talent market, and also the hardest to hire for. AI research scientists push the boundaries of what AI can do through novel research into algorithms, models, and architectures, and the average salary range for this role sits between $155,000 and $238,000 per year. Most companies pursuing genuine AI research at this level are competing directly with labs like OpenAI, Google DeepMind, and Meta AI for a very thin pool of people, most of whom hold PhDs in machine learning or computer science. For enterprise talent teams, the realistic question is whether you need a researcher or a well-resourced ML engineer; the comp difference is significant, and the supply difference is even more so.

MLOps Engineer

Often underrepresented in salary benchmark conversations, MLOps engineers are the people who keep AI systems running reliably after they are deployed. They manage the infrastructure, monitoring, and pipelines that ML teams depend on. According to Keller Executive Search, demand for this role has been growing steadily as companies move from “we built an AI system” to “we need our AI system to actually perform consistently in production.” AI/Machine Learning Engineer roles are experiencing the fastest growth among all AI job titles, with a 41.8% year-over-year increase as of Q1 2025, and MLOps roles make up a meaningful share of that growth.

Prompt Engineer / AI Specialist

This is the newest entry in the AI role taxonomy, and the one that generates the most confusion in compensation benchmarking. The global prompt engineering market is projected to grow at a compound annual growth rate of nearly 33% from 2024 to 2030. In practice, “prompt engineer” covers a wide range of actual work, from highly technical LLM fine-tuning to more workflow-oriented roles that sit closer to product or operations. Before benchmarking this role, it is worth being very specific about what the job requires, because the salary range reflects that spectrum.

Why Role Clarity Matters for Salary Benchmarking

The reason this taxonomy matters for compensation strategy is simple. The industry is moving so fast that these titles change definition every quarter, and companies now put “AI” in job descriptions to make roles seem more prestigious even when the actual work is more basic. If your comp team is benchmarking an “AI Engineer” role against a broad dataset that mixes research scientists, MLOps engineers, and prompt specialists into the same bucket, the resulting number is not useful for anyone. It is too high to reflect junior hybrid roles and too low to compete for senior research talent.

The salary benchmarks in the sections that follow break this out by role, where the job posting data is clear enough to draw clean distinctions, which is one advantage of working from live postings rather than survey averages.

Free AI Salary Range Benchmark Calculator

Plug in your roles, regions, and internal salaries and instantly see how your compensation stacks up against live market data, where your pay gaps are, and how your ranges hold across spread assumptions.

2026 AI Salary Benchmarks by Role: What the Job Market Is Actually Paying

Let’s get into the numbers. Before we do, one thing is worth flagging: the figures in this section are not pulled from a survey of HR managers. They come from live job postings tracked through JobsPikr’s data pipeline. What you are looking at is what companies are actively advertising, which is a more direct signal of real compensation intent than self-reported data.

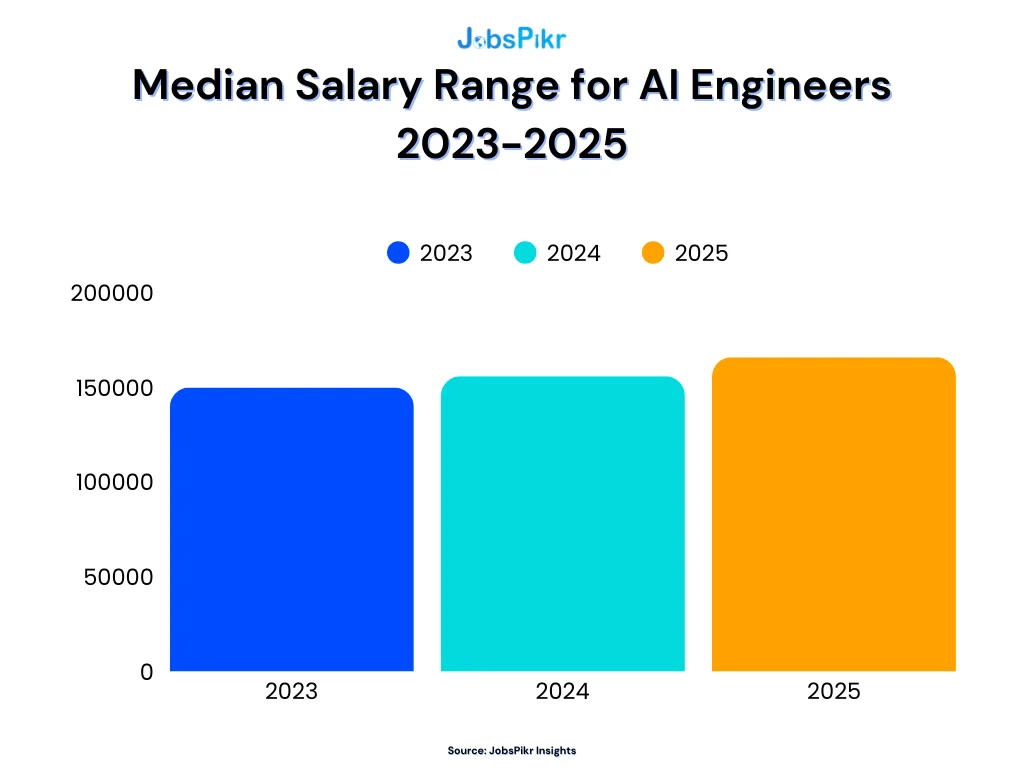

The Three-Year Salary Trajectory for AI Engineers

The first thing the data makes clear is that AI engineer compensation has not been sitting still.

The upward movement in the JobsPikr dataset from 2023 through 2025 is consistent and significant. The median offered salary for AI engineers has climbed steadily across all three years, reflecting a market where demand kept outpacing available supply. This is not a blip. It is a multi-year upward shift in comp bands.

External data tells the same story from a different angle. According to Second Talent, AI engineer salaries jumped to an average of $206,000 in 2025, a $50,000 increase from the previous year. That kind of year-over-year movement is unusual even by tech compensation standards. It reflects how quickly companies recalibrated their salary benchmarks once they started competing seriously for the same narrow pool of people.

What the chart also shows, if you look at the rate of growth, is that the pace has been accelerating. The jump from 2024 to 2025 is steeper than the jump from 2023 to 2024. That is the competitive dynamic compounding: more companies need AI capability, there are not enough engineers to meet that demand, and offers move up accordingly.

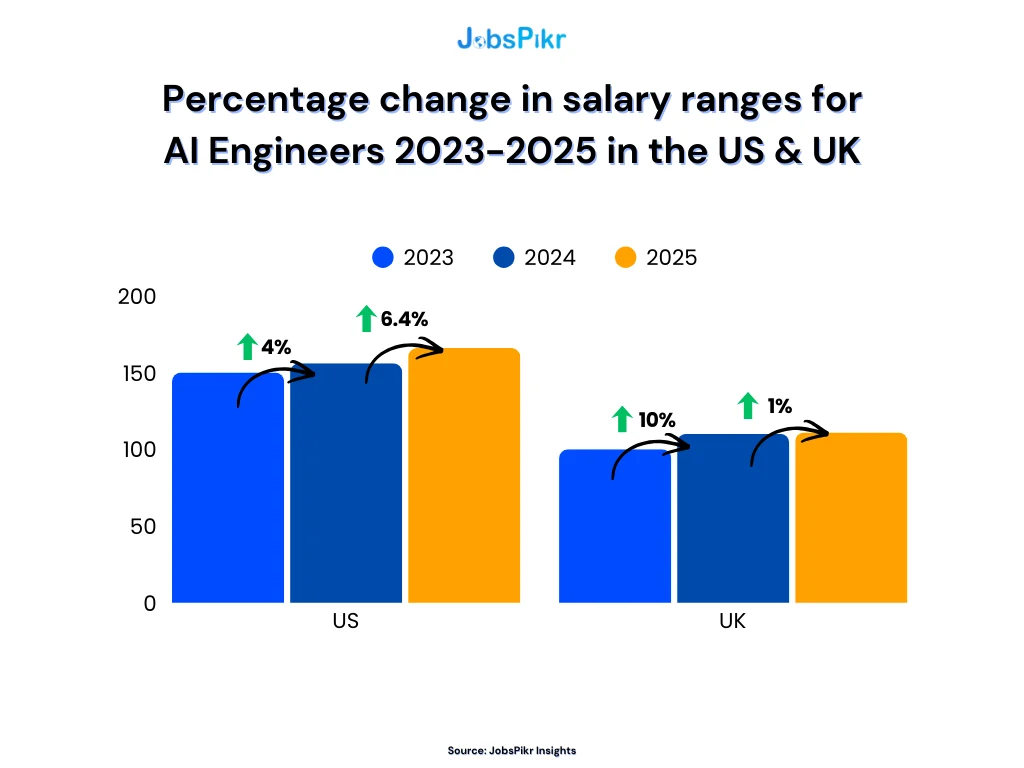

How Salary Growth Differs in the US vs. the UK

The country-level picture adds important nuance, because the headline global number obscures some meaningful regional patterns.

In the US, salary growth for AI engineers has been steady and compounding: 4% from 2023 to 2024, then accelerating to 6.4% into 2025. That is a market that is heating up, not cooling. The UK, by contrast, had a sharper initial jump of 10% into 2024, followed by a much flatter 1% increase into 2025. Two very different stories for the same role.
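Because the two markets' rates arrive in a different order, it helps to compound them to see where each ends up over the full period. A minimal sketch using only the growth rates quoted in the paragraph above; `cumulative_growth` is an illustrative helper, not part of any library.

```python
def cumulative_growth(rates):
    """Compound a sequence of year-over-year growth rates (given as fractions)."""
    factor = 1.0
    for r in rates:
        factor *= 1 + r
    return factor - 1


# Year-over-year rates quoted above: 2023->2024, then 2024->2025
us = cumulative_growth([0.04, 0.064])
uk = cumulative_growth([0.10, 0.01])

print(f"US 2023->2025: {us:.1%}")  # US 2023->2025: 10.7%
print(f"UK 2023->2025: {uk:.1%}")  # UK 2023->2025: 11.1%
```

On these figures, the two-year totals land close together (roughly 10.7% for the US and 11.1% for the UK). The divergence is mostly about timing, which a band refreshed from a single annual snapshot can easily miss.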

What explains the UK slowdown? A few things are likely at play. The initial surge in 2023–2024 brought UK AI compensation closer to parity with the broader European market faster than expected, which reduced some of the pressure to keep rising. Hiring also shifted partly toward contract and remote arrangements, which can dampen advertised salary growth in the full-time postings data. But the underlying demand has not disappeared; it has just settled into a different phase.

For compensation teams managing offers across both markets, this divergence matters. A comp band built on the assumption that US and UK AI salaries move in sync will be miscalibrated in at least one of those markets at any given time.

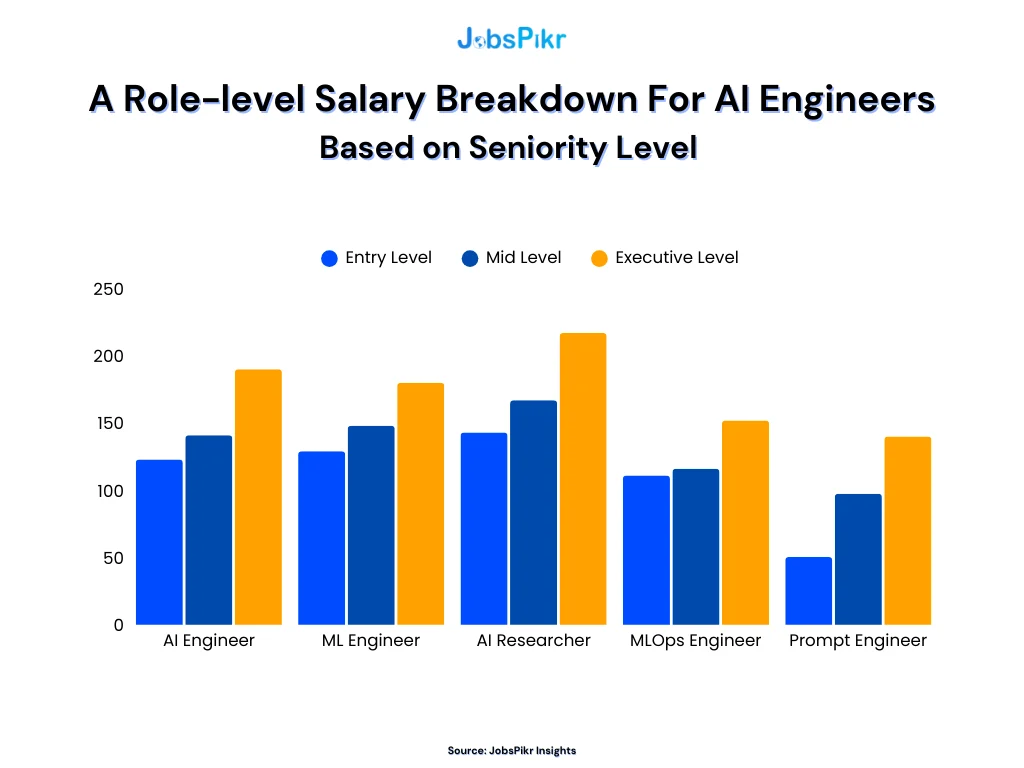

What Seniority Does to AI Salaries

The role-level picture gets even more interesting when you factor in seniority, and this is where the difference between a rough benchmark and a usable one becomes most visible.

At the staff engineer level, AI specialists earned 18.7% more than their non-AI peers in 2025, up from 15.8% in 2024, showing that the premium for experienced AI talent is widening, not narrowing, at senior levels. At the entry level, the gap is smaller and appears to be stabilizing. That pattern tells you something important about where the real supply constraint sits. Junior AI roles are getting easier to fill as more graduates enter the market with relevant training. Senior and staff-level AI roles remain genuinely scarce, and the comp premium reflects it.

The Remote Work Premium Hidden in AI Salary Data

One factor that does not always surface clearly in salary benchmarks is the remote work adjustment, and in AI roles, it is not a small number. A 2025 Dice analysis found that remote software engineers earn roughly 22% more on average than office-based roles, and the same dynamic applies to AI engineers, where the competition for niche skills means employers are broadening their hiring pools and competing across geographies rather than just within commuting distance of their offices.

For comp teams, this creates an important calibration question. If your salary benchmarks are built entirely from in-office or hybrid postings in a specific city, you may be systematically underestimating what fully remote offers look like in the same role. That gap can cost you candidates who are quietly running remote-first processes elsewhere.
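A quick sensitivity check shows the size of the gap the paragraph above warns about. This sketch assumes, loudly, that the Dice 22% average for remote software engineers carries over unchanged to your AI role; the $150,000 in-office benchmark is hypothetical, and `remote_gap` is an illustrative helper.

```python
# Assumption: Dice's 2025 average remote premium for software engineers applies as-is
REMOTE_PREMIUM = 0.22


def remote_gap(in_office_benchmark, premium=REMOTE_PREMIUM):
    """Return (implied remote figure, dollar gap) if the average premium held."""
    remote = in_office_benchmark * (1 + premium)
    return remote, remote - in_office_benchmark


remote, gap = remote_gap(150_000)  # hypothetical in-office benchmark
print(f"Implied remote figure: ${remote:,.0f} (gap ${gap:,.0f})")
# Implied remote figure: $183,000 (gap $33,000)
```

Treat the output as a rough signal, not an adjustment factor: the real premium for a given AI role and market could be larger or smaller, which is why checking remote-only postings directly is the safer input.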

Your Data Should Be as Smart as the Talent You’re Hiring For

Stop benchmarking AI salaries with data that’s already a year old. JobsPikr gives you live, role-specific compensation intelligence pulled from 100M+ job postings.

AI Salary Benchmarks by City: Where Pay Premiums Are Widening

The country-level numbers tell one story. The city-level data tells a much more useful one. The gap between what a company pays for an AI engineer in New York and what the same company pays for the same role in Atlanta is not a rounding error; it is a strategic decision that compounds over time across your entire headcount.

The US City-Level Picture: What JobsPikr’s Data Shows

Starting with the country-level baseline, the US median sits significantly higher than both the UK and India for AI engineering roles. The UK comes in at roughly two-thirds of the US figure, while India is a fraction of that, which reflects not just cost-of-living differences but also the maturity of the AI product ecosystem in each market and the density of enterprise AI investment.

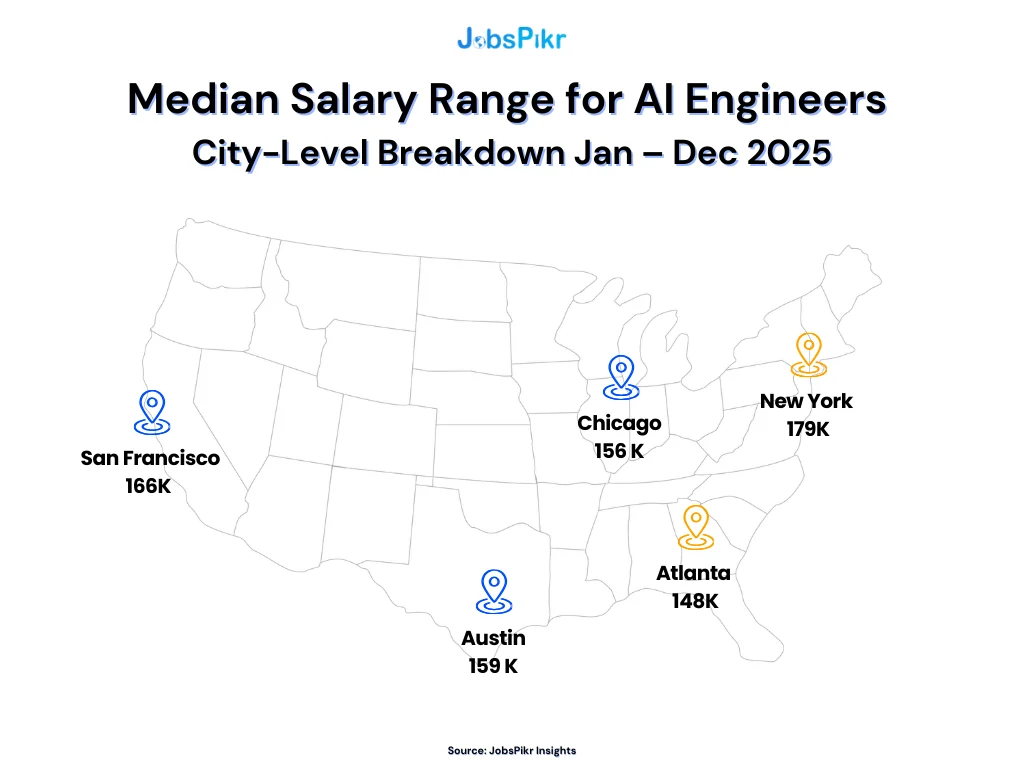

Within the US, the city-level breakdown from JobsPikr’s postings data reveals a $31,000 spread across just five major metros. New York leads at $179K, followed by San Francisco at $166K, Austin at $159K, Chicago at $156K, and Atlanta at $148K. That range across five cities, all of which are considered competitive, active AI hiring markets, tells you something important: your salary benchmarks need to be city-specific, not national averages.
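The spread is simple to reproduce, and expressing each metro as a percentage below the top market is often more useful than raw dollars when setting location differentials. A short sketch using the five JobsPikr medians quoted above; the variable names are illustrative.

```python
# Median offered salaries for AI engineers quoted above (JobsPikr postings data), in USD
city_medians = {
    "New York": 179_000,
    "San Francisco": 166_000,
    "Austin": 159_000,
    "Chicago": 156_000,
    "Atlanta": 148_000,
}

top = max(city_medians.values())
spread = top - min(city_medians.values())
print(f"Spread: ${spread:,}")  # Spread: $31,000

# How far each metro sits below the highest-paying one, as a share of the top median
for city, median in city_medians.items():
    gap_pct = (top - median) / top
    print(f"{city:<14} ${median:,}  {gap_pct:.1%} below top")
```

On these figures, Atlanta's median sits roughly 17% below New York's, while San Francisco is about 7% below.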

Once you adjust for cost of living, the picture shifts considerably: Austin, Jacksonville, and Houston lead the country in real earnings power for AI professionals, outpacing coastal hubs despite lower nominal salaries. That is a genuinely useful signal for workforce planners weighing build vs. buy decisions in different geographies. New York and San Francisco look expensive on paper. Austin and Chicago can look like strong value when you factor in what engineers are actually taking home after rent.

Where the Salary Variance Gets Extreme

The city-level median is one thing. The salary range within a city is another, and this is where compensation benchmarking often breaks down.

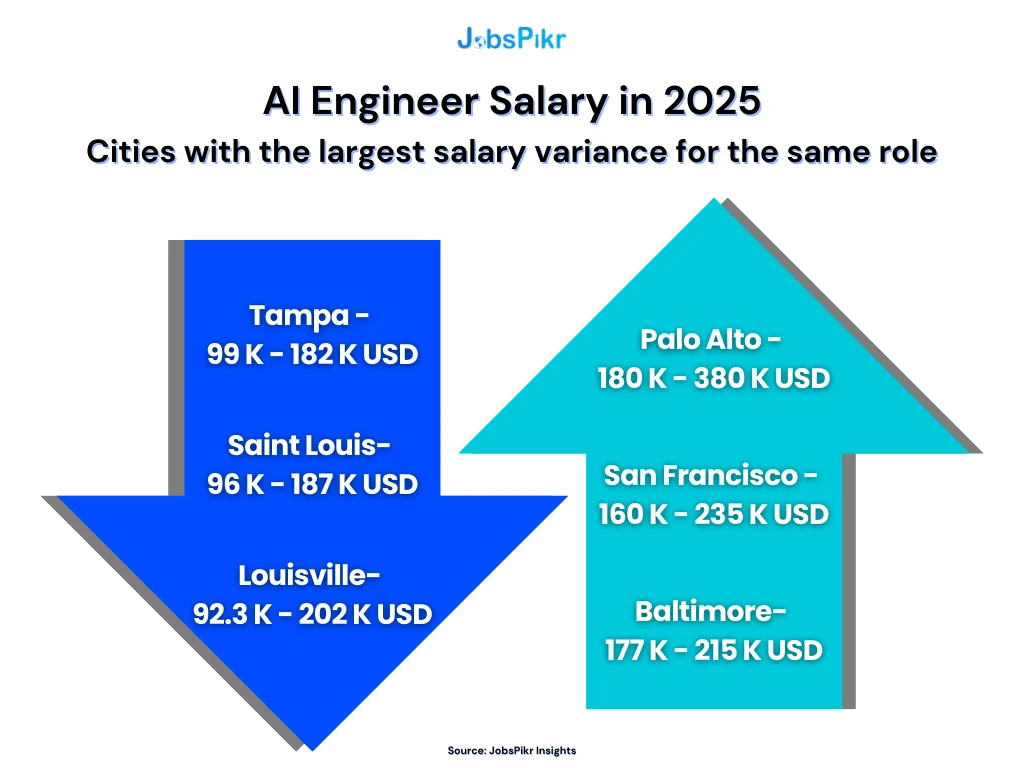

The variance data is striking. In Palo Alto, AI engineer salaries span from $180K to $380K for the same role title. San Francisco runs $160K to $235K. Baltimore, which does not get mentioned in most AI salary conversations, sits between $177K and $215K, a narrower band but a surprisingly high floor. On the lower end, Tampa ranges from $99K to $182K, Saint Louis from $96K to $187K, and Louisville from $92K to $202K.
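Comparing range width both in dollars and as a top-to-bottom ratio makes the variance easier to compare across markets with different pay levels. A minimal sketch using the city ranges quoted above (in thousands of USD); the variable names are illustrative.

```python
# Posted AI engineer salary ranges quoted above, in thousands of USD: (low, high)
city_ranges = {
    "Palo Alto": (180, 380),
    "San Francisco": (160, 235),
    "Baltimore": (177, 215),
    "Tampa": (99, 182),
    "Saint Louis": (96, 187),
    "Louisville": (92, 202),
}

# Absolute width (dollars) and relative width (top of range / bottom of range)
widths = {city: high - low for city, (low, high) in city_ranges.items()}
ratios = {city: high / low for city, (low, high) in city_ranges.items()}

# Print widest band first
for city in sorted(widths, key=widths.get, reverse=True):
    print(f"{city:<14} width ${widths[city]}K  top/bottom {ratios[city]:.2f}x")
```

Measured as a ratio, Louisville's band (about 2.20x) is proportionally slightly wider than Palo Alto's (about 2.11x), even though Palo Alto's is far wider in dollars. Wide bands are not only a Bay Area phenomenon.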

What the wide bands in cities like Palo Alto tell you is that “AI Engineer” is doing a lot of heavy lifting as a job title. The $380K end of that range is almost certainly a staff or principal engineer at a major AI lab or hyperscaler. The $180K floor is likely a mid-level engineer at a growth-stage company. Both are called “AI Engineer” in the posting data. Senior AI engineers at major tech companies in the San Francisco Bay Area frequently report total compensation exceeding $300,000, while Austin, Denver, and other mid-tier cities offer somewhat lower base salaries offset by significantly lower costs of living.

For comp teams, this variance is the argument for why a single benchmark number per city is not enough. You need to know where within that range your role actually sits, and that depends on seniority, specialization, company stage, and whether you are competing against Big Tech or growth-stage startups for the same person.

What This Means If You Are Hiring Across Multiple Cities

If your team is actively hiring AI engineers in more than one US market simultaneously, you are essentially managing multiple compensation strategies at once. The mistake most companies make is applying a single national benchmark with a mild location adjustment, which undershoots in New York, overshoots in Atlanta, and satisfies no one completely.
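To see how a single national number misfires, take the simplest possible national benchmark: the unweighted average of the five city medians quoted earlier. That is an assumption for illustration only; a real national benchmark would cover more metros and weight them by posting volume.

```python
# Median offered salaries for AI engineers quoted earlier (JobsPikr postings data), in USD
city_medians = {
    "New York": 179_000,
    "San Francisco": 166_000,
    "Austin": 159_000,
    "Chicago": 156_000,
    "Atlanta": 148_000,
}

# Simplifying assumption: "national" benchmark = unweighted mean of these five metros
national = sum(city_medians.values()) / len(city_medians)
print(f"Pooled benchmark: ${national:,.0f}")  # Pooled benchmark: $161,600

# Positive = benchmark above the local median (overshoot); negative = undershoot
deviations = {city: national - median for city, median in city_medians.items()}
for city, diff in deviations.items():
    direction = "overshoots" if diff > 0 else "undershoots"
    print(f"{city:<14} {direction} by ${abs(diff):,.0f}")
```

On these figures, the pooled $161,600 undershoots New York by $17,400 and overshoots Atlanta by $13,600, which is the pattern described above.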

Secondary tech markets like Austin, Denver, and Charlotte continue to offer strong AI compensation and growing opportunity pipelines, which makes them increasingly viable alternatives to the coastal hubs, particularly for companies that need scale without the Palo Alto price tag. The talent ecosystems in these cities are maturing, and the data shows it. You are no longer sacrificing quality to hire outside San Francisco. You are making a different trade-off, not a worse one.

The practical implication for workforce planning is straightforward. City-level salary benchmarks, refreshed from live postings rather than annual surveys, need to be a standard input in your offer approval workflow, not something you pull once a year and hope holds.

AI Compensation Trends by Industry: Who’s Paying the Most for AI Talent?

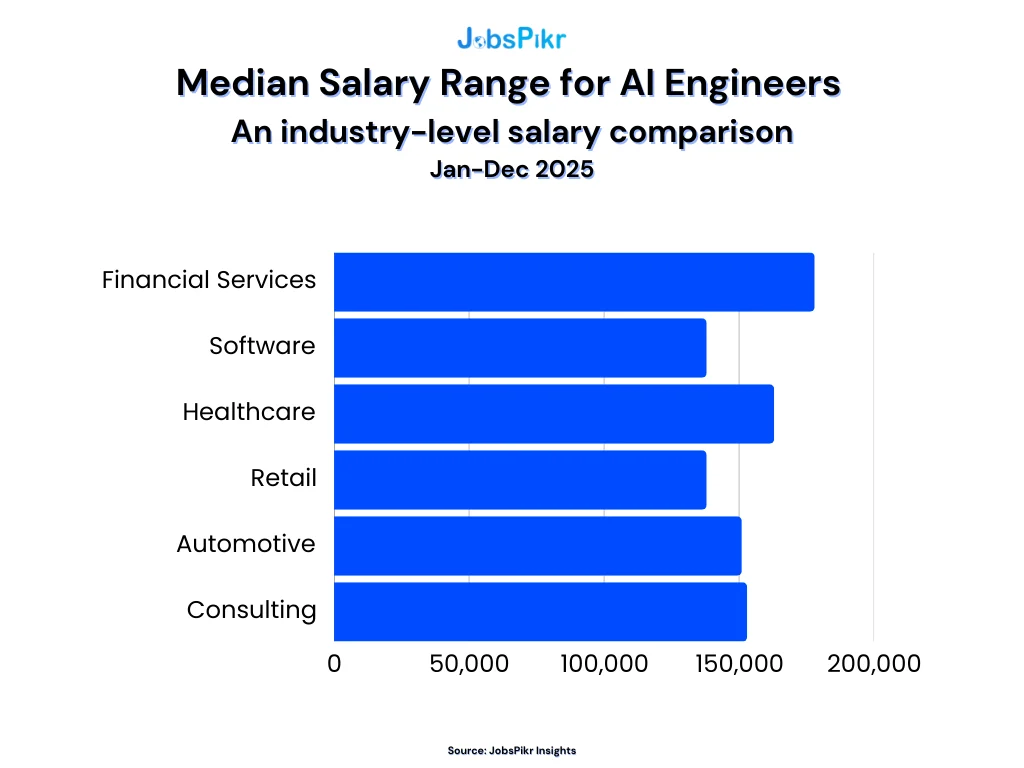

One of the most common mistakes comp teams make when running a compensation benchmark for AI roles is treating industry as a secondary variable. It is not. The industry your company sits in can shift the salary expectation for the same role by $40,000 to $80,000, sometimes more. Understanding where your organization sits in that spectrum is just as important as knowing the city-level numbers.

Over half of AI-related roles are now in non-tech industries, such as finance, healthcare, manufacturing, and others, meaning traditional companies that never used to hire AI experts are now offering highly competitive packages to do so. That broad adoption is part of what keeps pushing compensation higher across the board. Everyone is fishing in the same talent pool.

Finance and Fintech: The Highest Comp Floor Outside Big Tech

Finance has quietly become one of the most aggressive industries for AI talent compensation, and it has been for longer than most people acknowledge. The reason is straightforward: in finance, AI directly affects revenue. Whether it is a better trading algorithm, a sharper fraud detection model, or a more accurate risk assessment system, the financial upside is immediate and measurable. That makes it easier to justify premium compensation.

Elite hedge funds have offered base salaries around $175,000 to $225,000 for AI engineering roles, with leads and researchers reaching $250,000 to $300,000 before bonuses. And that is just base. Performance bonuses in quantitative finance can add substantially on top of that. For AI talent with a background in ML and an interest in financial systems, the finance sector often competes with Big Tech on total compensation and sometimes wins. Firms like Jane Street have offered around $325,000 for new-graduate software and quant engineers, with mid-career AI quants earning $500,000 to $1,000,000 or more. Those numbers sit at the extreme end of the market, but they reflect the ceiling that finance is willing to reach for the right people.

Technology: The Benchmark Everyone Else Is Measured Against

Big Tech sets the reference point that every other industry uses when justifying or defending its AI comp bands, fairly or not. An AI research scientist or engineer at a FAANG company can easily see total compensation in the mid-to-high six figures and beyond, with mid- to senior-level AI researchers often seeing total packages between $500,000 and $2,000,000 per year. Those are the outliers, but they shape candidate expectations for everyone downstream.

For companies that are not hyperscalers, the more relevant comparison is mid-market tech. Technology companies consistently offer the highest compensation overall, with financial services close behind, and both pay premium rates for specialized skills like fraud detection, algorithmic trading, and AI product management. The important nuance is that “tech” covers an enormous range, from a Series A startup paying equity-heavy packages to a mid-sized software company paying closer to the national average. Your benchmark needs to reflect which tier you are competing in, not just the category.

Healthcare: Competitive and Growing Fast

Healthcare’s AI compensation story has changed considerably over the last two years. The industry has historically lagged tech and finance on cash compensation, but the gap has been narrowing as health systems, biotech firms, and diagnostics companies move AI from pilot to production. AI engineers in healthcare generally earn between $145,000 and $200,000 per year, putting this sector close to finance in terms of compensation, particularly as organizations recognize the value of MLOps expertise for deploying AI models in clinical settings.

The more interesting development in healthcare is the difference between large health systems, which still tend toward public-sector-adjacent pay scales, and private-sector healthtech companies. A biomedical AI engineer at a genomics startup in a major tech hub may be paid comparably to any other mid-level AI engineer at a software company. But an equivalent role at a non-profit hospital network will look quite different. When benchmarking healthcare AI roles, company type matters as much as industry category.

Retail and E-Commerce: Underestimated and Accelerating

Retail tends to get left out of AI salary benchmark conversations, but that is increasingly a mistake. AI applications in retail (demand forecasting, personalization engines, inventory optimization, and dynamic pricing) are not experimental anymore. They are operational, and companies that have invested seriously in AI infrastructure are paying to maintain it. Finance and healthcare pay 15 to 25% premiums for AI talent over the general market, and large e-commerce players are beginning to approach those same levels for roles where AI directly touches revenue and margin.

Government and Academia: The Floor of the Market

For completeness, it is worth naming the bottom of the industry compensation range. Government agencies and academic institutions pay the least for AI talent, not because the work is less complex, but because the budget structures are different and the value capture is less direct. US assistant professors in AI-adjacent fields earn around $120,000 to $150,000 on a nine-month basis, while postdocs earn around $60,000 to $80,000. Government roles vary by agency and clearance level, but they consistently trail the private sector by a significant margin on cash compensation. The offset is usually stability, mission-driven work, and in some cases, lighter-than-industry working hours, but for pure salary benchmarking purposes, these are not the reference points most enterprise comp teams need to worry about.

What This Means for Your Compensation Strategy

The industry breakdown matters because it tells you something specific about the competitive set you are operating in. If you are a financial services firm benchmarking against general tech industry data, you are probably underestimating what you need to offer. If you are a healthcare organization benchmarking against hedge fund rates, you are likely creating comp bands that will never get approved and are not necessary to be competitive in your actual talent market.

Why Live Job Posting Data Beats Annual Surveys for Salary Benchmarking

If you have made it this far through this report, one thing should already feel obvious: the salary benchmarks that help you make decisions are the ones built on current market signals, not historical ones. But it is worth being direct about why the traditional compensation benchmark process has such a hard time keeping up and what a live data approach changes in practice.

The Structural Problem with Annual Salary Surveys

Traditional compensation surveys follow a structured process where participating employers submit pay data through standardized templates, the survey firm validates and cleans submissions, and the results are packaged into reports. Most operate on annual or semiannual cycles, which means the data can be 12 to 18 months old by the time you apply it. In a stable market, that lag is manageable. In the AI talent market of 2024 and 2025, it is genuinely damaging.

Annual cycles worked when labor markets were slower to change. But with accelerating demand for specialized skills like AI engineering or advanced data science, the time gap between locked-in salary surveys and real-time market shifts can be costly. By the time a survey that captured data in Q2 of last year gets published, purchased, and used by a comp team, the AI talent market has already moved through one or two more meaningful shifts in what companies are willing to offer.

There is also a participation problem that rarely gets discussed openly. Salary surveys rely on manual submissions, which makes the data error-prone and often sourced from legacy companies that rarely match your size, stage, or industry. If most of the companies contributing to a survey are large, established enterprises, the resulting benchmarks will systematically underrepresent what growth-stage companies and AI-native firms are paying, which happens to be the competitive set that matters most when you are hiring for AI roles.

What 100 Million Live Job Postings Tell You That a Survey Cannot

The fundamental advantage of pulling salary benchmarks from live job postings is not just freshness, though that matters enormously. It is the signal quality.

When a company posts a job with a salary range attached, that number reflects a real decision made by someone with a budget and a hiring deadline. It is not self-reported through a survey template. It is not averaged across companies of wildly different sizes and structures. It is what that employer, in that city, for that specific role, is willing to pay right now. Multiply that across 100 million postings and you get a compensation benchmark that is both current and grounded in actual hiring intent.

Organizations are increasingly relying on live compensation data rather than annual surveys to set pay ranges, because salary transparency, fair pay practices, and real-time benchmarking are no longer optional; they are essential to attracting and retaining top talent. The pay transparency trend is accelerating this shift. Multiple US states have rolled out regulations requiring salary ranges in job postings, while in Europe, the EU’s Pay Transparency Directive is poised to standardize disclosures across member states by 2026. More disclosed salary data in postings means more signal available for live benchmarking and less reason to depend on surveys that are always running one cycle behind.

How JobsPikr’s Salary Benchmarking Works in Practice

This is where the data you have been reading throughout this report comes from. JobsPikr tracks and structures job postings from across the web in real time, normalizing role titles, salary ranges, location data, and skill requirements into a consistent, queryable format. That means when a comp team wants to know what AI engineers are being offered in Austin right now, not six months ago, the answer is already in the data.

The salary benchmarks it produces are not averages of self-reported numbers. They are derived from what employers are actively advertising, which means they reflect current market conditions rather than what HR leaders remembered reporting during a survey intake process last spring.
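Salary normalization is one concrete piece of the structuring step described above: postings quote pay as ranges, single figures, "K" shorthand, or hourly rates, and all of it has to land on one annual scale before it can be compared. The sketch below is a hypothetical, simplified illustration of that step, not JobsPikr's implementation. It assumes US-dollar figures, treats a posting with no stated pay period as annual, and annualizes hourly pay with an assumed 2,080-hour year; `parse_salary` and `SalaryRange` are illustrative names.

```python
import re
from typing import NamedTuple, Optional


class SalaryRange(NamedTuple):
    low: float
    high: float  # equals low when a posting lists a single figure


# Assumed multipliers to annualize pay; 2,080 hours = 40 hours x 52 weeks.
PERIOD_TO_ANNUAL = {"hour": 2080, "hr": 2080, "month": 12, "mo": 12, "year": 1, "yr": 1}

# One amount: optional "$", digits with optional commas/decimals, optional "K" suffix.
_AMOUNT = r"\$?\s*(\d[\d,]*(?:\.\d+)?)\s*([kK])?"
_PATTERN = re.compile(
    _AMOUNT
    + r"(?:\s*(?:-|–|to)\s*" + _AMOUNT + r")?"  # optional upper bound
    + r"(?:\s*(?:/|per|an|a)\s*(hour|hr|month|mo|year|yr))?",  # optional pay period
    re.IGNORECASE,
)


def parse_salary(text: str) -> Optional[SalaryRange]:
    """Parse the first salary figure or range in `text` into annual (low, high) amounts."""
    match = _PATTERN.search(text)
    if match is None:
        return None
    low_num, low_k, high_num, high_k, period = match.groups()
    # Common shorthand: in "$150-180K" the trailing K applies to both bounds.
    low_k = low_k or high_k

    def to_number(num: str, k_suffix: Optional[str]) -> float:
        value = float(num.replace(",", ""))
        return value * 1000 if k_suffix else value

    low = to_number(low_num, low_k)
    high = to_number(high_num, high_k) if high_num else low
    # Assumption: a posting with no stated period is quoting annual pay.
    multiplier = PERIOD_TO_ANNUAL[period.lower()] if period else 1
    return SalaryRange(low * multiplier, high * multiplier)


print(parse_salary("$150K - $180K per year"))  # SalaryRange(low=150000.0, high=180000.0)
print(parse_salary("$85 - $95 an hour"))       # SalaryRange(low=176800.0, high=197600.0)
print(parse_salary("Competitive salary"))      # None
```

A production parser would need much more: currency detection, bonus and equity language, and postings where another number (a year, an address) appears before the salary, which this sketch would mistakenly pick up.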

The Pay Transparency Tailwind Making Live Data More Powerful

One trend that is quietly making job-posting-derived salary benchmarks more reliable is the rapid expansion of pay transparency legislation. When companies are required by law to include salary ranges in postings, the quality and coverage of the underlying data improve significantly. More than 68% of job postings in 2025 included salary ranges, up from just 45% in 2023. That is a meaningful increase in the share of postings that are useful for benchmarking purposes, and it is still growing.

For workforce planners, this trend has a direct practical benefit. As more companies disclose ranges in postings, the gap between what live posting data shows and the “true” market rate continues to close. The data gets better as transparency increases, which means a platform that is already tracking postings at scale will only become more valuable as a benchmarking source, not less.

When to Use a Salary Calculator vs. Full Benchmarking Data

A salary calculator is a useful starting point when you need a quick directional number, for example, checking whether a candidate’s expectation is roughly in range before you get deep into an interview process. But for decisions that matter (setting comp bands, planning headcount budgets, adjusting offers in a competitive process, or auditing pay equity across a team), a calculator built on static inputs is not the right tool.

Full salary benchmarking from live postings gives you the distribution, not just the midpoint. It shows you the floor, the ceiling, and how the range shifts by city, seniority level, and industry. That is the difference between knowing the market sits somewhere around $160K and knowing that in Austin the range runs $130K to $195K, the 75th percentile is $172K, and offers below $150K have been declining at an increasing rate over the last six months. The first answer just confirms you are in the right ballpark. The second helps you build a comp strategy.
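Getting from raw postings to a floor, ceiling, and 75th percentile is a small calculation. The sketch below assumes each posting has already been reduced to a single number (here, the midpoint of its advertised range). It uses linear interpolation between ranks, which is the same convention as NumPy's default `percentile`. The nine Austin values are hypothetical and were chosen only to echo the figures in the paragraph above. They are not JobsPikr data, and `summarize` is an illustrative name.

```python
def percentile(values: list[float], pct: float) -> float:
    """Percentile by linear interpolation between closest ranks (NumPy's default method)."""
    ordered = sorted(values)
    if not ordered:
        raise ValueError("need at least one value")
    rank = (len(ordered) - 1) * pct / 100
    lower = int(rank)
    upper = min(lower + 1, len(ordered) - 1)
    return ordered[lower] + (ordered[upper] - ordered[lower]) * (rank - lower)


def summarize(posted_midpoints: list[float]) -> dict[str, float]:
    """Distribution summary for one role/city segment."""
    return {
        "floor": min(posted_midpoints),
        "p25": percentile(posted_midpoints, 25),
        "median": percentile(posted_midpoints, 50),
        "p75": percentile(posted_midpoints, 75),
        "ceiling": max(posted_midpoints),
    }


# Hypothetical midpoints of nine Austin AI engineer postings (illustrative only).
AUSTIN_SAMPLE = [130_000, 142_000, 150_000, 154_000, 159_000,
                 165_000, 172_000, 180_000, 195_000]

for label, value in summarize(AUSTIN_SAMPLE).items():
    print(f"{label:>7}: ${value:,.0f}")
```

A production version would also need a minimum sample size per segment and a decision about whether to use range midpoints, minimums, or maximums. The paragraph does not say which of these JobsPikr uses.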

What 2026 AI Salary Benchmarks Mean for Your Compensation Strategy

Numbers without context are just noise. The data in this report only becomes useful when it connects to actual decisions on how you set comp bands, how you approach offers, how you plan headcount budgets, and how you avoid the retention problems that tend to show up quietly before they show up loudly. This section is about that connection.

Stop Treating AI Comp Bands Like They Age Well

The single most damaging assumption in enterprise compensation strategy right now is that a well-built comp band holds for 18 months. In most roles, that assumption is fine. In AI, it is how you lose candidates in the final round without understanding why.

AI and ML hiring grew 88% year-on-year in 2025, and the compensation expectations attached to that demand climbed with it. A successful AI compensation strategy needs ongoing re-evaluation, because what worked in 2023 or even 2025 may need meaningful adjustment by 2026. That is not a suggestion to rebuild your comp structure every quarter. It is an argument for building in a regular cadence of market checks using live labor market data, not annual survey refresh cycles.

The practical fix is straightforward: treat AI roles as a separate compensation review category with a shorter refresh cycle than the rest of your tech roles. Set a quarterly or semi-annual trigger to check live posting data for your key AI titles in your key hiring markets. If the market has moved by more than 8 to 10 percent since your last band update, the band needs to move too.
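The 8 to 10 percent trigger comes down to a single comparison. Here is a minimal sketch. It assumes you store the midpoint each band was set against and can pull a current market median for the same title and market. The function names and sample figures are hypothetical.

```python
def market_drift_pct(band_midpoint: float, market_median: float) -> float:
    """Percent the market median has moved since the band was set (either direction)."""
    return abs(market_median - band_midpoint) / band_midpoint * 100


def band_needs_update(band_midpoint: float, market_median: float,
                      threshold_pct: float = 8.0) -> bool:
    """True when drift exceeds the review threshold (8 to 10 percent per the guidance above)."""
    return market_drift_pct(band_midpoint, market_median) > threshold_pct


# Hypothetical checks for two AI titles at a quarterly review.
print(band_needs_update(150_000, 165_000))  # True: market moved 10%
print(band_needs_update(160_000, 168_000))  # False: market moved 5%
```

Because the drift is taken as an absolute value, a cooling market also triggers a review. Drop `abs` if you only want to react to upward moves.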

The Internal Equity Problem Nobody Wants to Talk About

When companies start competing aggressively for AI talent, they often end up with a quiet but serious internal problem. If an AI researcher earns double what a senior engineer on another team makes, that gap causes real friction. It also becomes a retention risk, not just for that senior engineer but for the broader team around them.

This is one of the less-discussed costs of reactive AI hiring. When you chase market rates for new hires without auditing what your existing team is earning, you create compression that turns into turnover. The people who leave first are usually the ones who have been there longest and know the delta best.

The fix requires using your salary benchmarks proactively, not just reactively. Before you post a role, run your current team’s compensation against the same live market data you are using for the offer. If the gap is significant, address it before it becomes an exit conversation.
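One common way to run that check is a compa-ratio: each person's base salary divided by the market median for their role and city. The sketch below flags anyone under a 0.90 ratio. That cutoff, the employee records, and the function names are hypothetical. The two market medians are the Austin and Chicago AI engineer figures quoted in this article's FAQ.

```python
from dataclasses import dataclass


@dataclass
class Employee:
    employee_id: str
    role: str
    city: str
    base_salary: float


def flag_below_market(team: list[Employee],
                      market_medians: dict[tuple[str, str], float],
                      min_ratio: float = 0.90) -> list[tuple[str, float]]:
    """Return (employee_id, compa-ratio) for everyone paid below min_ratio of market."""
    flagged = []
    for emp in team:
        median = market_medians.get((emp.role, emp.city))
        if median is None:
            continue  # no live benchmark for this role/city; review manually
        ratio = emp.base_salary / median
        if ratio < min_ratio:
            flagged.append((emp.employee_id, round(ratio, 2)))
    return flagged


MARKET_MEDIANS = {("AI Engineer", "Austin"): 159_000, ("AI Engineer", "Chicago"): 156_000}
TEAM = [  # hypothetical current employees
    Employee("emp-01", "AI Engineer", "Austin", 138_000),
    Employee("emp-02", "AI Engineer", "Chicago", 150_000),
    Employee("emp-03", "AI Engineer", "Austin", 162_000),
    Employee("emp-04", "ML Researcher", "Austin", 140_000),
]
print(flag_below_market(TEAM, MARKET_MEDIANS))  # [('emp-01', 0.87)]
```

Skipping roles without a benchmark keeps the check honest. A missing segment is a gap in your data, not evidence that someone is paid fairly.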

Build Flexibility Into How You Structure AI Compensation

The smarter approach is to build flexibility into your compensation through market premiums with review cycles, enhanced equity packages, and retention bonuses, rather than locking in permanent base salary increases you cannot walk back if the market cools. This matters especially in 2026, when the AI talent market is still hot but the pace of growth has started to differentiate by role and seniority.

What the leading AI labs are doing is instructive here, even if you cannot match their numbers. In August 2025, OpenAI offered retention bonuses ranging from $300,000 to $1.5 million to nearly 1,000 employees, with senior researchers able to choose among cash, equity, or hybrid structures, explicitly to counter poaching from competitors. For most enterprise comp teams, the structure of that offer is more useful than the numbers themselves. Hybrid structures that give employees optionality on how they receive value tend to outperform pure base salary increases for retention, because they signal flexibility and trust alongside the financial commitment.

For companies that are not competing at OpenAI scale, the same principle applies at a more grounded level. A well-structured equity refresh, a clear performance bonus tied to AI project milestones, or a retention bonus with a reasonable vesting window can often close a competitive gap more efficiently than a flat base salary increase, and it gives you more room to adjust if market conditions shift.

The Warning Signs That Your Comp Data Is Already Stale

There are a few practical signals that tell you your salary benchmarks are no longer working, and they tend to show up before your ATS data makes them obvious.

The first is offer decline rates creeping up without clear candidate feedback about why. When candidates reach the offer stage and decline, comp is often the real reason, but it is rarely the stated one. If your offer acceptance rate for AI roles has dropped over the last two quarters, the benchmark you are using to set those offers deserves a hard look.

The second is time-to-fill stretching for AI roles specifically, even when your sourcing volume has not changed. A longer funnel often means candidates are running parallel processes and accepting faster elsewhere, usually because another offer came in higher.

The third is when your own AI team starts talking about what their network is being offered elsewhere. If you have hired well, your AI engineers have strong networks and will know when the market has moved. They can alert you when colleagues elsewhere are getting better deals, or when they themselves are being headhunted. That conversation, when it starts happening, is a direct signal that your benchmarks need refreshing.
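The first two signals can be monitored with data most ATS exports already contain. Here is a minimal sketch. It assumes you can pull, per quarter for AI roles, the number of offers extended, offers accepted, median days to fill, and candidates sourced. The record type, the 10% tolerance for "flat" sourcing, and the sample quarters are all hypothetical. The third signal is conversational and does not reduce to a metric.

```python
from dataclasses import dataclass


@dataclass
class QuarterMetrics:
    offers_extended: int
    offers_accepted: int
    median_days_to_fill: float
    candidates_sourced: int

    @property
    def acceptance_rate(self) -> float:
        return self.offers_accepted / self.offers_extended


def stale_benchmark_signals(quarters: list[QuarterMetrics],
                            flat_tolerance: float = 0.10) -> list[str]:
    """Check the last three quarters (oldest first) for the first two warning signs."""
    if len(quarters) < 3:
        raise ValueError("need at least three quarters of data")
    q0, q1, q2 = quarters[-3:]
    signals = []
    # Signal 1: acceptance rate fell in each of the last two quarters.
    if q2.acceptance_rate < q1.acceptance_rate < q0.acceptance_rate:
        signals.append("offer acceptance down two quarters running")
    # Signal 2: time-to-fill grew while sourcing volume stayed roughly flat.
    sourcing_change = abs(q2.candidates_sourced - q0.candidates_sourced)
    sourcing_flat = sourcing_change <= flat_tolerance * q0.candidates_sourced
    if q2.median_days_to_fill > q0.median_days_to_fill and sourcing_flat:
        signals.append("time-to-fill up with flat sourcing volume")
    return signals


history = [  # hypothetical quarters, oldest first
    QuarterMetrics(20, 16, 45, 400),
    QuarterMetrics(20, 14, 52, 410),
    QuarterMetrics(20, 12, 61, 395),
]
print(stale_benchmark_signals(history))
```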

What Good Salary Benchmarking Actually Looks Like in Practice

Good salary benchmarking for AI roles in 2026 is not a once-a-year exercise. It is a workflow. It starts with live postings data segmented by role, city, seniority, and industry. It feeds into comp band reviews on a regular cycle. It informs offer approval processes so that exceptions have data behind them rather than gut instinct. And it connects to retention analysis so you can see whether your current team’s compensation is drifting out of market before it shows up as turnover.
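The first step in that workflow, segmenting live postings, can be sketched with the standard library alone. The example assumes postings have already been normalized into (role, city, seniority, industry, salary_min, salary_max) records. It summarizes each segment by the median of the range midpoints. The records, field layout, and `segment_medians` name are hypothetical.

```python
from collections import defaultdict
from statistics import median

# Hypothetical normalized postings:
# (role, city, seniority, industry, salary_min, salary_max)
POSTINGS = [
    ("AI Engineer", "Austin", "Senior", "Fintech", 150_000, 190_000),
    ("AI Engineer", "Austin", "Senior", "Fintech", 140_000, 180_000),
    ("AI Engineer", "Austin", "Mid", "Healthcare", 120_000, 150_000),
    ("AI Engineer", "Chicago", "Senior", "Fintech", 145_000, 175_000),
]


def segment_medians(postings, min_postings: int = 1) -> dict[tuple, float]:
    """Median advertised midpoint per (role, city, seniority, industry) segment."""
    buckets: dict[tuple, list[float]] = defaultdict(list)
    for role, city, seniority, industry, low, high in postings:
        buckets[(role, city, seniority, industry)].append((low + high) / 2)
    # Skip thin segments so one outlier posting cannot set a band on its own.
    return {seg: median(mids) for seg, mids in buckets.items()
            if len(mids) >= min_postings}


for segment, value in segment_medians(POSTINGS).items():
    print(segment, f"${value:,.0f}")
```

In practice you would set `min_postings` well above 1. With `min_postings=2`, only the Austin senior fintech segment survives in this toy data.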

Pay equity and transparency are top priorities for global employers in 2026, with the EU Pay Transparency Directive being transposed into national laws and tighter regulations emerging in Canada, Australia, and the US. That external pressure is not just a compliance issue. It is an accelerant for building better benchmarking infrastructure, because the same data discipline that keeps you compliant also keeps your offers competitive.

This is the shift that separates organizations that consistently hire and retain AI talent from those that are perpetually in catch-up mode. The companies that treat salary benchmarking as a live, ongoing function rather than an annual report they pull from a vendor are the ones whose offers land, whose teams stay, and whose AI roadmaps execute.

Free AI Salary Range Benchmark Calculator

Plug in your roles, regions, and internal salaries and instantly see how your compensation stacks up against live market data, where your pay gaps are, and how your ranges hold across spread assumptions.
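The calculator's internal method is not described here. Range spread, however, has a standard definition in compensation practice: spread = (max - min) / min, with the midpoint halfway between the two. Below is a minimal sketch that builds a range from a midpoint and a spread assumption, then locates an internal salary inside it. The function names are hypothetical. The $162,500 midpoint and 50% spread reproduce the $130K to $195K Austin range quoted earlier in this article.

```python
def range_from_midpoint(midpoint: float, spread: float) -> tuple[float, float]:
    """Min and max for a band, with spread defined as (max - min) / min."""
    # midpoint = (min + max) / 2 and max = min * (1 + spread)
    # => min = 2 * midpoint / (2 + spread)
    minimum = 2 * midpoint / (2 + spread)
    return minimum, minimum * (1 + spread)


def spread_of(minimum: float, maximum: float) -> float:
    return (maximum - minimum) / minimum


def range_penetration(salary: float, minimum: float, maximum: float) -> float:
    """Where a salary sits in the band: 0.0 at the minimum, 1.0 at the maximum."""
    return (salary - minimum) / (maximum - minimum)


low, high = range_from_midpoint(162_500, 0.50)
print(low, high)                                         # 130000.0 195000.0
print(spread_of(130_000, 195_000))                       # 0.5
print(round(range_penetration(150_000, low, high), 2))   # 0.31
```

To see how sensitive the band edges are to that one assumption, rerun the same midpoint at a 40% or 60% spread.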

The AI Talent Market Is Not Slowing Down, But Your Benchmarks Might Be

If there is one thing this data makes clear, it is that AI compensation is not stabilizing anytime soon. Salaries are climbing, the gap between cities is widening, industries are competing outside their usual talent pools, and the shelf life of a comp band built on last year’s survey data is getting shorter every quarter.

The companies that will hire and retain the best AI talent in 2026 treat compensation analytics as a live function, not a once-a-year report, and that shift shows up directly in offer acceptance rates and retention. They are the ones with the most accurate picture of what the market looks like right now by role, by city, by industry, and by seniority level. That accuracy is the difference between an offer that lands and one that gets declined for a reason the hiring team never quite pins down.

Salary benchmarking used to be something comp teams did once a year. In the AI talent market, that cadence is no longer enough. The data needs to be live, specific, and connected to real hiring decisions, not filed in a folder until next year’s planning cycle.

That is exactly what JobsPikr is built for. With over 100 million job postings tracked in real time, it gives talent acquisition leaders, workforce planners, and comp teams the signal they need to stay ahead of the market rather than catch up to it.

Your Data Should Be as Smart as the Talent You’re Hiring For

Stop benchmarking AI salaries with data that’s already a year old. JobsPikr gives you live, role-specific compensation intelligence pulled from 100M+ job postings.

Frequently Asked Questions

1. What is the average AI engineer salary in 2026?

The median AI engineer salary in the United States sits at approximately $160,000 annually in 2026, but that number moves significantly based on location, seniority, and the specific nature of the role. In high-density AI markets like Palo Alto and New York, the ranges extend well beyond that, with senior and staff-level engineers regularly seeing total compensation built on base salaries north of $200,000 plus equity. Entry-level positions typically start between $70,000 and $120,000, while principal engineers and AI researchers at major tech firms can reach $300,000 and above on base alone. The most useful benchmark is not a single national average but a role-specific, city-specific range derived from live job postings, which is exactly what JobsPikr’s salary benchmarking data provides.

2. How do salary benchmarks for AI roles differ by city?

The city-level variation in AI compensation is one of the most practically useful and most underused inputs in enterprise comp strategy. Based on JobsPikr’s job postings data for January through December 2025, US city medians for AI engineers ranged from $148,000 in Atlanta to $179,000 in New York, with San Francisco at $166,000, Austin at $159,000, and Chicago at $156,000. Beyond the medians, the within-city variance is equally important: Palo Alto shows a range of $180,000 to $380,000 for the same role title, reflecting the wide seniority and company-type mix at the high end of the market. A national salary benchmark applied with a simple location adjustment will still miss the local range, on either the high side or the low side, in most cities.
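The size of that miss is easy to check against the city medians above. Here is a minimal sketch. It assumes the roughly $160,000 national median from the previous answer is applied with no location adjustment at all. Positive numbers mean the national figure overstates the local median. Negative numbers mean it understates it. The function name is hypothetical.

```python
NATIONAL_MEDIAN = 160_000  # approximate US median from the previous answer
CITY_MEDIANS = {           # JobsPikr 2025 city medians quoted above
    "Atlanta": 148_000,
    "Chicago": 156_000,
    "Austin": 159_000,
    "San Francisco": 166_000,
    "New York": 179_000,
}


def national_benchmark_error(national: float,
                             city_medians: dict[str, float]) -> dict[str, float]:
    """Percent by which one national figure over- (+) or under- (-) states each city median."""
    return {city: round((national - local) / local * 100, 1)
            for city, local in city_medians.items()}


for city, error in national_benchmark_error(NATIONAL_MEDIAN, CITY_MEDIANS).items():
    print(f"{city:<14} {error:+.1f}%")
```

The flat number overstates Atlanta by about 8% and understates New York by more than 10%. Even where the medians line up, as in Austin, a single number says nothing about within-city spread like Palo Alto's $180,000 to $380,000 range.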

3. What is the difference between a compensation benchmark and a salary survey?

A compensation benchmark is a data point derived from observed market activity: what employers are actively offering in job postings or paying according to employment records. A salary survey is a structured collection of self-reported data from participating employers, usually run on an annual cycle. The practical difference is timeliness and signal quality. Surveys capture what companies reported paying at a specific point in the past, filtered through what survey respondents chose to include. Benchmarks derived from live job postings reflect current hiring intent, are not subject to survey participation bias, and update continuously as the market moves. In a fast-moving market like AI talent, that distinction matters enormously: a survey from 12 months ago can be off by $30,000 or more for a role where compensation has been compounding upward.

4. Which industries are paying the most for AI talent in 2026?

Financial services and Big Tech set the upper bound of AI compensation across most role categories. Elite quantitative finance firms have advertised base salaries of $175,000 to $225,000 for AI engineering roles, with performance bonuses that can substantially exceed base pay. Large tech companies remain the reference point against which most other industries benchmark, with total compensation for senior AI researchers at hyperscalers routinely exceeding $500,000 when equity is included. Healthcare and biotech have been closing the gap steadily, with AI engineers in that sector now earning between $145,000 and $200,000 on base. Retail and e-commerce have also moved upward as AI applications in those environments shift from experimental to operational. Government and academic institutions remain at the lower end, though research-focused government roles with security clearance requirements can carry meaningful premiums.

5. How do I use a salary calculator for AI roles in my hiring strategy?

A salary calculator is a useful starting point for quick directional checks, such as confirming that a candidate’s expectations fall within a rough range before you invest heavily in the process, or giving a hiring manager a fast reference point mid-conversation. But it is not a substitute for full salary benchmarking when the decision matters. For setting comp bands, planning headcount budgets, running pay equity audits, or making offers in competitive processes, you need the distribution behind the number: the floor, the ceiling, where the 75th percentile sits, and how that range has moved over the last six to twelve months. JobsPikr’s salary benchmarking tool gives you that full picture across roles, cities, and industries, built from live job postings rather than static calculator inputs. The demo linked in this article walks through exactly how that data works in practice.