AI Layoffs 2026: What the Data Actually Shows Before You Read On

The headlines are confident. The press releases are decisive. The data, pulled from 70,000+ live job posting sources, tells a different story.

This report uses real-time JobsPikr job posting intelligence to examine the AI layoff 2026 wave and what the data shows when you look past the press releases. Here’s what it found.

- The roles being cut were already declining. Customer service representative postings dropped 24.9% across 18 months before AI was widely cited as the cause. Companies are giving a single headline to a multi-year trend.

- AI enablement roles are surging at the same companies that are making cuts. AI Engineer postings grew 654% from H1 2024 to H2 2025. You don’t hire that aggressively to manage a technology that’s replacing people. You hire that aggressively because you need humans to run AI.

- The skills gap is three skills wide and trainable. Content writers already share 71% skill overlap with the AI Content Strategist roles replacing them. The reskilling case is stronger than the redundancy case. Most companies haven’t run the numbers.

- Half of the employers that cut will quietly rehire, Forrester predicts. The geographic data shows the rehiring is already starting offshore.

- Most AI workforce decisions are the wrong type. There’s a measurable difference between cuts backed by data and cuts backed by investor pressure. This report shows you how to tell them apart.

All job posting data in this report is drawn from the JobsPikr platform, which aggregates and normalises listings across 70,000+ sources globally, including job boards, company career pages, and staffing sites, updated daily. Role-level trends, skill frequencies, salary ranges, and geographic breakdowns are derived from postings collected between H1 2024 and Q1 2026.

In 2025, 55,000 U.S. workers lost their jobs because their employers cited AI. Not because AI had proven it could do their jobs, but because their employers believed it eventually would. Twelve months later, 55% of those same employers reportedly regret the decision. Forrester predicts that half will quietly rehire, often at lower salaries, often offshore.

The uncomfortable truth: most AI layoff 2026 decisions are being made on instinct, investor pressure, and headline anxiety. Very few are being made on actual labour market data. This report breaks down the ROI reality behind AI workforce cuts and shows what it looks like when talent intelligence teams get it right.

The AI Layoff Wave: What the Numbers Actually Show

In 2025, U.S. employers cut 1.17 million jobs, the highest total since the height of the COVID-19 pandemic. Of those, 55,000 were explicitly attributed to AI, according to Challenger, Gray & Christmas. That’s twelve times more than two years prior. By Q1 2026, tech layoffs alone had surpassed 45,000, with Amazon accounting for 52% of those cuts.

The scale is real. But scale alone doesn’t tell you the cause.

The AI layoffs of 2025 and early 2026 have been framed overwhelmingly as an efficiency story: companies streamlining headcount because AI has made certain roles redundant. That framing carries a financial incentive worth examining: AI-related stocks have driven approximately 75% of S&P 500 returns since the launch of ChatGPT. In that environment, attributing a layoff to AI isn’t just an operational decision. It’s a market signal. And companies know it.

The job posting data tells a more nuanced story.

“AI layoffs 2026 refers to the wave of workforce reductions made by U.S. and global employers in 2025 and into 2026 where artificial intelligence was cited as the primary cause. Approximately 55,000 U.S. jobs were attributed to AI in 2025 alone, twelve times the figure from two years prior.”

Challenger, Gray & Christmas

The Roles Being Cut Were Already in Decline

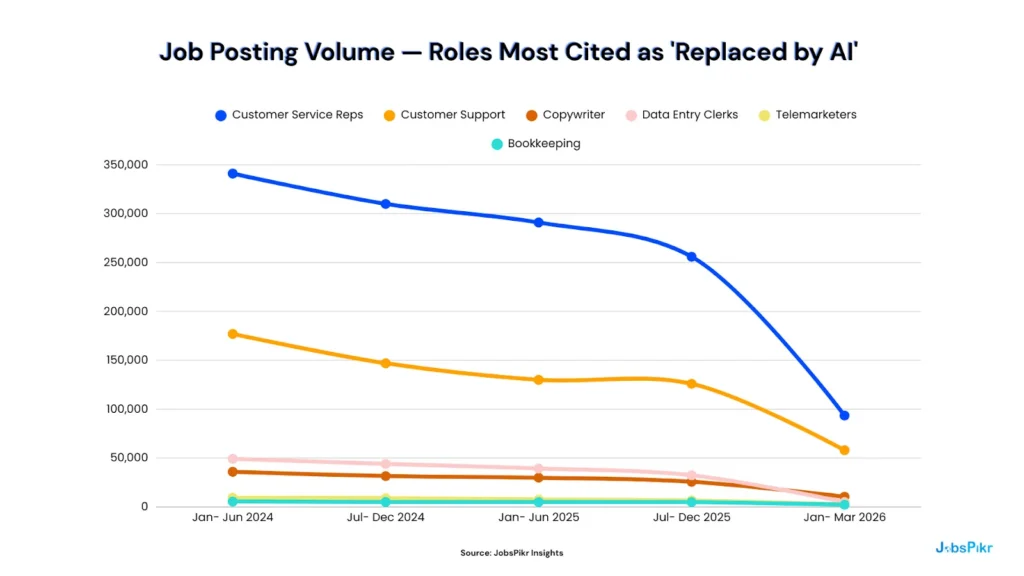

JobsPikr tracks job posting volumes across 70,000+ sources globally. When you plot the demand trend for the roles most commonly cited as AI casualties, a pattern emerges that predates the wave of AI layoff announcements in 2025 and 2026.

Job Posting Volume: Roles Most Cited as ‘Replaced by AI’

| Role | H1 2024 | H2 2024 | H1 2025 | H2 2025 | Q1 2026* | Decline H1 ’24–H2 ’25 |
| --- | --- | --- | --- | --- | --- | --- |
| Customer Service Reps | 341,000 | 310,000 | 291,000 | 256,000 | 93,500 | ▼ –24.9% |
| Customer Support | 177,000 | 147,000 | 130,000 | 126,000 | 58,000 | ▼ –28.8% |
| Copywriter | 35,900 | 31,600 | 29,900 | 25,800 | 10,400 | ▼ –28.1% |
| Data Entry Clerks | 49,200 | 44,000 | 39,400 | 32,400 | 5,000 | ▼ –34.1% |
| Telemarketers | 9,290 | 8,990 | 7,720 | 6,570 | 2,610 | ▼ –29.3% |
| Basic Bookkeeping | 5,560 | 5,060 | 5,010 | 4,960 | 2,280 | ▼ –10.8% |

* Q1 2026 covers a 3-month period. Compare proportionally to the 6-month figures above.
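The decline column and the footnote’s proportional comparison come down to simple arithmetic. The sketch below is illustrative only: it uses the figures from the table, and doubling the Q1 2026 volume to get a six-month equivalent is an assumption about what “compare proportionally” means, not a documented JobsPikr method. The function names are hypothetical.

```python
# Posting volumes from the table above: (H1 2024, H2 2025, Q1 2026).
POSTINGS = {
    "Customer Service Reps": (341_000, 256_000, 93_500),
    "Customer Support": (177_000, 126_000, 58_000),
    "Copywriter": (35_900, 25_800, 10_400),
    "Data Entry Clerks": (49_200, 32_400, 5_000),
    "Telemarketers": (9_290, 6_570, 2_610),
    "Basic Bookkeeping": (5_560, 4_960, 2_280),
}


def pct_change(start: float, end: float) -> float:
    """Percentage change from start to end (negative means decline)."""
    return (end - start) / start * 100


def half_year_equivalent(quarter_volume: float) -> float:
    """Assumption: scale a 3-month volume to 6 months by doubling it."""
    return quarter_volume * 2


def summarise(postings: dict) -> dict:
    """Decline from H1 2024 to H2 2025, plus Q1 2026 scaled to six months."""
    summary = {}
    for role, (h1_2024, h2_2025, q1_2026) in postings.items():
        summary[role] = {
            "decline_pct": round(pct_change(h1_2024, h2_2025), 1),
            "q1_2026_half_year_equiv": half_year_equivalent(q1_2026),
        }
    return summary


if __name__ == "__main__":
    for role, row in summarise(POSTINGS).items():
        print(f"{role}: {row['decline_pct']}% | "
              f"Q1 2026 x2 = {row['q1_2026_half_year_equiv']:,.0f}")
```

Under the doubling assumption, a Q1 2026 figure should be read against the preceding six-month column only after scaling, which is the comparison the footnote asks for.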

The declines are real, but they are linear, consistent, and predate every major AI layoff announcement in the dataset. Customer service representative postings had already fallen 24.9% between H1 2024 and H2 2025 before a single press release cited AI as the reason. Data entry clerk postings had been falling in a straight line for six consecutive periods.

This is not an AI-driven cliff edge. It is a multi-year structural shift being given a single, convenient label in 2025 and 2026. Companies aren’t wrong that these roles are declining; they’re wrong about when it started, and wrong to imply the decision was triggered by a technology breakthrough rather than a trend already well underway.

The Sequencing Is the Story

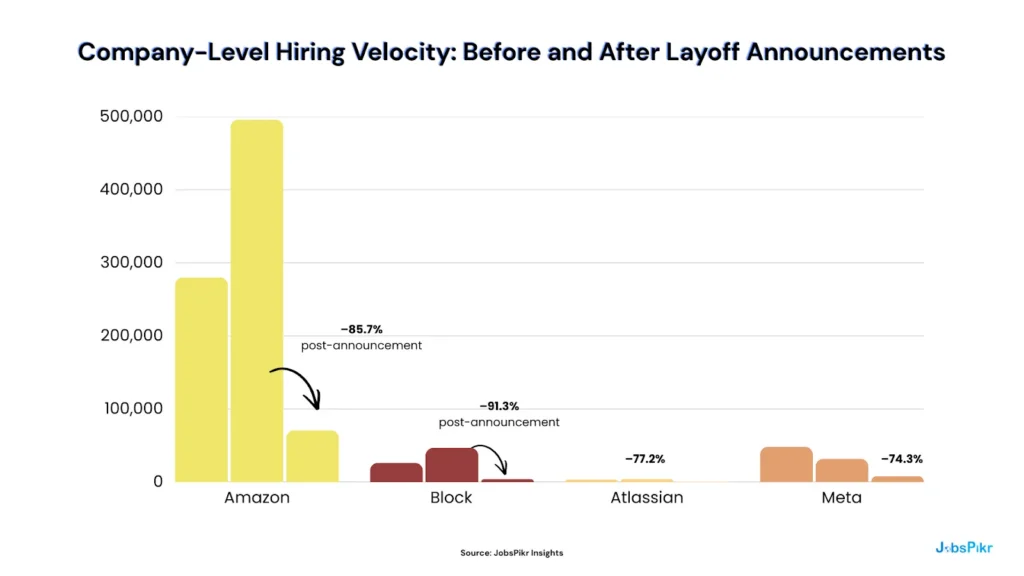

If AI productivity were genuinely driving headcount reductions, you’d expect to see job posting volumes fall gradually as AI tools replace functions in a smooth, capability-led contraction. What the company-level data actually shows is something different.

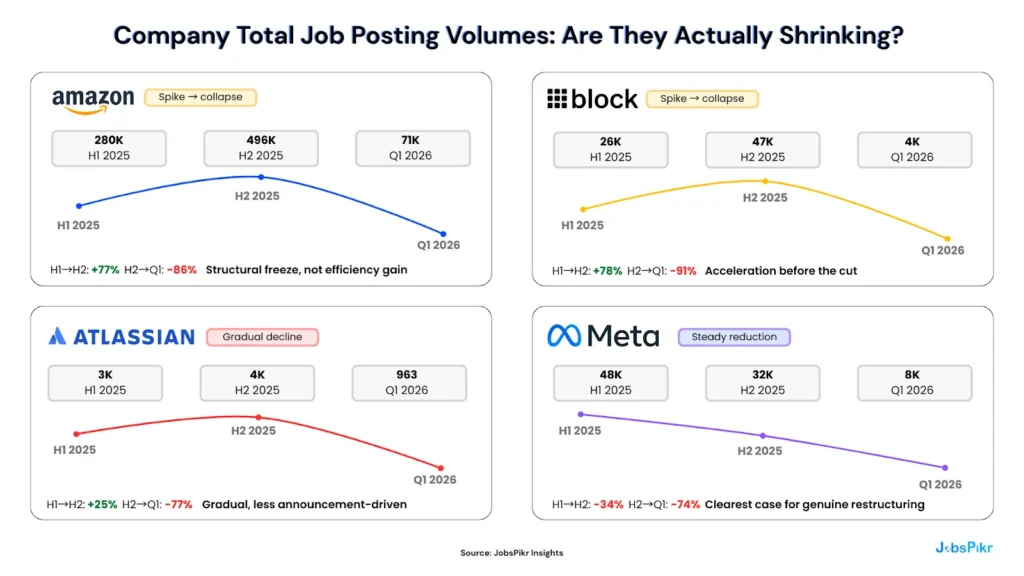

Amazon’s job postings surged to 496,000 in H2 2025, the highest volume in the dataset, before collapsing to 70,700 in Q1 2026 following its layoff announcement. Block followed an almost identical pattern: a hiring acceleration in H2 2025, then a 91.3% posting collapse in Q1 2026.

This is not the signature of AI gradually absorbing human functions. This is the signature of a hiring freeze following a strategic restructuring decision, one where AI was cited in the press release, but the job posting data doesn’t support the mechanism.
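Because H2 2025 is a six-month window and Q1 2026 a three-month one, the Amazon collapse can be read two ways. The sketch below compares the raw volumes and a monthly run rate, using only the two Amazon figures quoted above. The run-rate normalisation is an assumption added for illustration, not a method stated in this report.

```python
def monthly_run_rate(volume: float, months: int) -> float:
    """Average postings per month over a period of the given length."""
    return volume / months


def fall_pct(before: float, after: float) -> float:
    """Percentage fall from before to after."""
    return (before - after) / before * 100


AMAZON_H2_2025 = 496_000  # six-month posting volume, from the text
AMAZON_Q1_2026 = 70_700   # three-month posting volume, from the text

# Raw comparison of a six-month total against a three-month total.
raw_fall = fall_pct(AMAZON_H2_2025, AMAZON_Q1_2026)

# Like-for-like comparison on postings per month (assumed normalisation).
run_rate_fall = fall_pct(
    monthly_run_rate(AMAZON_H2_2025, 6),
    monthly_run_rate(AMAZON_Q1_2026, 3),
)

print(f"raw fall: {raw_fall:.1f}%")
print(f"run-rate fall: {run_rate_fall:.1f}%")
```

On these figures the raw fall is about 85.7% and the run-rate fall about 71.5%. Either way the drop is abrupt rather than gradual, which is the point the sequencing argument rests on.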

Meta is the exception worth noting: its postings declined consistently from H1 2025 onward without a pre-announcement spike, suggesting a more deliberate, ongoing reduction rather than a sudden cost-driven cut. The distinction matters when building a workforce intelligence case.

Where the Jobs Are Actually Going

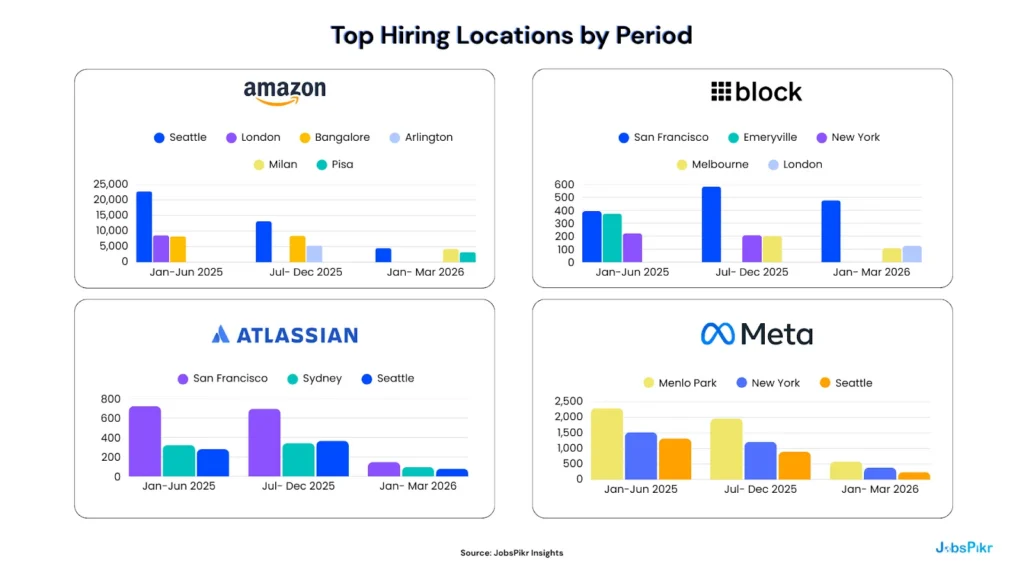

The geographic data adds a further dimension to the AI layoffs of 2026 that the headlines rarely capture.

Amazon’s Seattle postings dropped from 22,700 in H1 2025 to 4,540 in Q1 2026, a fall of 80%. In the same quarter, Milan and Pisa emerged as Amazon’s second and third largest hiring cities. Neither features in the AI efficiency narrative.

The same pattern appears at Block, where Melbourne sustained hiring as San Francisco contracted. Atlassian is different: Sydney and Seattle declined in parallel, suggesting market-wide contraction rather than AI-specific role elimination.

This is the geographic arbitrage signal that the press releases don’t mention: U.S. roles are being cut, international postings are holding relatively firm, and the cost differential, not the AI capability, is doing much of the work.

What the Data Establishes: Before We Even Get to ROI

The AI layoff wave is real in scale. It is not as described. The roles being eliminated were structurally declining before AI was cited. The company posting volumes suggest strategic hiring freezes, not capability-led efficiency gains. And the geographic shifts suggest cost optimisation under a more flattering headline.

None of this means AI isn’t reshaping the workforce; it clearly is. But Section 2 asks the harder question: if AI productivity is generating the gains companies claim, where is the evidence in the data?

The ROI Reality: Productivity Gains Are Not Yet Structural

Here is the assumption at the centre of every AI layoff announcement made in 2025 and 2026: that AI has delivered or will imminently deliver productivity gains significant enough to permanently reduce the number of people a business needs to operate.

It is a reasonable hypothesis. It is not, in most cases, a proven one.

A 2025 MIT study found that 95% of enterprise AI pilot programmes failed to deliver measurable financial returns. McKinsey’s State of AI 2025 report found that only 6% of organisations qualify as genuine AI high performers, defined as those achieving 5% or more EBIT impact from AI deployment. Deloitte’s AI ROI Paradox report puts the typical payback period for AI investment at two to four years, compared to the seven to twelve months within which technology investments have historically been expected to pay back.

And in a Harvard Business Review survey of 1,006 global executives conducted in December 2025, the finding was unambiguous: the vast majority of AI-attributed layoffs are anticipatory. They are based on what companies expect AI to deliver, not what it has already delivered.

Permanent workforce decisions are being made on the basis of a promise.

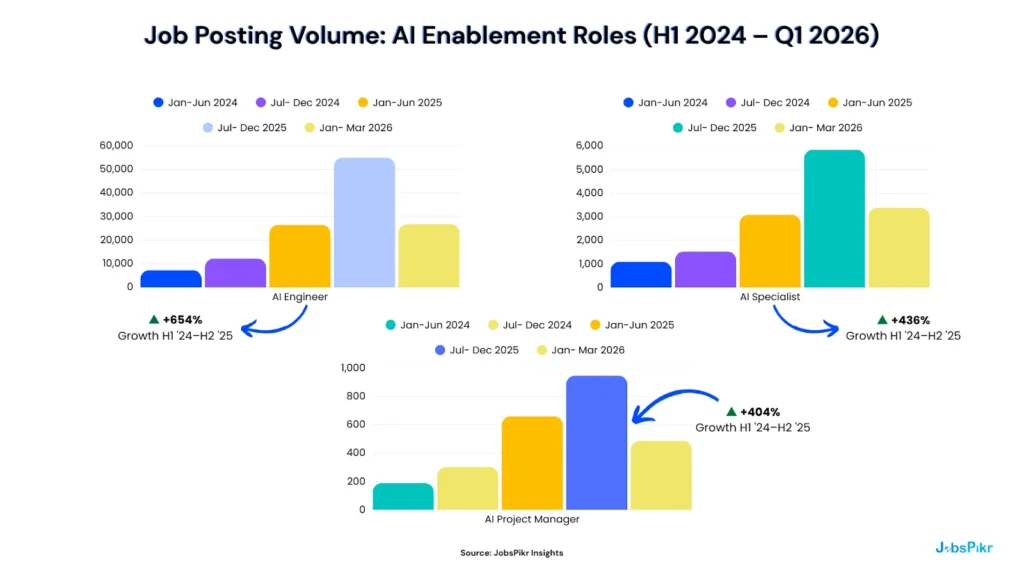

The Roles That Are Surging Tell You What AI Actually Needs

If AI were genuinely replacing workers at scale, absorbing functions, processing workflows, and making human labour structurally redundant, the demand for human AI oversight would be declining too. The opposite is happening.

AI Engineer postings grew 654% between H1 2024 and H2 2025. AI Specialist demand grew 436% over the same period. AI Project Manager postings grew 404%.

These are not peripheral roles. AI Engineers build and maintain the systems. AI Specialists configure and deploy them across business functions. AI Project Managers coordinate the humans required to make AI implementation work at an organisational level. All three roles exist because AI, at its current level of development, requires significant and ongoing human direction to produce reliable, usable output.

You do not hire this aggressively to manage a technology that runs itself. You hire this aggressively because the technology needs experienced people to run it, and experienced people are exactly what the AI layoff announcements are cutting.

The Restructuring vs. Reduction Question

A second test of the AI productivity thesis is whether companies citing AI gains are actually reducing total headcount or simply restructuring role mix while overall hiring volumes remain flat or grow.

Meta is the most credible AI efficiency case in this dataset: posting volumes declined steadily and consistently across the full period, without the pre-announcement hiring spike that characterises Amazon and Block. That pattern is consistent with a deliberate, data-informed workforce reduction, which this report will later define as a Type A decision.

Amazon and Block show the opposite signature. Both accelerated hiring in H2 2025, before posting volumes collapsed in Q1 2026. If AI productivity were the driver, the contraction would have been gradual and preceded by evidence of output maintenance at reduced headcount. What the data shows instead is a strategic pause: a freeze following a decision made at the boardroom level, with AI cited as the mechanism in the accompanying press release.

The Workslop Problem

There is a further dimension to the ROI gap that the quarterly reports and analyst surveys don’t fully capture: the quality problem inside AI adoption itself.

Deloitte’s 2026 State of AI in Enterprise survey found that 74% of organisations hope to grow revenue through AI, but only 20% are already doing so. The gap between aspiration and realised return is not simply a matter of time. It is, in part, a product of what workforce researchers have begun calling “workslop,” the proliferation of fast but low-quality output generated by AI tools deployed under pressure to show adoption, without the human expertise required to validate or improve the result.

When experienced workers are cut before the AI capability that replaces them has been properly calibrated, the output quality problem compounds. The institutional knowledge needed to identify when an AI system is producing plausible but incorrect results, the human in the loop, is exactly the knowledge being eliminated.

The 6% of organisations McKinsey identifies as genuine AI high performers are not, in the main, the companies making the loudest AI layoff announcements. They are organisations that have invested in AI infrastructure alongside human capability, not instead of it.

What the ROI Data Establishes

The AI productivity story is not false. It is premature. The gains are real in pockets, concentrated in a small minority of organisations, and building toward a payback window that is measured in years rather than quarters. Making permanent, irreversible headcount decisions on the basis of a technology still in that window is not an efficiency strategy. It is a wager, and the job posting data shows that the odds are not as favourable as the press releases suggest.

Section 3 examines the human side of that wager: the workforce readiness gap that makes AI productivity gains even harder to realise than the ROI data already implies.

The Workforce Readiness Gap: You Can’t Automate What You Haven’t Trained

One of the quieter findings buried inside the AI layoffs 2026 data is this: the companies making the loudest cuts are often the least prepared to absorb the productivity gains they’re banking on.

Forrester’s 2026 workforce research found that in 2025 only 16% of workers had high AI readiness, defined as the skills, fluency, and operational context to work effectively alongside AI tools. That figure is projected to reach just 25% by the end of 2026. Only 23% of AI decision-makers reported that their organisation had offered any prompt engineering training to employees in the past year.

This is the readiness paradox at the centre of the current wave of AI layoffs 2026: companies are eliminating the workers who hold institutional knowledge while simultaneously lacking the trained workforce needed to direct, validate, and quality-control the AI systems meant to replace them. The result is not an efficiency gain. It is an expertise vacuum dressed as one.

The Skills That Are Actually Surging

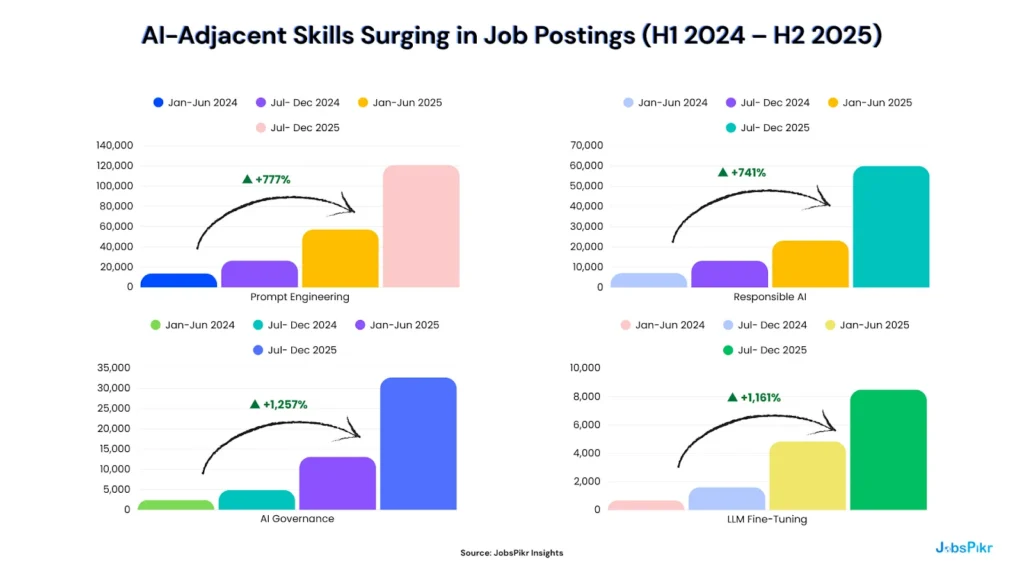

The job posting data makes the scale of the readiness gap visible in a way that surveys cannot. When you track which AI-adjacent skills are being demanded in job postings, not which skills companies say they value, but which skills they are actively hiring for, the growth curves are striking.

Prompt Engineering demand reached 121,000 job postings in H2 2025 alone, growing 777% across the 18 months. AI Governance grew 1,257%. LLM Fine-Tuning grew 1,161% from a low base to a meaningful scale.
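Growth percentages this large are easier to read as multiples. The sketch below converts the three figures above into multiples and backs out the starting volume implied by the Prompt Engineering numbers. It assumes the 777% growth is measured from the start of the period to the 121,000 postings in H2 2025; the report does not publish the base figure, so the implied base is an inference, not reported data.

```python
def growth_multiple(growth_pct: float) -> float:
    """Convert percentage growth to an end/start multiple (100% -> 2.0x)."""
    return 1 + growth_pct / 100


def implied_base(end_volume: float, growth_pct: float) -> float:
    """Starting volume implied by an end volume and a growth percentage."""
    return end_volume / growth_multiple(growth_pct)


SKILL_GROWTH_PCT = {
    "Prompt Engineering": 777,
    "AI Governance": 1_257,
    "LLM Fine-Tuning": 1_161,
}

if __name__ == "__main__":
    for skill, pct in SKILL_GROWTH_PCT.items():
        print(f"{skill}: {growth_multiple(pct):.2f}x")

    # Assumption: 121,000 is the end-of-period volume for the 777% figure.
    base = implied_base(121_000, SKILL_GROWTH_PCT["Prompt Engineering"])
    print(f"Implied Prompt Engineering base: {base:,.0f}")
```

Under that assumption, 777% growth is an 8.77x multiple, which would put the starting base at roughly 13,800 postings.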

These are not niche technical specialisms. Prompt Engineering is the skill that determines whether an AI system produces usable output or plausible-sounding noise. AI Governance is the organisational competency that ensures AI systems operate within legal, ethical, and operational boundaries. LLM Fine-Tuning is how companies customise foundation models for their specific business context, the difference between a generic AI tool and one that actually reflects how a company operates.

Every one of these skills requires experienced human practitioners. Every one of them is in short supply. And every major AI layoff announcement of 2025 and 2026 has been made by companies simultaneously posting aggressively for exactly these capabilities, often in the same quarter they let experienced generalists go.

Roles Are Being Augmented, Not Replaced

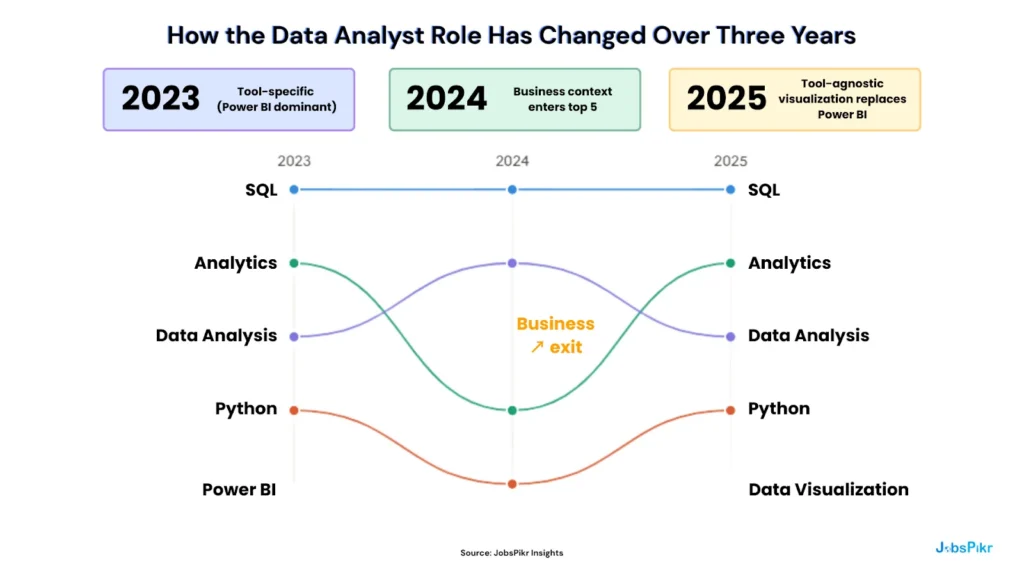

The second dimension of the readiness gap is a narrative one: the assumption that AI-era workforce restructuring means roles disappear, rather than roles evolve. The job posting data challenges that assumption directly.

The data analyst role has not been replaced by AI over this period. SQL and Python have remained in the top five required skills for three consecutive years. The meaningful shift is subtler and more instructive: tool-specific requirements like Power BI dropped out of the top five by 2025, replaced by the broader and more transferable “Data Visualization,” signalling that analysts are now expected to operate across AI-assisted tools rather than master a single platform. “Business” entered the top five in 2024 and held, reflecting an expectation that analysts drive decisions, not just produce reports.

This is augmentation, not replacement. The role exists. The work exists. The skill profile has shifted. Companies that understand this distinction are reskilling. Companies that don’t are cutting and will be rehiring in twelve months.

Who Is Actually Investing in Readiness

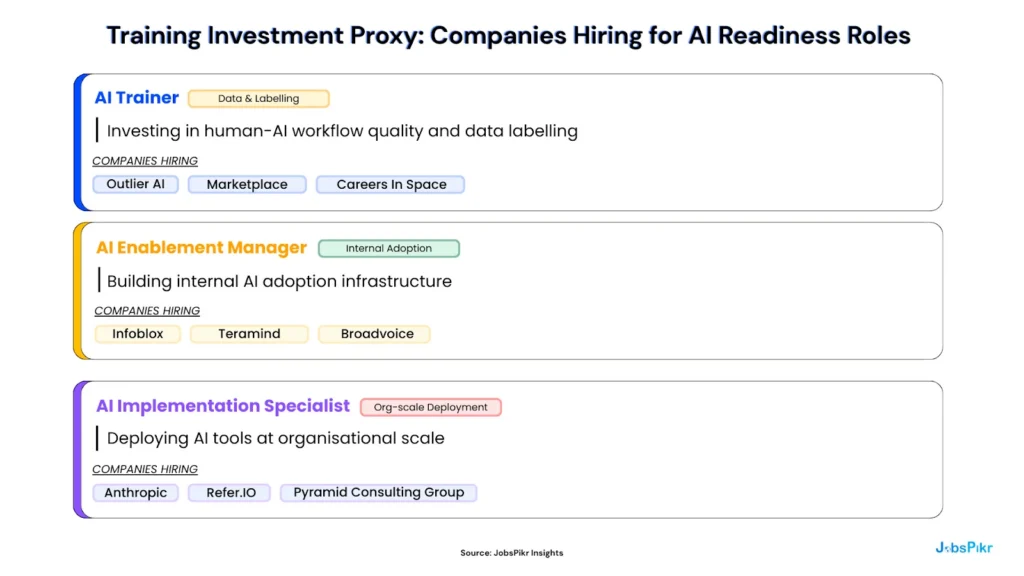

The most useful competitive intelligence signal in the readiness data is not a survey finding but the job posting itself. Companies actively building AI workforce capability are identifiable in real time, before their quarterly results or annual reports reveal whether the investment paid off.

Companies hiring AI Enablement Managers are building the internal infrastructure required to convert AI tools into measurable business outcomes. Companies hiring AI Implementation Specialists are doing the operational work of deploying AI across functions, the layer that sits between a software licence and a genuine productivity gain. Companies hiring AI Trainers are investing in the data quality and workflow design that determines whether an AI system improves over time.

Companies that are cutting without these hires are making the wager without laying the foundation. That distinction between organisations building AI readiness and organisations performing it is visible in job posting data before it shows up anywhere else.

The Two Groups Getting Hit Hardest

Two cohorts are bearing a disproportionate share of the readiness gap’s consequences.

The first is Gen Z. The entry-level roles that have historically served as the onramp to professional careers (junior analyst, support agent, copywriter, data entry specialist) are precisely the roles declining most sharply in the posting data. These positions are being eliminated before the AI-augmented versions of those roles have been properly designed or hired for. The cohort most digitally fluent with AI tools is being locked out of the labour market at the moment of entry.

The second is the experienced mid-career workforce. Forrester estimates that approximately 28% of the broader workforce is expected to disengage in 2026, not because they have been cut, but because they have watched colleagues be cut for an AI capability that has not yet materialised and drawn the rational conclusion that loyalty and investment are no longer reciprocal. The coasters’ problem is not a motivation issue. It is a trust issue, and it compounds the readiness gap further by eroding the institutional knowledge that makes AI deployment viable in the first place.

What the Readiness Data Establishes

The workforce readiness gap does not invalidate the AI transformation thesis. It contextualises it. AI will reshape the workforce; the skills data makes that structural shift unmistakable. But the timeline, the sequencing, and the human investment required are all being underestimated by the companies making the most aggressive AI layoff decisions.

Section 4 examines what happens when that underestimation becomes visible, typically twelve months after the announcement, and usually in a job posting in a lower-cost city under a slightly different job title.

The Quiet Rehire: What Happens 12 Months After AI Layoffs 2026

Every AI layoff announcement comes with a version of the same statement: the company is becoming leaner, faster, and more AI-capable. The restructuring is permanent. The productivity gains will follow.

Forrester Research’s 2026 workforce predictions suggest that approximately 50% of those statements will be quietly walked back within twelve months, not in a press release, but in a job posting. Half of all AI-attributed layoffs, Forrester projects, will result in rehiring for the same or equivalent functions. Often at lower salaries. Often in a different geography. And almost always under a different job title.

This is not a forecast about a future risk. The job posting data from AI layoffs 2026 suggests it is already underway.

The Klarna Lesson

Klarna became the most cited case study in the AI efficiency argument in 2024. The company announced that its AI system was doing the work of 700 customer service agents, processing errands in under two minutes compared to eleven minutes previously. The story was picked up globally as proof that AI-driven workforce reduction was not only viable but already delivering at scale.

By 2025, Klarna had begun rehiring customer service staff.

The specifics of the rehire were not announced with the same fanfare as the original efficiency claim. They rarely are. But the pattern Klarna established (an aggressive AI efficiency claim, followed by quiet capability gap recognition, followed by rehire) has since been documented across enough organisations that Forrester built it into its 2026 workforce predictions as a structural expectation rather than an outlier.

The reason is not that AI failed. It is that AI at its current level of development requires more human oversight, more contextual judgment, and more institutional knowledge than the initial productivity claims accounted for. The human layer was cut before its role in the system was properly understood.

What the Geographic Data Is Already Showing

The rehire signal doesn’t always look like a rehire. It often looks like a new hire in a different country, under a slightly different job title, at a meaningfully lower salary. The geographic posting data from Section 1 makes this pattern visible before it reaches the press cycle.

Recall the Amazon geographic breakdown:

Amazon’s Seattle postings dropped from 22,700 in H1 2025 to 4,540 in Q1 2026. In the same quarter, Milan and Pisa emerged as Amazon’s second and third largest hiring cities, locations that carry significantly lower compensation costs than Seattle and that do not feature in the AI efficiency narrative accompanying the U.S. cuts.

This is the offshore arbitrage pattern that sits underneath a meaningful share of the AI layoffs 2026 wave. It is not AI replacing workers. It is workers in high-cost markets being replaced by workers in lower-cost markets, with AI cited as the mechanism because it carries a more favourable narrative and a more favourable market signal than straightforward cost reduction.

The same dynamic appears at Block, where Melbourne sustained hiring as San Francisco contracted. It does not appear at Atlassian, where the decline was proportionally consistent across all major cities. That suggests genuine contraction rather than geographic arbitrage, a meaningfully different signal worth distinguishing.

The True Cost of Getting It Wrong

The financial case for AI-driven layoffs typically accounts for the salary savings. It rarely accounts for what the cut actually costs.

The direct costs are measurable: rehiring fees typically run at 15–30% of a role’s annual salary. Onboarding and productivity ramp for a replacement hire averages three to six months. For senior or specialist roles, the full productivity recovery period can extend to twelve months or beyond.
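The direct-cost ranges above can be turned into a rough per-role estimate. The sketch below applies the 15–30% rehiring fee and the three-to-six-month ramp period from the text to a hypothetical salary. The $60,000 salary is purely illustrative, and treating salary paid during the ramp as exposure is an assumption that overstates the true cost, since a ramping hire still produces some output.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CostRange:
    low: float
    high: float


def rehiring_fee_range(annual_salary: float) -> CostRange:
    """Rehiring fees at 15-30% of annual salary (range from the text)."""
    return CostRange(annual_salary * 0.15, annual_salary * 0.30)


def ramp_salary_exposure(annual_salary: float) -> CostRange:
    """Salary paid over a 3-6 month ramp (range from the text).

    Assumption: this is an upper bound on ramp cost, not a measured loss.
    """
    return CostRange(annual_salary * 3 / 12, annual_salary * 6 / 12)


if __name__ == "__main__":
    salary = 60_000  # hypothetical salary, for illustration only
    fees = rehiring_fee_range(salary)
    ramp = ramp_salary_exposure(salary)
    print(f"Rehiring fees: {fees.low:,.0f} to {fees.high:,.0f}")
    print(f"Ramp salary exposure: {ramp.low:,.0f} to {ramp.high:,.0f}")
```

Setting these ranges against the salary saved during the gap between the cut and the rehire is the comparison the salary-only business case usually leaves out.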

The indirect costs are harder to quantify but more consequential. Institutional knowledge, the accumulated understanding of how a company’s systems, customers, and processes actually work, does not transfer to an AI model through a software deployment. It transfers through years of human experience, and it leaves when the person does. Organisations that cut experienced workers to fund AI investments often find that the AI systems they deploy are being directed by people who lack the context to know when the output is wrong.

Fifty-five percent of employers who made AI-attributed cuts in 2025 reportedly regret the decision, according to Forrester. That figure will be tested further as the 2026 rehire cycle develops. The companies that avoided it were not the ones that moved slowest on AI. They were the ones who looked at the data before they moved at all.

What the Quiet Rehire Establishes

The rehire cycle is not evidence that AI transformation is failing. It is evidence that most organisations are sequencing it incorrectly: cutting before the capability is proven, before the workforce is ready, and before the institutional knowledge required to make the technology work has been properly transferred or preserved.

Section 5 gives talent intelligence teams and workforce strategists a practical framework for distinguishing the decisions that lead to this outcome from the ones that don’t, along with the job posting signals that make that distinction visible before the announcement, not after the regret.

The Two Types of AI Workforce Decision and How to Tell Them Apart

Not every AI layoff is the same decision. The data makes that clear. Meta’s posting volumes declined consistently and gradually across the full period tracked in this report. Amazon spiked before collapsing. Atlassian contracted across all geographies in parallel. Block accelerated into a freeze. Each pattern carries a different implication for the companies making the cut, for the talent intelligence teams advising on it, and for the competitors watching it happen.

The most useful framework for navigating the AI layoffs 2026 landscape is not a judgment about whether AI transformation is real. It is a distinction between two fundamentally different types of workforce decisions that are currently being described with the same language.

Type A: The Evidence-Led Decision

A Type A AI workforce decision is one where the data supports the cut before it is made. The role in question has been in structural decline as measured by job posting volumes for multiple consecutive periods. The skill functions it performed are being absorbed by a combination of AI tools and newly created hybrid roles that show up in the posting data alongside the decline. Total output is maintained or growing at reduced headcount, and the AI systems doing more of the work are supported by a growing investment in AI enablement roles, the engineers, specialists, and project managers who keep the technology functioning at an operational level.

Type A decisions are sustainable. They are also rare. McKinsey’s finding that only 6% of organisations qualify as genuine AI high performers, those achieving 5% or more EBIT impact from AI deployment, is a reasonable proxy for the proportion of AI-attributed layoffs that are genuinely Type A.

The role decline data from Section 1 contains elements of Type A evidence: data entry clerk postings fell 34.1% across 18 consecutive months in a straight, unbroken line. That is a structural signal. Customer service representative postings fell 24.9% across the same period with the same consistency. These roles are genuinely contracting, and the decline predates the AI narrative by enough time to suggest that technology-driven displacement, whether AI-specific or broader automation, is a real factor.

The distinction is not whether the role is declining. It is whether the organisation cutting it has the AI infrastructure, the trained workforce, and the new role creation pattern to demonstrate that output will be maintained without the headcount. Most of the companies making AI layoff announcements in 2025 and 2026 cannot show that evidence yet because the AI systems they are banking on have not yet delivered it.

Type B: The Speculative Decision

A Type B AI workforce decision is one made in anticipation of productivity gains that have not yet materialised. The cut frees up capital for AI investment, for margin improvement, and for a market signal that the company is AI-forward. The AI capability that will supposedly absorb the eliminated function is still in pilot phase, still being calibrated, or still being hired for.

Type B decisions are not necessarily dishonest. They may reflect a genuine belief that AI productivity gains are imminent and that acting ahead of them is strategically rational. But they carry a distinct risk profile: the institutional knowledge leaves with the people, the AI systems are deployed without the human expertise needed to direct them effectively, and the output quality gap that follows creates the rehire pressure that Forrester has documented across the 2025–2026 wave.

The job posting signatures of a Type B decision are identifiable before the announcement becomes a press release. Amazon’s surge to 496,000 postings in H2 2025 before its Q1 2026 collapse is a Type B pattern: acceleration followed by a strategic freeze, rather than a gradual capability-led contraction. Block’s 91.3% posting collapse in a single quarter tells the same story. The geographic shift to lower-cost cities in the same period as the headline cuts adds the cost-arbitrage dimension that completes the picture.

None of this requires insider knowledge. It requires the right data, read at the right cadence.

Type A vs. Type B: How to Tell AI Workforce Decisions Apart

| Signal | Type A: Evidence-Led | Type B: Speculative |
| --- | --- | --- |
| What drives it | Proven productivity gains at reduced headcount | Anticipated gains that haven’t yet materialised |
| Role posting trend before the cut | Sustained linear decline across 6+ consecutive periods | Flat or growing volumes that collapse at or after announcement |
| Company example | Meta: steady, consistent posting decline from H1 2025 onward | Amazon: surged to 496,000 postings in H2 2025, collapsed to 70,700 in Q1 2026 |
| New role creation | Hybrid AI roles created in parallel with the decline of legacy roles | Cuts made without corresponding AI enablement hiring |
| Geographic pattern | Contraction proportional across all major cities | U.S. roles cut while lower-cost international postings hold or grow |
| Institutional knowledge | Transferred or preserved before headcount reduction | Leaves with the people; AI systems deployed without human direction |
| 12-month outcome | Sustainable efficiency gain | Quiet rehire, often offshore, under a different job title |
| Proportion of 2025–26 cuts | ~6% (McKinsey AI high performer benchmark) | ~94% of AI-attributed layoffs |

The Three Signals That Separate Them

For talent intelligence teams advising on workforce decisions, or tracking the competitors making them, three job posting signals distinguish a Type A decision from a Type B one.

- Signal 1: Role posting velocity over 12–24 months.

A Type A decision is preceded by a sustained, linear decline in posting volume for the role in question, typically 6 or more consecutive periods of contraction, independent of broader market conditions. A Type B decision is preceded by flat or growing posting volumes that drop sharply at or immediately after the announcement. The Section 1 data gives clear examples of both: data entry clerk postings (Type A signature) vs. the company-level velocity patterns for Amazon and Block (Type B signature).

- Signal 2: New role creation ratio.

Type A transformations create new hybrid roles in parallel with the decline of legacy ones. AI Engineer, AI Specialist, and AI Project Manager postings grew 404–654% between H1 2024 and H2 2025, the period during which the same companies were beginning to reduce headcount in adjacent functions. If a company is cutting but not creating the hybrid roles that would absorb the function, that is a Type B signal, regardless of what the press release says.

- Signal 3: Competitor hiring posture.

If a company cuts its customer service team, citing AI efficiency gains, but four of its five direct competitors are maintaining or growing customer service posting volumes across the same period, that divergence is significant. Either the company has achieved a genuine productivity advantage that its competitors haven’t, which the ROI data suggests is unlikely for the majority of organisations, or it has made a speculative cut that its competitors judged too early to make. Tracking competitor posting velocity at the role level is one of the highest-value applications of real-time job posting intelligence, precisely because it converts a private organisational decision into a visible, trackable signal.
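As a rough illustration of Signal 1, the velocity check can be sketched in a few lines of Python. This is a minimal sketch, not the method used to produce the report: the function names, the six-period default, and the 50% sharp-drop threshold are all assumptions chosen for illustration, and the `steady_decline` series is hypothetical. Only the two Amazon figures are taken from the report.

```python
from typing import Sequence


def longest_decline_run(volumes: Sequence[float]) -> int:
    """Longest streak of consecutive period-over-period declines."""
    longest = current = 0
    for prev, curr in zip(volumes, volumes[1:]):
        current = current + 1 if curr < prev else 0
        longest = max(longest, current)
    return longest


def last_period_change(volumes: Sequence[float]) -> float:
    """Fractional change between the final two periods."""
    return (volumes[-1] - volumes[-2]) / volumes[-2]


def classify_velocity(
    volumes: Sequence[float],
    min_decline_run: int = 6,   # assumption: "6 or more consecutive periods"
    sharp_drop: float = -0.5,   # assumption: what counts as a sharp drop
) -> str:
    """Label a posting-volume series by its Signal 1 signature."""
    if longest_decline_run(volumes) >= min_decline_run:
        return "Type A signature"
    if last_period_change(volumes) <= sharp_drop:
        return "Type B signature"
    return "inconclusive"


# Hypothetical series: seven consecutive periods of contraction.
steady_decline = [1000, 940, 890, 830, 780, 720, 670, 610]

# Amazon posting figures as quoted in the report (H2 2025, then Q1 2026).
amazon = [496_000, 70_700]

print(classify_velocity(steady_decline))
print(classify_velocity(amazon))
print(f"{last_period_change(amazon):.1%}")
```

The thresholds would need tuning against real posting histories, and a production version would also control for broader market conditions, which this sketch ignores.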

Why the Distinction Matters Now

The AI layoffs 2026 wave has created a competitive intelligence opportunity that most talent teams are not yet equipped to act on. The companies making Type B cuts are creating temporary talent surpluses in specific roles and geographies: experienced customer service professionals, copywriters, data entry specialists, and analysts. These people represent a real acquisition opportunity for competitors with the foresight to move during the disruption rather than after it.

The companies making Type A cuts are signalling something more durable: that specific functions are genuinely contracting in their sector, and that the hybrid roles absorbing them are where skill investment and hiring energy should be directed. Tracking which type of decision your competitors are making and distinguishing between the two in real time is the difference between a reactive talent strategy and an intelligence-led one.

Section 6 shows what that looks like in practice.

What Talent Intelligence Teams Do Differently

The difference between a Type A workforce decision and a Type B one is not access to better instincts. It is access to better data, read at a faster cadence than the organisations making the wrong call.

Talent intelligence teams using real-time job posting data are making workforce decisions six to twelve months ahead of those relying on lagging indicators, such as unemployment rates, quarterly salary surveys, and annual industry reports. By the time those sources confirm what the market is doing, the decision window has closed, the talent has moved, and the competitor has already acted.

The following four use cases illustrate what that intelligence advantage looks like in practice, built on the same JobsPikr data signals documented throughout this report.

Use Case 1: Real-Time Role Demand Dashboard: The Content Writer Decision

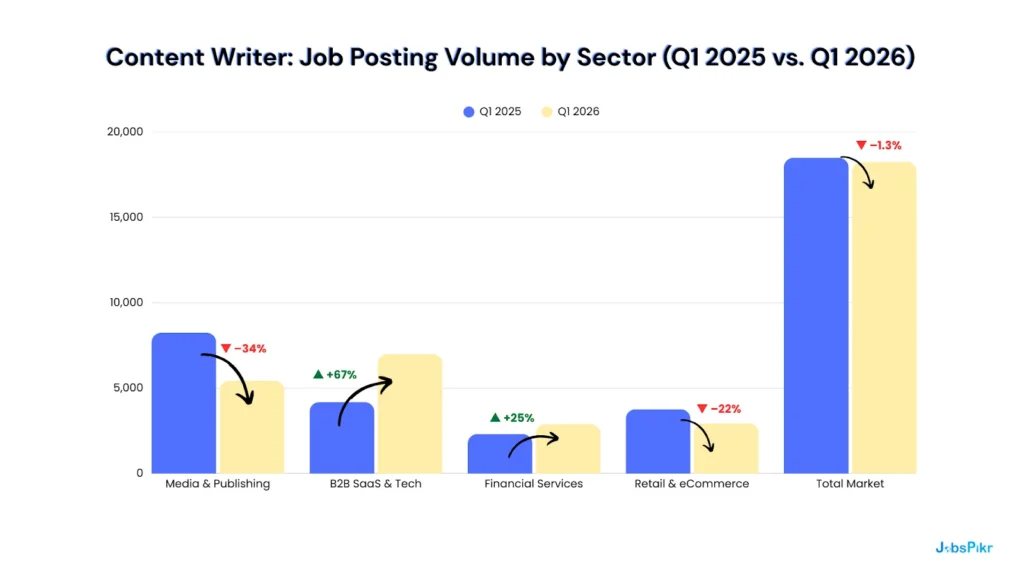

A talent intelligence lead at a mid-size B2B SaaS company is asked to support a workforce review. Leadership is considering eliminating the content writer function, citing AI writing tools as a productivity replacement. The request lands on a Monday. By Tuesday morning, the intelligence lead has pulled twelve months of job posting data for the role across sectors.

The total market figure, a near-flat 1.3% decline, is the number leadership has seen in a trade report. It looks like confirmation. The sector split is what changes the meeting.

Content writer demand in B2B SaaS grew 67% year on year. The role is not dying in the sector in which the company operates. It is accelerating. Competitors are not cutting content writers; they are hiring them, increasingly with AI tool proficiency built into the job description. The recommendation that comes out of the intelligence review is the opposite of the one that went in: the company should not be eliminating the function. It should be reskilling and competing harder for the people who can perform it well.

The decision changes because the data was sector-specific, not market-aggregate. That distinction is only visible if you are looking at the right level of granularity, at the right speed.
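The gap between the aggregate figure and the sector figure is simple arithmetic, and a short Python sketch makes it concrete. The posting counts below are hypothetical, chosen only so that the percentages match the ones quoted in this use case; the sector labels and the `yoy_change` helper are likewise illustrative assumptions.

```python
def yoy_change(prior: int, current: int) -> float:
    """Year-on-year change as a fraction of the prior-year count."""
    return (current - prior) / prior


# Hypothetical (prior year, current year) posting counts for one role.
# They are picked to reproduce the report's percentages, not real data.
postings_by_sector = {
    "B2B SaaS": (300, 501),
    "All other sectors": (9_700, 9_369),
}

total_prior = sum(prior for prior, _ in postings_by_sector.values())
total_current = sum(current for _, current in postings_by_sector.values())

print(f"Total market: {yoy_change(total_prior, total_current):+.1%}")
for sector, (prior, current) in postings_by_sector.items():
    print(f"{sector}: {yoy_change(prior, current):+.1%}")
```

With these inputs the total shows a 1.3% decline while the B2B SaaS line shows 67% growth: a small, fast-growing sector is invisible in the aggregate until the data is split.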

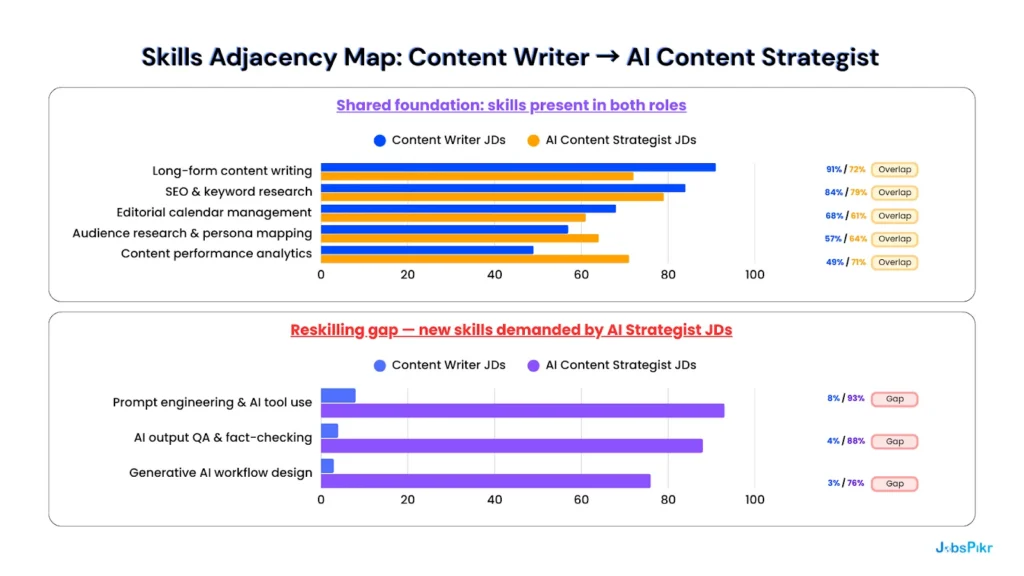

Use Case 2: Skills Adjacency Mapping: The Reskilling Case

The same intelligence lead is asked a follow-up question: if the company were to transition content writers toward more AI-integrated roles, what would the reskilling gap actually look like? The answer comes from comparing the skill requirements in current content writer job postings against those in AI Content Strategist postings, the role emerging as the successor function across the B2B SaaS sector.

The skill overlap score is 71%. A content writer already holds five of the eight core competencies required for the emerging role. The reskilling gap is three skills wide, all of them learnable, and all of them addressed by the training investment proxy signals in Section 3 (AI Trainers, AI Enablement Managers, AI Implementation Specialists).

The redundancy case requires replacing five competencies alongside hiring for three new ones. The reskilling case requires training for three. The numbers make the argument before the meeting starts, but only if someone ran the analysis.
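The five-of-eight comparison can be sketched with plain set operations. The skill names below are hypothetical placeholders, not skills extracted from JobsPikr postings. The report does not state how its 71% overlap score is calculated; unweighted coverage of five out of eight skills is 62.5%, so the published score presumably weights skills in some way (for example by posting frequency) that this sketch does not attempt.

```python
def skill_coverage(current_skills: set[str], target_skills: set[str]) -> float:
    """Share of the target role's skills already held (unweighted)."""
    return len(current_skills & target_skills) / len(target_skills)


def reskilling_gap(current_skills: set[str], target_skills: set[str]) -> set[str]:
    """Target-role skills that still need to be trained."""
    return target_skills - current_skills


# Hypothetical skill sets for illustration only.
ai_content_strategist = {
    "copywriting", "editing", "seo", "audience research", "brand voice",
    "prompt design", "ai output qa", "content analytics",
}
content_writer = {
    "copywriting", "editing", "seo", "audience research", "brand voice",
    "interviewing",
}

print(f"{skill_coverage(content_writer, ai_content_strategist):.1%}")
print(sorted(reskilling_gap(content_writer, ai_content_strategist)))
```

The gap set is the training plan in miniature: three named skills, rather than a generic claim that a role is obsolete.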

This is the reskilling business case, built on data rather than HR instinct. For the talent leaders navigating the AI layoffs 2026 environment, it is also a retention argument: experienced content writers who understand the brand, the audience, and the editorial standards are exactly the people who can QA AI output at the quality level the company needs. They are not redundant. They are under-trained.

Use Case 3: Salary Benchmarking: Pricing the Restructured Role

The intelligence lead’s third task is ensuring the newly restructured AI Content Strategist role is priced competitively before it goes to market. The company’s initial offer reflects internal compensation bands that haven’t been updated for the new role category. JobsPikr’s salary benchmarking pulls advertised compensation data across five direct competitors currently hiring for the equivalent role.

The company’s current offer sits 19% below the market median. At that gap, the role will lose candidates at the offer stage or lose new hires within six months to competitors who are already benchmarked correctly. The third failed search is avoidable. It requires knowing the market before the role goes live, not after the hiring manager asks why the pipeline keeps dropping off at the final stage.

JobsPikr surfaces this in the same workflow as the role demand and skills data. It is not a separate research project. It is the same intelligence layer, applied to a different decision point in the same process.
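The benchmarking step reduces to comparing an offer with the median of advertised competitor salaries. The sketch below uses Python's standard `statistics.median`; the five salary figures and the offer are hypothetical, picked so that the gap matches the 19% quoted above, and `gap_to_median` is an illustrative helper rather than a JobsPikr function.

```python
from statistics import median
from typing import Sequence


def gap_to_median(offer: float, market_salaries: Sequence[float]) -> float:
    """Offer's distance from the market median, as a fraction of the median."""
    market_median = median(market_salaries)
    return (offer - market_median) / market_median


# Hypothetical advertised salaries from five competitors for the same role.
competitor_salaries = [92_000, 96_000, 100_000, 104_000, 110_000]
current_offer = 81_000  # hypothetical internal band

gap = gap_to_median(current_offer, competitor_salaries)
print(f"Offer vs market median: {gap:+.0%}")
```

The median is used rather than the mean so that a single outlier posting does not move the benchmark, which matters when the sample is only five competitors.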

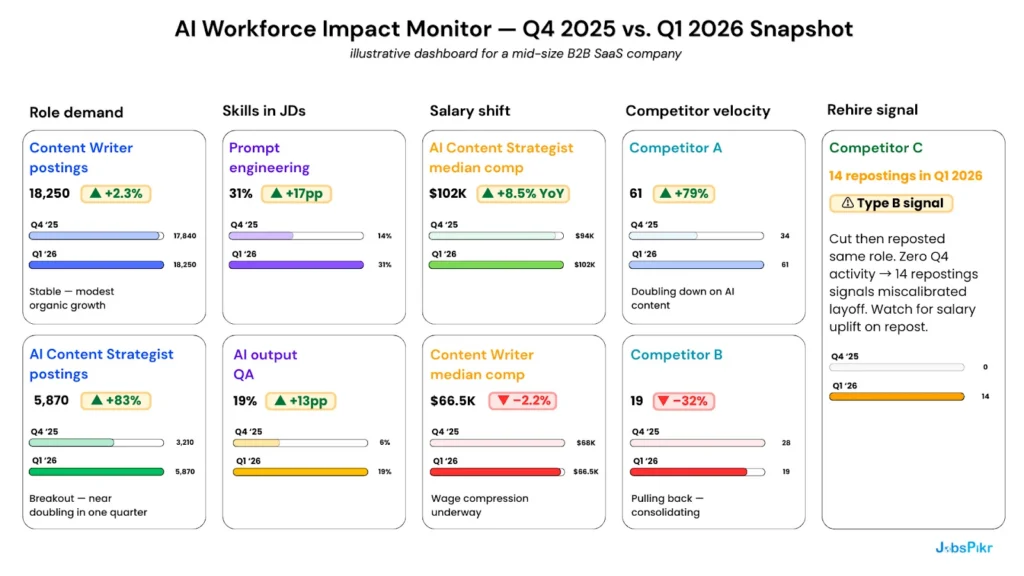

Use Case 4: The AI Workforce Impact Monitor: Quarterly Intelligence for the C-Suite

The three use cases above are decisions made at the role level. The AI Workforce Impact Monitor is what those decisions look like when they are aggregated into a standing intelligence view, a live dashboard updated quarterly, shared with the C-suite, and built entirely from JobsPikr job posting signals.

The monitor tells the C-suite three things in a single view that would otherwise require three separate research projects and a three-week lag.

- First: the market is moving toward hybrid AI roles at speed. AI Content Strategist demand grew 83% in a single quarter. The window to build this capability before it becomes expensive is narrowing.

- Second: competitor posture is diverging. Competitor A is doubling down on content hiring, a signal that its workforce strategy is moving toward augmentation rather than elimination. Competitor B is pulling back, either making a Type B cut or exiting the function. Competitor C cut and reposted the same role within the quarter, a textbook Type B rehire signal that is now visible before it hits the industry press.

- Third: the salary curve for the emerging role is rising fast. At +8.5% year on year for AI Content Strategist compensation, waiting another quarter to restructure means paying a premium to access the same talent the company could be reskilling internally right now.

This is what talent intelligence infrastructure looks like. Not a quarterly report from a research firm. Not an annual salary survey. A live signal layer updated in real time, built on job posting data, and calibrated to the specific competitive context the company operates in.

The organisations running this view are making workforce decisions six to twelve months ahead of the ones that aren’t. That gap is the intelligence advantage, and in an environment defined by AI layoffs 2026, it is the difference between a decision made on data and a decision made on instinct, announced in a press release, and quietly reversed twelve months later.

AI Layoffs 2026: The Right Question Isn’t ‘Will AI Take Your Jobs?’

The wrong question has dominated the conversation around AI layoffs 2026 from the start. Whether AI will take jobs is not, at this point, a useful frame. The job posting data answers it plainly: some roles are in structural decline, the decline predates the AI narrative by 18 months in most cases, and the trajectory is unlikely to reverse. That part is settled.

The question that matters, the one the data in this report is actually built to answer, is different: are the decisions being made right now, in this window, based on what the evidence shows or on what the press release needs to say?

The organisations that will define the AI era are not the ones that moved fastest or announced most confidently. They are the ones who asked the harder question first. The ones whose talent intelligence teams pulled the role demand data before the headcount decision went to the board. The ones whose workforce strategists ran the skills adjacency map before the redundancy consultation began. The ones whose C-suite reviewed a live competitor hiring posture dashboard before assuming that what their peers were doing was either right or wrong.

Those organisations are not waiting for the next McKinsey report or the next Forrester prediction cycle to tell them what the market is doing. They are reading the market directly, in real time, through job postings, the only data source that reflects employer intent before it becomes a hiring decision, a layoff announcement, or a quietly reversed press release.

The AI transformation of the workforce is real. The timeline is longer, the readiness gap is wider, and the ROI is more concentrated than the headlines suggest. But the intelligence advantage available to the teams willing to look at the data is also larger than most organisations have yet recognised.

The question isn’t whether AI will reshape your workforce. It already is. The question is whether you’ll see it clearly enough, and early enough, to make decisions you won’t be reversing in twelve months.

JobsPikr gives you the intelligence layer to answer that question with confidence. Sign up now!

References:

- Challenger, Gray & Christmas (2025). Job Cut Report — Full Year 2025. Retrieved via CNBC.

- Deloitte (2025). The AI ROI Paradox: Rising Investment and Elusive Returns.

- Deloitte (2026). State of AI in the Enterprise.

- Forrester (2026). The AI Layoff Trap: Why Half Will Be Quietly Rehired. Retrieved via HR Executive.

- Harvard Business Review (2026, January). Companies Are Laying Off Workers Because of AI’s Potential, Not Its Performance.

- IBM / Censuswide (2025). Race for ROI: Two-Thirds of Surveyed Enterprises in EMEA Report Significant Productivity Gains from AI.

- McKinsey & Company (2025). The State of AI 2025.

- MIT NANDA Study (2025). State of AI in Business 2025 Report.

- Network World (2026). Tech Layoffs Surpass 45,000 in Early 2026.

- Pepper Foster (2025). The Artificial Intelligence AI ROI Report.

- World Economic Forum (2025). The Future of Jobs Report 2025.

Company & News Sources

- CBS News (2026). AI Layoffs 2026: Amazon, Pinterest and More.

- The Conversation (2025). Tech Companies Are Blaming Massive Layoffs on AI — What’s Really Going On?

FAQs:

What are the biggest tech companies recently announcing AI-related layoffs?

The biggest names driving the AI layoffs 2026 wave include Amazon, which accounted for 52% of tech layoffs in Q1 2026 alone, alongside Block, Meta, Atlassian, Pinterest, Indeed, Glassdoor, and Salesforce. IBM and Microsoft made significant cuts in 2025. JobsPikr job posting data shows that several of these companies, including Amazon and Block, surged hiring in H2 2025 before their posting volumes collapsed in Q1 2026, suggesting strategic restructuring decisions rather than gradual AI-driven productivity gains.

What major tech companies are planning AI-related layoffs in 2026?

Beyond the cuts already announced, JobsPikr hiring velocity data identifies the early warning signals worth watching: companies whose total job posting volumes are declining consistently quarter on quarter, whose AI enablement hiring has not kept pace with their AI efficiency claims, and whose geographic posting mix is shifting toward lower-cost markets. The AI layoffs 2026 wave is not finished, but the next announcements are already visible in the job posting data before they reach the press.

What factors are driving job reductions in AI development departments?

Three factors are converging to drive AI layoffs 2026 across development departments specifically. First, the pivot from AI build to AI deploy: many companies that overhired ML engineers and AI researchers during the 2022–2024 model-building era are now consolidating into smaller teams using third-party foundation models rather than building proprietary ones. Second, investor pressure to demonstrate AI-driven cost efficiency is incentivising headcount reductions as a market signal, independent of actual productivity gains. Third, the concentration of AI development capacity inside a small number of hyperscalers is reducing the need for mid-size companies to maintain large in-house AI development functions.

What job sectors are projected to experience the most AI-driven layoffs by 2026?

JobsPikr job posting data identifies the sectors already showing the steepest structural declines heading into 2026. Customer service and support roles have seen posting volumes fall 24–28% over 18 months. Data entry and basic bookkeeping have declined 34% and 10% respectively across the same period. In the broader AI layoffs 2026 landscape, media and publishing is experiencing the sharpest content role contraction, down 34% year on year for copywriter postings, while telemarketing has declined 29%. Notably, B2B SaaS and financial services are moving in the opposite direction, with content and analytical roles growing as AI augments rather than replaces.

What industries outside of tech might hire AI talent after 2026 layoffs?

The industries absorbing displaced AI talent following the AI layoffs 2026 wave are those with large unmet demand for AI implementation at scale. Financial services leads: AI Governance and Responsible AI skills are growing fastest here, driven by regulatory requirements around AI deployment in lending, fraud detection, and customer decisioning. Healthcare is hiring aggressively for AI Implementation Specialists to manage clinical AI tools and compliance workflows. Manufacturing is building AI Enablement Manager capability to oversee predictive maintenance and supply chain optimisation systems. Retail and logistics are absorbing AI Project Managers as automation infrastructure investment accelerates. All four sectors are identifiable in JobsPikr’s training investment proxy data as active builders of AI readiness infrastructure.

How will AI layoffs in 2026 impact the software development job market?

The impact of AI layoffs 2026 on software development is more nuanced than the headlines suggest. Junior and entry-level developer roles, particularly those focused on routine code generation, QA testing, and basic front-end work, are facing the steepest structural pressure, as AI coding tools absorb a meaningful share of that output. Senior and specialist development roles are proving more resilient: AI systems require experienced engineers to architect, validate, and maintain them at production scale. The net effect is a compression of the junior pipeline and a salary premium increase for experienced developers, a pattern already visible in JobsPikr’s salary benchmarking data for AI-adjacent engineering roles, where compensation grew 8.5% year on year in Q1 2026.