- **TL;DR**

- Why Responsible Data is Becoming the New Differentiator for HR Tech Leaders

- Ready to Build Your Talent Intelligence on Responsible Data?

- How Ethical Data Collection and Ethical Scraping Build Better Labor Market Decisions

- Inside the JobsPikr Responsible Data Framework: From Ethical Scraping to Delivery

- Ready to Build Your Talent Intelligence on Responsible Data?

- How JobsPikr Keeps Data Integrity Strong Across Changing Job Markets

- Why Responsible Data and Data Ethics are now a Competitive Advantage

- Why JobsPikr is a Responsible Data Partner for Labor Market Intelligence

- Ready to Build Your Talent Intelligence on Responsible Data?

-

FAQs

- 1. What is responsible data in HR tech and labor market analytics?

- 2. What is ethical scraping, and why does it matter for job data?

- 3. What is data integrity, and how does it affect labor market data quality?

- 4. How can HR and analytics teams ensure ethical data collection when working with vendors?

- 5. Why is responsible data especially important for AI and predictive HR models?

**TL;DR**

Responsible data is no longer a “nice to have.” It is fast becoming the real differentiator between HR tech vendors that you can trust and those that quietly increase your risk. As AI, dashboards, and labor market analytics move closer to core decision-making, leaders are waking up to a simple truth: if the job data feeding your models comes from opaque, unethical scraping and weak data integrity practices, every insight built on top of it is fragile.

Research backs this up. Around 71% of consumers say they would stop doing business with a company if it mishandled their sensitive data, and 87% won’t work with organizations whose security practices they don’t trust. Meanwhile, the average global cost of a data breach has hit roughly USD 4.88 million.

This is why JobsPikr treats responsible data, ethical scraping, and data integrity as core product principles, not marketing slogans. From how job data is collected, to how it is normalized, governed, and delivered, JobsPikr’s approach is built around transparency, respect for source websites, and predictable, high-quality labor market data you can defend in any internal review.

If you are building HR analytics, AI models, or workforce planning tools, the real question is no longer “how much data can we get?” It is “how responsible is this data, and can we explain exactly how it was collected and processed?”

Why Responsible Data is Becoming the New Differentiator for HR Tech Leaders

Image Source: datamakestheworld

There was a time when “more data” automatically sounded like a good thing. More job postings, more resumes, more signals, more feeds. In that world, vendors bragged about scale and coverage, and nobody asked too many questions about how the data got there.

That world is disappearing.

Multiple studies now show that trust around data handling has become a hard business filter. Cisco’s research, for example, found that 76% of consumers would not buy from an organization they did not trust with their data, and 81% believe how an organization treats data reflects how it respects its customers. Those attitudes do not stop at consumer apps. They carry over into B2B buying, HR platforms, and analytics stacks as well.

For HR tech leaders, this means your choice of data partners has become part of your brand. If you are using job data sourced through aggressive, non-compliant scraping or unclear pipelines, you are inheriting that risk. You might not see it on day one, but it will show up the moment someone in legal, security, or procurement asks, “Where does this labor market data actually come from?”

What “responsible data” really means in a job data context

Responsible data is not just a privacy line in a policy document. It is a set of daily decisions about:

- Which sites are scraped and under what rules.

- What kind of job data is collected and what is deliberately left out.

- How that data is stored, secured, and governed.

- How clearly the vendor can explain their approach to your teams.

In the context of job data and labor market intelligence, responsible data ties together ethical scraping practices, clear data ethics, and strong data integrity controls. When those pieces line up, your analytics stack becomes not only more compliant but also more useful. You stop spending cycles cleaning up messy feeds and start spending your time on actual workforce decisions.

Why leaders are shifting from “more data” to “trusted data”

The shift is being pushed by both risk and opportunity.

On the risk side, the cost of getting data wrong is climbing. IBM’s latest numbers show that a single data breach now costs organizations an average of around USD 4.88 million, with much of that coming from business disruption and lost customers. When job data platforms are plugged into core HR systems, a weak link upstream can quickly turn into a security or compliance issue downstream.

On the opportunity side, surveys show that leaders see responsible data and AI ethics as a genuine differentiator. Over 75% of business leaders say AI ethics is important to their organization, and 75% believe ethics itself is a source of competitive differentiation. In practice, that means choosing vendors who can demonstrate not only performance but also governance.

The hidden risks of ignoring data ethics

Ignoring data ethics rarely blows up on day one. The problems are quieter and slower.

You might start with feeds that look rich on paper but are full of duplicates, broken locations, scraped noise, and postings taken from places that have not consented to that kind of reuse. Over time, this undermines your data integrity and produces insights that feel “off” to stakeholders. When people stop trusting the dashboard, they go back to gut decisions.

You also open yourself up to questions from regulators, customers, and candidates. If they discover that your shiny AI-powered talent platform is built on top of job data collected in ways that ignore robots.txt signals or website terms, the conversation moves from innovation to risk very quickly.

Ready to Build Your Talent Intelligence on Responsible Data?

Your decisions are only as strong as the data behind them. Give your team job market insights they can trust.

How Ethical Data Collection and Ethical Scraping Build Better Labor Market Decisions

A lot of people treat “scraping” like a single, undifferentiated activity. In reality, there is a big gap between ethical scraping and the kind of scraping that quietly breaks rules or ignores the rights of sources and users.

Image Source: PromptCloud

Ethical scraping vs traditional scraping: what’s the difference?

Traditional scraping often chases volume at any cost. It ignores robots.txt, overloads sites with aggressive request patterns, and collects more data than is genuinely needed, including personal or sensitive details that should never end up in an analytics feed.

Ethical scraping, by contrast, is designed around respect and constraint. It pays attention to robots.txt and site terms. It focuses on job data fields that are necessary for analysis instead of hoovering up everything on the page. It avoids sites or formats that clearly do not wish to be harvested. And it uses technical controls to keep the load on source websites reasonable.

The result is not only better data ethics but also better data integrity. Ethical scraping practices reduce noise, lower the risk of takedown requests, and make sure your labor market data remains stable over time instead of breaking every time a site layout changes.

Why transparency is central to ethical data collection

Ethical data collection is not only about what you scrape; it is also about how openly you talk about it.

For responsible data partners, transparency means being able to explain:

- Which categories of sites are included in your coverage.

- How source lists are maintained and cleaned.

- What logic governs when a site is crawled and when it is skipped.

This matters because your internal governance teams will ask for it. Security and compliance teams are increasingly involved in vendor choices, and they are not satisfied with “we follow best practices” as an answer. They need evidence of those practices.

JobsPikr’s approach is to treat transparency as part of the product. Clear documentation, consistent schemas, and open communication about how job data is collected make it easier for your teams to sign off and move forward.

How to ensure data integrity when you work with large-scale job data

Once ethical collection is in place, the next challenge is data integrity. When you are ingesting job postings at scale, cracks can appear quickly: duplicate postings, outdated roles, missing locations, or inconsistent job titles.

So, how do you ensure data integrity in a real-world labor market dataset?

What is data integrity in the context of job data?

Data integrity is about more than correctness in a single row. It is the ongoing trust that your job data behaves the way you expect every time you query it. In practical terms, data integrity for job data means:

- Job titles follow consistent patterns instead of dozens of random variants.

- Locations can be reliably mapped to cities, regions, and countries for analysis.

- Dates actually reflect when a posting was live, not when it was first seen months ago.

- Each job posting is represented once, not five times.

When this kind of integrity is present, your talent analytics and labor market dashboards stop feeling fragile. Workforce planners and HR leaders can ask hard questions about skills, geo-spread, and hiring velocity, and trust the answers they get back.

The role of validation, deduplication, and normalization

Ensuring data integrity is not a one-time project. It is a daily discipline.

Validation checks catch obvious errors, such as impossible dates or missing fields. Deduplication logic identifies postings that appear across multiple job boards and consolidates them into a single canonical record. Normalization processes convert job titles, company names, and location formats into a consistent language that your analytics tools can understand.

JobsPikr bakes these steps into the pipeline instead of treating them as optional add-ons. That means the “raw” job data you receive has already been through data integrity checks, so your team does not waste cycles cleaning the same problems again and again.

Benchmark Your Data’s Integrity in Minutes

Inside the JobsPikr Responsible Data Framework: From Ethical Scraping to Delivery

JobsPikr’s stance is simple: if the data is not responsible, it is not useful. So the entire framework is built around ethical scraping, clear data ethics, and predictable quality.

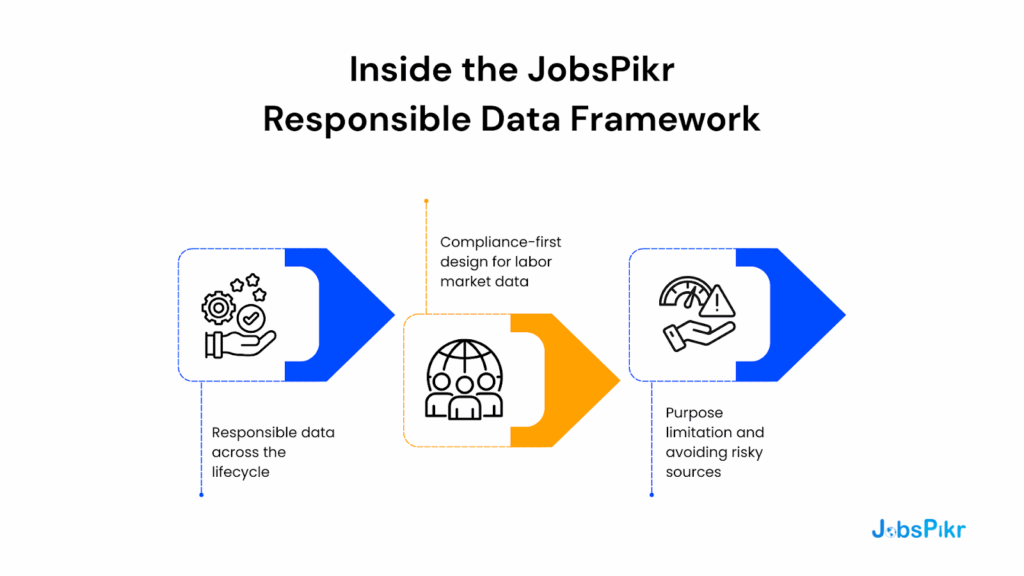

Responsible data across the lifecycle

From the moment a potential source is considered, JobsPikr evaluates it against a responsible data checklist. That includes whether the website allows automated access, whether job data is clearly intended for public visibility, and whether scraping would create undue load on the site.

Collection processes are tuned for respect and restraint. Instead of hammering every page as fast as possible, JobsPikr uses throttling, scheduling, and monitoring to keep requests within acceptable boundaries. That directly supports long-term relationships with the ecosystem instead of short-term extraction.

Once collected, job data passes through normalization and integrity layers before it ever reaches customers. This is where data ethics and data integrity intersect: sensitive fields that are not needed for labor market analysis are avoided, and the remaining fields are standardized and cleaned.

Compliance-first design for labor market data

Regulators are increasingly focused on how AI and data are governed. Surveys show that although 79% of executives say AI ethics is important, less than a quarter have fully operationalized governance frameworks. JobsPikr’s product is designed to help you land on the right side of that gap.

By providing structured, well-documented job data that respects source constraints, JobsPikr makes it easier for your teams to demonstrate due diligence. When auditors or internal stakeholders ask where your job data comes from and how it is handled, you can point to a clear, documented framework instead of vague assurances.

Purpose limitation and avoiding risky sources

A core principle in data ethics is purpose limitation: collecting only what is needed for the stated purpose. For labor market data, that means focusing on fields that support skills analysis, hiring trends, compensation signals (when available), and geo-spread. It does not require scraping every personal detail on the page.

JobsPikr leans into this by actively avoiding risky sources and fields that do not align with labor market analytics use cases. That keeps your downstream models and reports focused on the job data that matters instead of dragging in unnecessary risk.

Ready to Build Your Talent Intelligence on Responsible Data?

Your decisions are only as strong as the data behind them. Give your team job market insights they can trust.

How JobsPikr Keeps Data Integrity Strong Across Changing Job Markets

Labor market data changes fast. Titles evolve, skills appear out of nowhere, and companies rewrite job descriptions to reflect new priorities. A responsible data provider has to keep up with that pace without sacrificing integrity.

Schema discipline and versioning

JobsPikr uses disciplined schemas for job data fields so that your analytics do not break every time a new skill shows up or a job board tweaks its layout. When schema changes are required, they are versioned and documented, so your teams know exactly what has changed and when.

This is especially important when you are training AI models on job data. If the underlying structure shifts silently, your model performance can degrade without any obvious external signal. Schema discipline is a quiet but powerful part of responsible data.

Deduplication, noise reduction, and timestamp accuracy

Responsible data also means saying “no” to junk. JobsPikr applies deduplication to avoid counting the same job multiple times just because it appears across several job boards. It filters out stale postings that have long since expired. It tracks when a posting was first seen and when it disappeared, giving you more accurate time-series analyses for hiring velocity and demand.

These details may sound small, but they are exactly the kind of things that separate “looks big on a slide” datasets from genuinely reliable labor market data.

Benchmark Your Data’s Integrity in Minutes

Why Responsible Data and Data Ethics are now a Competitive Advantage

There is a temptation to treat data ethics as pure risk management. Something to worry about, so you do not end up in a headline. But the numbers suggest a more positive story.

Recent surveys show that 71% of consumers would stop doing business with a company if it mishandled their data, while 94% of organizations say their customers will not buy from them if they do not protect it properly. That means responsible data practices do not just avoid loss; they also open the door to growth and loyalty.

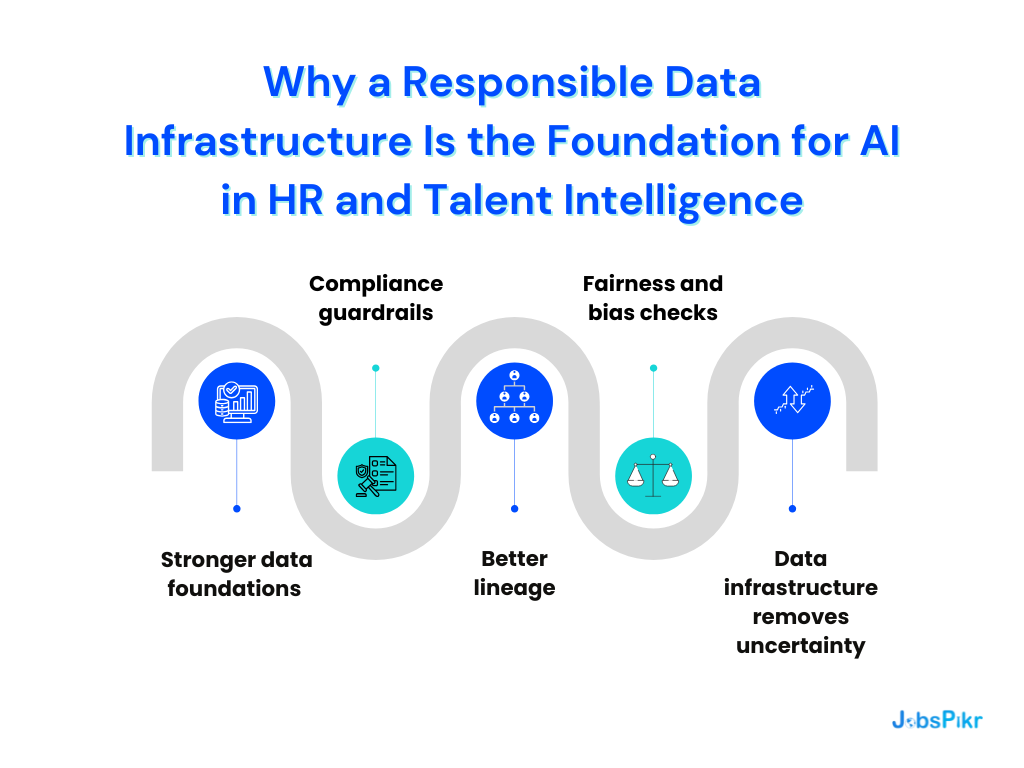

For HR tech and analytics teams, responsible data has three clear advantages.

First, it strengthens internal trust. When your CHRO, CIO, and CFO see that your job data partner has a clear, ethical scraping and governance framework, they are far more likely to support ambitious projects built on top of that data.

Second, it improves model performance. Clean, well-governed job data with strong data integrity simply performs better in AI models than noisy, ethically questionable collections. That translates into better predictions, more accurate demand signals, and fairer recommendations.

Third, it becomes part of your story to the market. When your customers or employees ask how their data and external signals are handled, being able to talk about responsible data practices is no longer optional. It is part of your value proposition.

Why JobsPikr is a Responsible Data Partner for Labor Market Intelligence

JobsPikr’s entire product philosophy is anchored in responsible data. Ethical scraping is treated as a technical design constraint, not a marketing line. Data integrity checks are built into the pipeline, not left to customers. Documentation about sources, schemas, and usage is created so your teams can make informed decisions, not kept behind the curtain.

For HR tech leaders and analytics teams, that means you can focus on the questions that matter:

- Which skills are rising in specific markets?

- How is demand shifting for key roles?

- Which locations are heating up or cooling down for hiring?

You do not have to constantly second-guess whether the underlying job data is compliant, ethical, or stable. JobsPikr’s responsible data framework is designed so you can build confidently on top of it.

Ready to Build Your Talent Intelligence on Responsible Data?

Your decisions are only as strong as the data behind them. Give your team job market insights they can trust.

FAQs

1. What is responsible data in HR tech and labor market analytics?

When people talk about “responsible data,” they’re really talking about whether you’d feel comfortable explaining your data choices in a room full of lawyers, candidates, and employees. In HR tech and labor market analytics, responsible data means you know exactly where your job data comes from, why you’re using it, and what lines you refuse to cross.

It covers three basic things. First, the way you collect job data: are you pulling it from places that are clearly meant to be public and following the rules those sites set? Second, how you process it: are you stripping out anything sensitive you don’t need, and keeping your data integrity high so the numbers reflect reality? Third, how you use it: are your insights and models aligned with clear, fair goals rather than “collect everything and see what happens”?

If your team can answer those questions honestly, document the answers, and defend them in front of stakeholders, you are a lot closer to working with responsible data than most organizations in the market.

2. What is ethical scraping, and why does it matter for job data?

Ethical scraping is what happens when you treat other people’s websites like partners in an ecosystem, not targets to extract from. In practice, that means you respect robots.txt rules, avoid scraping around barriers that were clearly put there for a reason, and focus on fields that make sense for labor market data instead of copying every detail from a page.

When it comes to job data, this is not just a “nice to have.” Your job boards, company career sites, and aggregators are the foundation of the hiring economy. If your vendor scrapes them aggressively, ignores their guidelines, or captures information that goes beyond what’s needed, they aren’t just creating a data ethics problem, they’re creating a business risk for you.

Ethical scraping gives you something very simple but very valuable: predictable, stable job data. Fewer takedown issues, fewer broken feeds, and a much stronger story when someone inside your company asks, “Are we comfortable building decisions on top of this?”

3. What is data integrity, and how does it affect labor market data quality?

Data integrity is a fancy way of asking, “Can we trust this dataset every single time we touch it?” For labor market data, it shows up in very practical ways. Are job titles standardized, or do you have twenty versions of the same role? Can you actually filter by city or region without half the locations breaking? Do jobs appear once, or are you counting the same vacancy three times because it appears on three boards?

When data integrity is weak, every meeting turns into an argument about the numbers. Someone will say, “That can’t be right,” and they are often correct. Duplicates, stale postings, and inconsistent formats quietly distort your view of job demand, skills trends, and hiring velocity. That’s how “bad” labor market data poisons otherwise good analysis.

Strong data integrity does the opposite. It makes your dashboards boring in the best way. People stop debating whether they can trust the job data and start debating what to do with it. In the context of responsible data, integrity is the part that turns ethical data collection into insights you can act on.

4. How can HR and analytics teams ensure ethical data collection when working with vendors?

The simplest answer is: stop treating data ethics as an afterthought in procurement. Put it right at the top of your checklist. When you evaluate a job data or labor market intelligence vendor, do not just ask about coverage and features; ask how they collect, filter, and store that data.

A practical approach is to have a short, non-negotiable set of questions. For example: Which sources do you use and why? How do you handle robots.txt and website terms? What kind of data do you deliberately avoid collecting? What controls are in place to maintain data integrity over time? Then share the answers with your security, legal, and privacy teams instead of keeping the conversation inside HR alone.

Good vendors will have thought about ethical scraping and ethical data collection long before you ask. They will have documentation, examples, and a clear explanation of their data ethics. If a vendor hesitates, changes the subject, or only offers vague language like “industry best practices,” that is usually your sign to be cautious.

5. Why is responsible data especially important for AI and predictive HR models?

AI models are very good at amplifying whatever you feed them. If the labor market data going in is biased, messy, or collected in ways that would make your legal team uncomfortable, the model will happily bake those problems into every score, forecast, and recommendation it produces. You might not see the damage on day one, but it will show up in skewed shortlists, odd salary signals, or risky decisions that are hard to justify.

Responsible data gives your AI a more solid foundation. If your job data comes from ethical scraping, is cleaned with strong data integrity checks, and sits inside a clear data ethics framework, you can be more confident that the patterns your models find reflect the real world. It also becomes much easier to explain your AI to stakeholders. When someone asks, “Where did this prediction come from?” you are not stuck saying, “From a black box.”

In short, responsible data is not separate from AI performance. It is one of the main reasons your AI earns trust instead of suspicion. When predictive models are powered by well-governed job data and transparent collection practices, they stop being experiments and start becoming credible tools for workforce decisions.