- **TL;DR**

- What makes labor data “AI-ready” instead of just another messy feed?

- Step 1: How to design a labor data ingestion architecture that does not fall apart at scale

- Step 2: How to normalize labor market data so AI models stop tripping over inconsistencies

- Step 3: How enrichment transforms basic labor data into an intelligence layer

- Step 4: How to architect a labor data warehouse that supports long-term AI workloads

- Step 5: How to score, label, and structure labor data so AI models can actually learn from it

- Step 6: How to build a data governance and quality pipeline for labor data

- Step 7: How to deliver an AI-ready labor market dataset to teams that need real-time insights

- Real-world examples: What AI-ready labor data unlocks for HR and workforce teams

- Skills intelligence that tells you what roles actually require today

- Understanding talent shortages and demand patterns across regions

- Pay transparency and compensation benchmarking rooted in real data

- Competitive hiring intelligence without guesswork

- Workforce forecasting that feels grounded instead of speculative

- Building a scalable data infrastructure blueprint for labor market data (Summary)

- FAQs:

- 1. What is labor data and why does it matter for AI models?

- 2. How is an AI-ready labor market dataset different from a collection of job postings?

- 3. Why is normalization so important in labor market data software?

- 4. What makes a labor dataset truly AI-ready instead of just cleaned up?

- 5. How can companies use an AI-ready labor market dataset to improve planning and decision-making?

**TL;DR**

If you work with job postings, salary ranges, or workforce reports, you already live in labor data every day. The problem is not getting data. The problem is getting labor data into a shape where your models and dashboards can trust it.

In the real world, the same role shows up as “Sr. SWE,” “Senior Software Engineer,” and “Backend Rockstar.” Locations jump between full addresses, vague “remote,” or half-filled cities. Salary fields are missing in one market and structured in another. On top of that, feeds change quietly, and your downstream systems break without warning.

This guide walks through what an AI-ready labor market dataset actually looks like under the hood. We will go step by step: how labor data is ingested, normalized, enriched, stored, labeled, and finally exposed as something your AI and analytics teams can plug into without babysitting it every week.

If you are comparing labor market data tools or debating whether to build your own stack, think of this as the architecture sketch you keep open in the next tab.

What makes labor data “AI-ready” instead of just another messy feed?

Let’s start with something everyone working with labor data eventually realizes: collecting data is the easy part. Making it usable is the real work. AI-ready labor data is not a fancy label. It is the point where the dataset stops fighting you and starts supporting the decisions and models you want to build.

Below are the three characteristics that separate AI-ready labor data from a raw, unpredictable feed.

AI-ready labor data has a consistent structure across every field

When labor market data comes in, it arrives in dozens of shapes. Job titles follow different naming styles. Skills appear in free text, nested lists, or not at all. Locations range from full street addresses to vague mentions like “Remote Europe.” Without a shared structure, your model sees every variation as a new pattern instead of a part of the same concept.

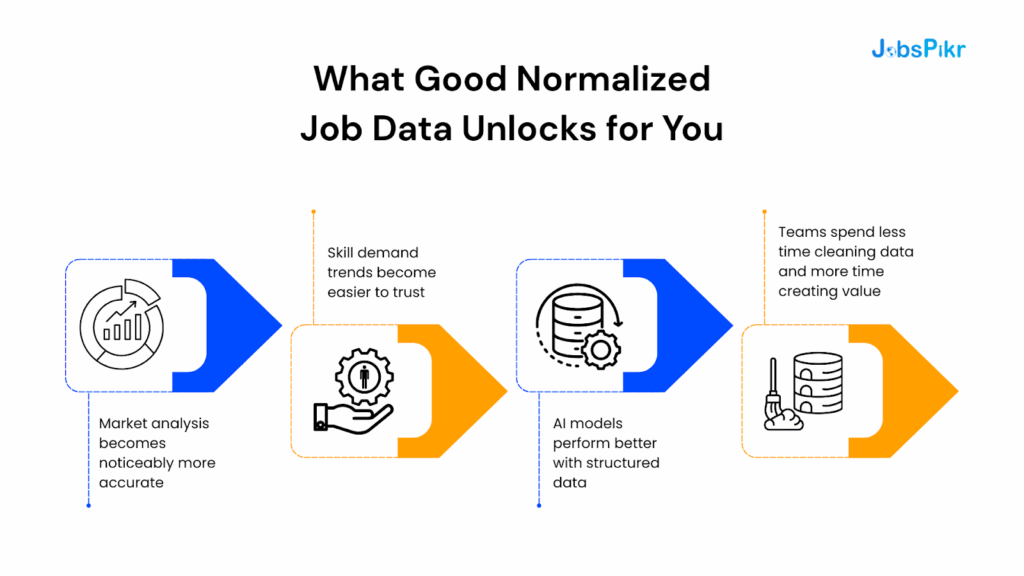

Once you normalize these fields, patterns that were scattered across formats start to line up. “Sr. SWE,” “Senior Software Engineer,” and “Software Engineer III” stop behaving like unrelated roles. Location-based insights stop drifting because everything maps to the same standard geography. This is where labor data stops being noisy and starts being learnable.

AI-ready labor data is complete enough for real decision-making

Completeness does not mean filling every blank cell. It means you have the essential fields your AI and analytics workflows rely on. For HR tech, workforce planning, and compensation benchmarking, this usually includes standardized job titles, skills, seniority levels, industries, and compensation indicators when available.

Public sources like the Bureau of Labor Statistics release wage data on a regular schedule, and layering these signals onto job postings fills gaps that raw datasets cannot cover. When completeness is managed at the field level, models can generalize instead of overfitting to whatever fragmented data happens to be present.

AI-ready labor data keeps its shape as the market changes

Labor data is dynamic. New job titles appear. Emerging skills go mainstream. Salary ranges change. Platforms update their posting structures. If your dataset breaks every time one of these shifts happens, it is not AI-ready yet.

A stable labor data architecture adapts without creating downstream chaos. That usually means versioned schemas, reliable validation, and clear rules for introducing new fields or value formats. When the structure is durable, your models have a consistent foundation instead of constantly retraining on moving targets.

Download the AI Readiness Index Report

Step 1: How to design a labor data ingestion architecture that does not fall apart at scale

Before anything can be normalized, enriched, or prepared for AI, you need a reliable way to bring labor data into your system. This sounds simple until you remember how many sources labor market data actually comes from. Job boards. ATS exports. Government datasets. Career sites. Aggregators. Niche communities. Compensation surveys. Every source speaks its own language, and every source updates on its own schedule.

A strong ingestion layer solves that problem. It turns scattered labor data sources into a steady, predictable flow your downstream pipelines can trust.

Why continuous ingestion matters for modern labor market data

Labor data changes constantly. One company may update its postings daily. Another might refresh listings only when roles close. Government sources like the Bureau of Labor Statistics publish new labor data monthly or quarterly. Skills trends shift weekly. New layouts appear on career sites without warning.

If your ingestion architecture only collects data in big, inconsistent batches, you lose the signal that makes labor data valuable: change over time.

Continuous ingestion captures the day-to-day churn. It records posting updates, additions, rewrites, and closures as they happen. Instead of a static snapshot, you build a living dataset that reflects real hiring movement. This is the foundation for trend detection, forecasting, salary modeling, and competitive hiring analysis.

Why tracking changes at the posting level is non-negotiable

A job posting is not static. Titles change. Salary ranges appear later. Skill lists expand as hiring managers refine their expectations. Sometimes a role switches locations or moves from on-site to hybrid with no announcement.

If your system only stores the “latest” version, you lose the ability to answer questions like:

- Did the salary increase after the role sat open for a month?

- Did the company shift the required skills midway through hiring?

- Did hiring slow down after the job description changed?

This kind of historical visibility is why AI-ready labor data depends on version tracking. You need snapshots. You need diffs. You need the historical trails that models rely on when learning patterns.
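To make the idea of snapshots and diffs concrete, here is a minimal Python sketch. It assumes each posting version is stored as a flat dictionary; the field names (`salary_min`, `work_mode`) and the `diff_posting` helper are hypothetical, not part of any particular product.

```python
from typing import Any

def diff_posting(old: dict[str, Any], new: dict[str, Any]) -> dict[str, tuple[Any, Any]]:
    """Return {field: (old_value, new_value)} for every field that changed."""
    changed = {}
    for field in sorted(set(old) | set(new)):
        before, after = old.get(field), new.get(field)
        if before != after:
            changed[field] = (before, after)
    return changed

# Two snapshots of the same (hypothetical) posting, captured a few weeks apart.
v1 = {"title": "Senior Software Engineer", "salary_min": None, "work_mode": "on-site"}
v2 = {"title": "Senior Software Engineer", "salary_min": 140000, "work_mode": "hybrid"}

changes = diff_posting(v1, v2)
print(changes)
```

Storing the diff next to each full snapshot keeps questions like "when did the salary appear?" cheap to answer without re-comparing every version.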

The ingestion methods that support a stable labor data pipeline

Most organizations end up using a mix of methods. APIs give you structured, reliable feeds. Government datasets provide standardized labor market indicators. Direct site integrations or scraping layers pick up the signals that data providers don’t always cover.

What matters most is not the method itself but the architecture behind it. You need:

- A scheduler that knows when each source updates

- A retry system for sources that fail intermittently

- A way to capture structural changes (new fields, broken layouts, schema shifts)

- A monitoring layer that catches missing or stale data before it spreads downstream

This is the difference between collecting data and running an actual labor data infrastructure.
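The retry item in the list above can be sketched in a few lines of Python. This is an illustrative pattern under simple assumptions, not a full scheduler: `fetch_with_retry` and `flaky_source` are hypothetical names, and the `sleep` parameter is injectable only so the example runs without actually waiting.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")

def fetch_with_retry(
    fetch: Callable[[], T],
    attempts: int = 3,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call fetch(); after a failure, wait base_delay * 2**attempt and try again."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(attempts):
        try:
            return fetch()
        except Exception:
            if attempt == attempts - 1:
                raise  # out of attempts: let monitoring see the failure
            sleep(base_delay * 2 ** attempt)
    raise AssertionError("unreachable")

# A stand-in source that fails twice, then succeeds.
calls = {"n": 0}

def flaky_source() -> list[dict]:
    calls["n"] += 1
    if calls["n"] < 3:
        raise ConnectionError("temporary failure")
    return [{"id": "job-1"}]

delays: list[float] = []
records = fetch_with_retry(flaky_source, attempts=3, base_delay=0.5, sleep=delays.append)
print(records, delays)
```

Exponential backoff is one common choice here; the right delays and attempt counts depend on how each source behaves.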

See how AI-ready labor data works

Get a closer look at how JobsPikr collects, enriches, and structures global labor data so your AI and analytics teams don’t have to build the pipeline from scratch.

Step 2: How to normalize labor market data so AI models stop tripping over inconsistencies

Once you have labor data coming in reliably, the next challenge is making it usable. Raw labor market data is full of contradictions. Two companies describe the same job in completely different ways. Locations are inconsistent. Skills vary in spelling, abbreviations, and formatting. Even industry labels jump across taxonomies.

Normalization is the step where all of that noise is brought into a common structure so your models can learn from patterns instead of being overwhelmed by variation.

Why job title normalization is the backbone of any labor market dataset

Titles are the starting point for most labor data analysis. The trouble is that job titles rarely arrive in a clean, standard form. One company says “Sr. SWE,” another says “Senior Software Engineer,” a third uses “Software Engineer III,” and a start-up might invent something like “Backend Rockstar.”

If you feed these four titles into a model without standardizing them, the model treats them as four different roles. You end up with scattered clusters, unreliable skill mappings, and weak salary predictions.

Title normalization solves that by mapping messy inputs into a controlled vocabulary — often aligned with structures like SOC codes (in the US) or NOC codes (in Canada). Instead of dozens of variants, your dataset recognizes one canonical title, with all relationships tied back to it. That single step improves model performance more than almost anything else you do downstream.
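A minimal sketch of that mapping in Python, assuming a small hand-built alias table. The `CANONICAL_TITLES` entries and the choice to treat "Software Engineer III" as a senior role are illustrative assumptions; a production system would typically back this with a maintained taxonomy and a fallback model.

```python
import re
from typing import Optional

# Hypothetical alias table: cleaned raw title -> canonical title.
CANONICAL_TITLES = {
    "sr swe": "Senior Software Engineer",
    "senior software engineer": "Senior Software Engineer",
    "software engineer iii": "Senior Software Engineer",  # assumed level mapping
    "backend rockstar": "Backend Software Engineer",      # assumed mapping
}

def normalize_title(raw: str) -> Optional[str]:
    """Lowercase, strip punctuation, collapse spaces, then look up the canonical title."""
    key = re.sub(r"[^a-z0-9 ]", "", raw.lower())
    key = re.sub(r"\s+", " ", key).strip()
    return CANONICAL_TITLES.get(key)  # None means "unknown, route to review"

for raw in ["Sr. SWE", "Senior Software Engineer", "Software Engineer III", "Growth Ninja"]:
    print(raw, "->", normalize_title(raw))
```

Returning `None` for unknown titles, rather than guessing, keeps unmapped variants visible so they can be reviewed and added to the vocabulary.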

Why location normalization matters for geographic labor insights

Locations are just as messy as job titles. Postings may include a full address, a partial city, a vague region like “EMEA,” or simply “Remote.” Some companies include ZIP codes; others leave them out. For global datasets, you also deal with multiple naming conventions, language variations, and administrative boundaries.

To make geographic labor data accurate, you need:

- City and region mapping

- Metro-level alignment (for example, BLS uses metropolitan statistical areas in the US)

- Country-level standardization

- Remote location classification that doesn’t break analysis

Once normalized, you unlock insights that simply don’t appear in raw labor data: wage differences across metros, talent hotspots, cross-border hiring patterns, and regional skill distributions.
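One way to keep "Remote" from breaking geographic analysis is to store it as an explicit flag instead of forcing it into a city field. The Python sketch below assumes a tiny hypothetical alias table (`CITY_ALIASES`) and a hypothetical `Location` record; real pipelines would use a proper gazetteer.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass(frozen=True)
class Location:
    city: Optional[str]
    country: Optional[str]       # ISO 3166-1 alpha-2 code
    is_remote: bool
    remote_scope: Optional[str]  # e.g. "europe" for "Remote Europe"

# Hypothetical alias table: cleaned raw text -> (city, country code).
CITY_ALIASES = {
    "nyc": ("New York", "US"),
    "new york, ny": ("New York", "US"),
    "bangalore": ("Bengaluru", "IN"),
    "bengaluru": ("Bengaluru", "IN"),
}

def normalize_location(raw: str) -> Location:
    text = " ".join(raw.lower().split())
    if text.startswith("remote"):
        scope = text[len("remote"):].strip(" -,()") or None
        return Location(None, None, True, scope)
    city, country = CITY_ALIASES.get(text, (None, None))
    return Location(city, country, False, None)

print(normalize_location("Remote Europe"))
print(normalize_location("Bangalore"))
```

Unrecognized strings come back with empty city and country fields, which makes them easy to count and review instead of silently landing in the wrong region.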

Why industry, employer, and skill normalization complete the picture

Skills show up in every format imaginable. Some companies use comma-separated lists. Others bury skills in a long job description paragraph. Employers appear with different legal names, subsidiary labels, or brand variations. Industries shift depending on whether you use NAICS, ISIC, or proprietary categories.

If these fields stay messy, your downstream analytics stay messy. Normalization pulls them into a stable schema.

This is the moment where labor data stops being text and starts becoming a structured asset suitable for AI.

Step 3: How enrichment transforms basic labor data into an intelligence layer

Normalization gets labor data into a consistent shape, but enrichment is what gives it depth. Raw postings alone rarely contain the full context needed for analytics or AI models. Enrichment fills those gaps by adding structured skill data, compensation indicators, employer metadata, and other layers that turn a simple dataset into a decision-making engine.

Think of enrichment as everything you add after cleaning the data but before storing or modeling it. It’s where raw labor market data becomes something your product team, data scientists, and customers can actually work with.

Skills extraction: the core of any AI-ready labor data pipeline

Skills are the glue connecting job titles, industries, and compensation. Yet most postings bury skills inside long paragraphs with unpredictable formatting. Some companies list specific tools; others emphasize competencies. Some describe responsibilities instead of required skills.

AI-ready labor data solves this with structured skills extraction.

Here’s why it matters:

- It makes roles comparable across companies

- It helps models cluster similar jobs more accurately

- It improves salary predictions when combined with normalized titles

- It highlights emerging skills and hiring trends

Organizations like the OECD have noted rising skill mismatches across industries, which makes this kind of structured skill intelligence even more important when forecasting demand or understanding workforce gaps.

Once extracted and standardized, skills become one of the most valuable enrichment layers in the entire labor market dataset.
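As a rough illustration of structured skills extraction, the Python sketch below matches a description against a small alias dictionary. The `SKILL_ALIASES` table is hypothetical, and dictionary matching is only the simplest possible approach; many pipelines use trained extraction models instead.

```python
import re

# Hypothetical alias table: lowercase surface form -> canonical skill name.
SKILL_ALIASES = {
    "python": "Python",
    "postgres": "PostgreSQL",
    "postgresql": "PostgreSQL",
    "k8s": "Kubernetes",
    "kubernetes": "Kubernetes",
    "machine learning": "Machine Learning",
}

def extract_skills(description: str) -> list[str]:
    """Return the sorted canonical skills whose aliases appear as whole terms."""
    text = description.lower()
    found = set()
    for alias, canonical in SKILL_ALIASES.items():
        # Lookarounds stop "postgres" from matching inside a longer token.
        pattern = rf"(?<![a-z0-9]){re.escape(alias)}(?![a-z0-9])"
        if re.search(pattern, text):
            found.add(canonical)
    return sorted(found)

skills = extract_skills("We need strong Python and Postgres experience; K8s is a plus.")
print(skills)
```

Mapping every alias to one canonical name is what makes skills comparable across employers that write them differently.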

Compensation signals: one of the hardest but most important enrichment layers

Not all postings include compensation. In many countries, fewer than half of job listings disclose salary ranges. However, public sources like the Bureau of Labor Statistics publish wage data by occupation and region. When you link postings to these external benchmarks, you can fill compensation gaps in a transparent and defensible way.

This form of enrichment matters because:

- It improves models predicting salary ranges

- It strengthens job matching algorithms

- It gives HR teams more realistic compensation baselines

- It exposes geographic pay variations

Even when exact salary numbers are missing, enriched compensation indicators anchor the dataset in real, validated wage patterns.
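The benchmark fallback can be sketched as follows in Python. Everything here is illustrative: the `BENCHMARKS` keys and the wage figure are placeholders, not real published values, and `compensation_signal` is a hypothetical helper. The point is that each value carries a `source` label so enriched pay never masquerades as posted pay.

```python
from typing import Optional

# Placeholder benchmark table keyed by (occupation, metro). The number is invented.
BENCHMARKS = {("software-developer", "Austin"): 115000}

def compensation_signal(posting: dict, benchmarks: dict) -> dict:
    """Prefer the posted range; fall back to a benchmark; otherwise mark as missing."""
    low: Optional[float] = posting.get("salary_min")
    high: Optional[float] = posting.get("salary_max")
    if low is not None and high is not None:
        return {"annual_pay": (low + high) / 2, "source": "posted"}
    key = (posting.get("occupation"), posting.get("metro"))
    if key in benchmarks:
        return {"annual_pay": benchmarks[key], "source": "benchmark"}
    return {"annual_pay": None, "source": "missing"}

posted = {"occupation": "software-developer", "metro": "Austin",
          "salary_min": 100000, "salary_max": 140000}
unposted = {"occupation": "software-developer", "metro": "Austin"}

print(compensation_signal(posted, BENCHMARKS))
print(compensation_signal(unposted, BENCHMARKS))
```

Keeping the `source` field lets downstream models weight posted and benchmark-derived values differently, or exclude one of them entirely.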

Employer metadata: understanding the company behind the posting

The same company can appear under multiple names across job boards, career sites, and aggregators. Without enrichment, your data treats “Google,” “Google Inc,” “Google LLC,” and “Alphabet” as separate employers.

Employer-level enrichment fixes that by:

- Unifying all brand and legal variations

- Linking employers to industry codes

- Adding company size, HQ location, or relevant identifiers

This means when you analyze hiring patterns or build competitive intelligence, your dataset reflects reality instead of a fractured version of it.
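A simplified Python sketch of employer unification, using the example above. It strips common legal suffixes and then applies a curated brand-to-parent table. `LEGAL_SUFFIXES` and `BRAND_TO_PARENT` are hypothetical and deliberately tiny, and whether to roll a brand up to its parent company is a design decision that depends on the analysis.

```python
import re

LEGAL_SUFFIXES = {"inc", "llc", "ltd", "corp", "corporation", "gmbh", "co"}

# Hypothetical curated table: brand key -> parent key.
BRAND_TO_PARENT = {"google": "alphabet"}

def employer_key(raw_name: str) -> str:
    """Lowercase, drop punctuation, and strip trailing legal suffixes."""
    tokens = re.sub(r"[^\w\s]", " ", raw_name.lower()).split()
    while tokens and tokens[-1] in LEGAL_SUFFIXES:
        tokens.pop()
    return " ".join(tokens)

def resolve_employer(raw_name: str) -> str:
    """Map a raw employer string to a single canonical employer key."""
    key = employer_key(raw_name)
    return BRAND_TO_PARENT.get(key, key)

names = ["Google", "Google Inc", "Google LLC", "Alphabet"]
print({name: resolve_employer(name) for name in names})
```

Suffix stripping handles the mechanical variants; relationships like brand and parent company cannot be inferred from the string alone, which is why they live in a maintained table.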

Why enrichment is where raw labor data becomes strategic

You can’t extract workforce trends, skill gaps, geographic differences, or salary insights without enrichment. You also can’t train reliable AI models because they need structured attributes, not unprocessed text.

Enrichment turns the dataset from a collection of job postings into a real labor market intelligence layer — something accurate enough for forecasting, benchmarking, compensation modeling, and large-scale workforce planning.

Download the AI Readiness Index Report

Step 4: How to architect a labor data warehouse that supports long-term AI workloads

Once labor data is enriched, you need a place where it can live, scale, and grow without collapsing under its own weight. This is where the warehouse layer comes in. A labor market dataset is not static. It expands every day, picks up new fields, and accumulates history. If your warehouse can’t support that evolution, everything built on top of it becomes fragile.

A well-designed labor data warehouse does two jobs at the same time: it stores the raw and enriched data safely, and it keeps the structure predictable enough for AI, analytics, and downstream tools to run without breaking every month.

Why partitioning labor data by country, industry, time, and role family matters

Labor data grows fast because hiring activity never stops. If you store everything in one giant table, queries slow down, pipelines lag, and training jobs take longer than they should.

Partitioning solves this by breaking the dataset into meaningful slices.

The most effective partitions for labor market data usually include:

- Country or region, since labor laws, job titles, and compensation norms vary widely

- Industry or sector, which aligns with classification standards like NAICS or ISIC

- Time period (commonly month or week), which supports trend analysis and snapshot queries

- Role family or occupation category, which helps with fast retrieval and training subsets

Partitioning makes your warehouse more predictable. You know which partitions to query for a specific analysis and which ones to ignore. Most importantly, partitioning makes time-series work practical. Analysts can look at hiring spikes, wage changes, or skill adoption patterns across years without running full-table scans.
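As a small illustration, the four partition dimensions above can be combined into a storage path. This Python sketch assumes a `key=value` directory layout of the kind many data lake tools understand; the `partition_path` helper and the specific ordering of keys are assumptions, and the industry value is shown as a two-digit NAICS sector code.

```python
from datetime import date

def partition_path(country: str, industry: str, posted_on: date, role_family: str) -> str:
    """Build a key=value partition path: country / industry / month / role family."""
    role = role_family.lower().replace(" ", "_")
    return (
        f"country={country.upper()}/"
        f"industry={industry}/"
        f"month={posted_on:%Y-%m}/"
        f"role_family={role}"
    )

path = partition_path("us", "54", date(2024, 3, 15), "Software Engineering")
print(path)
```

Which key comes first matters in practice: putting the most common filter (often country or month) at the top lets queries skip the largest amount of data.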

Schema design principles that make labor market datasets AI-ready

Designing a schema for labor data is not about squeezing everything into one table. It’s about separating concerns so each table stays stable even as your pipeline evolves.

A good labor data schema typically includes:

- A job posting fact table with normalized and enriched fields

- Dimension tables for titles, skills, industries, employers, and locations

- Historical tables (for example, slowly changing dimensions) for capturing changes over time

- Metadata tables for tracking ingestion source, version, and freshness

This structure creates a foundation AI models can trust. The fields don’t shift around unexpectedly, and new attributes don’t disrupt older analyses. When the schema has clear boundaries, upstream teams can add features without breaking downstream consumers.

Handling duplicates, stale posts, and schema drift

Labor data is notorious for three operational problems: duplicates, stale listings, and silent schema changes.

Duplicates appear when a job is reposted across different sites, when an employer refreshes a listing, or when external sources syndicate roles. If your warehouse does not deduplicate postings using identifiers or fuzzy matching, your analytics inflate hiring activity and distort trends.

Stale posts happen when roles stay online long after they are filled. Many job boards do not remove expired postings immediately. Without logic to flag or remove stale data, your time-series analysis becomes misleading: older postings often carry outdated wage or skill signals, which skew occupational estimates if kept in circulation.

Schema drift is the quiet problem. Websites update layouts, rename fields, or change formats without announcement. If your warehouse ingests these changes blindly, older records and newer ones stop lining up.

A resilient labor data warehouse has defenses against all three. It checks for duplicates before storing records. It flags stale listings based on posting age or update frequency. And it validates schema structure so unexpected changes don’t break pipelines.
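The first two defenses can be sketched in Python as below. Note the simplifications: this uses an exact key built from cleaned fields instead of fuzzy matching, and the 45-day staleness threshold is an arbitrary assumption. `dedup_key`, `deduplicate`, and `is_stale` are hypothetical helper names.

```python
import hashlib
from datetime import date, timedelta

def dedup_key(posting: dict) -> str:
    """Hash of employer, title, and location after lowercasing and collapsing spaces."""
    parts = (posting["employer"], posting["title"], posting["location"])
    cleaned = "|".join(" ".join(part.lower().split()) for part in parts)
    return hashlib.sha256(cleaned.encode("utf-8")).hexdigest()

def deduplicate(postings: list[dict]) -> list[dict]:
    """Keep the first posting seen for each key, preserving input order."""
    seen: dict[str, dict] = {}
    for posting in postings:
        seen.setdefault(dedup_key(posting), posting)
    return list(seen.values())

def is_stale(last_seen: date, today: date, max_age_days: int = 45) -> bool:
    """Flag a posting that has not been observed for more than max_age_days."""
    return (today - last_seen) > timedelta(days=max_age_days)

a = {"employer": "Acme Corp", "title": "Data Engineer", "location": "Austin, TX", "source": "board-a"}
b = {"employer": "acme  corp", "title": "Data Engineer ", "location": "austin, tx", "source": "board-b"}
c = {"employer": "Acme Corp", "title": "Data Analyst", "location": "Austin, TX", "source": "board-a"}

unique = deduplicate([a, b, c])
print(len(unique), is_stale(date(2024, 1, 1), date(2024, 3, 1)))
```

Exact keys only catch syndicated copies that differ in case or whitespace; reposts with reworded titles need the fuzzy matching mentioned above.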

Why the warehouse layer determines how far your AI can go

If the warehouse is shaky, everything downstream becomes reactive: rushed hotfixes, retraining cycles, mismatched fields, broken dashboards, and inconsistent reporting. But if the warehouse is stable, AI workloads get predictable access to clean, well-organized labor data.

This is how teams move from experimental AI to production-grade intelligence.

The warehouse is the layer that makes long-term labor market modeling possible.

Step 5: How to score, label, and structure labor data so AI models can actually learn from it

By the time labor data reaches this stage, you have a clean, enriched, and well-structured pipeline. But AI models need something more than tidy data. They need labels, categories, and signals that help them understand what each record represents. This step is where raw observations become training-ready features.

Scoring and labeling bring consistency to how your models interpret job titles, skills, seniority levels, compensation patterns, and hiring behaviors. Without this layer, AI ends up guessing far more than it should, which weakens forecasting, clustering, recommendation engines, and matching algorithms.

Classification models: bringing structure to roles, skills, and seniority

A predictable labor dataset assigns each record a structured identity. For most organizations, that means using classification models to tag the following:

- Role categories that map each job to a standardized occupation group

- Seniority levels based on phrasing, experience requirements, and title patterns

- Skill clusters extracted from the posting text and aligned to a known taxonomy

- Industry alignment based on employer metadata and NAICS or ISIC codes

This is not just labeling for the sake of order. Models train better when each posting sits in a well-defined category. It reduces ambiguity and creates a stable foundation for downstream predictions.
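To show what a seniority tag might look like, here is a rule-based Python stand-in. It is not a trained classification model, only a sketch of the inputs such a model would use (title patterns and experience requirements). The rules, labels, and thresholds in `SENIORITY_RULES` and `classify_seniority` are assumptions.

```python
import re
from typing import Optional

# Ordered rules: the first matching pattern wins.
SENIORITY_RULES = [
    ("lead", re.compile(r"\b(lead|principal|staff)\b")),
    ("senior", re.compile(r"\b(senior|sr|iii)\b")),
    ("junior", re.compile(r"\b(junior|jr|intern|entry[- ]level)\b")),
]

def classify_seniority(title: str, min_years_experience: Optional[int] = None) -> str:
    """Tag seniority from title patterns, falling back to required experience."""
    text = title.lower()
    for label, pattern in SENIORITY_RULES:
        if pattern.search(text):
            return label
    if min_years_experience is not None:
        if min_years_experience >= 5:
            return "senior"
        if min_years_experience <= 1:
            return "junior"
    return "mid"

print(classify_seniority("Sr. SWE"))
print(classify_seniority("Software Engineer", min_years_experience=6))
print(classify_seniority("Software Engineer"))
```

Rules like these are often useful as a baseline and as a source of weak labels, even when a learned classifier does the final tagging.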

Public bodies like the Bureau of Labor Statistics rely on similar occupational groupings for workforce projections. The reason is simple: consistent categories make long-term labor trends measurable.

Why historical snapshots dramatically improve forecasting accuracy

Most organizations store only the most recent version of a job posting. That approach throws away valuable information. Every time a posting changes — salary added, skills rewritten, title modified — it reflects a shift in employer strategy or labor market pressure.

Capturing historical snapshots gives you:

- A timeline of how roles evolve

- Signals that correlate with hiring urgency

- The ability to analyze when and why postings change

- Insight into skill drift within occupations

- Better prediction accuracy for fill times and salary trends

Forecasting models trained on static data tend to flatten out important movements. Models trained on versioned historical data learn how the labor market actually moves over time.

Scoring labor data with intent, demand, and competitiveness signals

Scoring layers add nuance. They allow models to understand not just what a posting is, but what it represents.

Useful score types include:

- Demand scores, showing how competitive a role is in a specific region or industry

- Skill intensity scores, reflecting how specialized the job requirements are

- Compensation alignment scores, comparing posted ranges with benchmark wages

- Persistence scores, indicating how long roles stay open before being filled or withdrawn

These scores help AI algorithms distinguish patterns that are invisible in raw data. For example, skill intensity may correlate with longer hiring times. Compensation misalignment might signal why a role remains unfilled. Demand scoring helps highlight talent shortages across locations.
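Two of these scores are simple enough to sketch directly in Python. The formulas are assumptions chosen for illustration: compensation alignment as the ratio of the posted midpoint to a benchmark wage, and persistence as the number of days between the first and last observation of a posting.

```python
from datetime import date

def compensation_alignment(posted_min: float, posted_max: float, benchmark: float) -> float:
    """Posted midpoint divided by the benchmark wage; 1.0 means on benchmark."""
    if benchmark <= 0:
        raise ValueError("benchmark must be positive")
    midpoint = (posted_min + posted_max) / 2
    return round(midpoint / benchmark, 3)

def persistence_days(first_seen: date, last_seen: date) -> int:
    """Number of days a posting stayed visible between first and last observation."""
    return (last_seen - first_seen).days

alignment = compensation_alignment(90000, 110000, 125000)
open_days = persistence_days(date(2024, 1, 10), date(2024, 2, 24))
print(alignment, open_days)
```

Read together, a low alignment ratio and a high persistence count on the same posting is the kind of pattern the paragraph above describes: pay below market and a role that stays open.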

Why structured and scored labor data becomes the foundation of every AI use case

Once labor data is labeled, classified, versioned, and scored, it becomes dramatically more useful. You can feed it into:

- Recommendation engines for job matching

- Compensation models for salary benchmarking

- Forecasting pipelines for demand and fill-time predictions

- Competitive hiring analysis and workforce planning tools

- Skills intelligence platforms that need clean relational data

Without structured labels and scoring, these systems rely on generic embeddings and inconsistent text patterns, which limit their precision.

Scoring and labeling are the steps that turn a dataset into something AI can genuinely learn from — rather than something your team constantly has to correct.

Step 6: How to build a data governance and quality pipeline for labor data

Once scoring and labeling are in place, the next challenge is keeping the dataset healthy. Labor market data ages quickly, sources change without notice, and new fields get introduced all the time. Without strong data governance and a reliable quality pipeline, even the best-designed architecture will drift into chaos.

AI-ready labor data is not just about how it is built. It is about how it is maintained. Governance ensures that your dataset stays trustworthy, versioned, and stable, even as the market shifts around it.

Automated quality checks: the first line of defense against bad labor data

Quality issues in labor market data rarely show up dramatically. They creep in slowly. A source stops updating for a week. A job board changes the way it reports locations. A new field appears unexpectedly. Without automated checks, these problems spread across downstream systems before anyone notices.

An effective quality pipeline monitors:

- Freshness, so you know when a source has gone stale

- Schema consistency, so structural changes don’t silently break ingestion

- Field-level completeness, so critical attributes like title, location, or skills are never missing

- Duplicate patterns, especially from syndication feeds that copy roles across multiple sites

- Location accuracy, since geographic fields are among the most error-prone

Automated checks catch these issues early, ideally before data enters the warehouse. This is where most organizations prevent expensive cleanup later.
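Three of the checks listed above (freshness, schema consistency, and field-level completeness) can be sketched in Python as follows. The required fields, helper names, and thresholds are assumptions for illustration; a real pipeline would run these per source and alert on the results.

```python
from datetime import datetime, timedelta

REQUIRED_FIELDS = ("title", "location", "skills")

def completeness(records: list[dict]) -> dict[str, float]:
    """Share of records with a non-empty value for each required field."""
    total = len(records)
    return {
        field: (sum(1 for r in records if r.get(field)) / total if total else 0.0)
        for field in REQUIRED_FIELDS
    }

def is_fresh(last_ingested_at: datetime, now: datetime, max_gap: timedelta) -> bool:
    """True if the source delivered data within the allowed gap."""
    return now - last_ingested_at <= max_gap

def schema_drift(expected: set[str], record: dict) -> dict[str, set[str]]:
    """Report fields that disappeared and fields that appeared unexpectedly."""
    actual = set(record)
    return {"missing": expected - actual, "unexpected": actual - expected}

batch = [
    {"title": "Data Engineer", "location": "Austin", "skills": ["Python"]},
    {"title": "Data Analyst", "location": "Austin", "skills": []},
    {"title": "Nurse", "location": "", "skills": ["Triage"]},
    {"title": "Driver", "location": "Dallas", "skills": None},
]

report = completeness(batch)
drift = schema_drift(set(REQUIRED_FIELDS), {"title": "Driver", "job_location": "Dallas"})
print(report, drift)
```

Running checks like these before data enters the warehouse means a renamed field shows up as a drift report instead of a column that quietly fills with nulls.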

Human-in-the-loop review: why governance cannot be fully automated

Labor data is constantly evolving. New job titles appear. Skills shift. Companies rebrand. Sectors reorganize. AI can detect anomalies, but it cannot always interpret them in context.

That is why human review remains essential. Governance teams (or vendor data teams if you use a labor market data tool) periodically audit:

- Outlier salary patterns

- Unexpected title variations

- Emerging skills that need taxonomy updates

- Employer name changes or mergers

- Geographic anomalies caused by formatting inconsistencies

A blended approach — automation for detection, humans for interpretation — keeps the system grounded in real-world knowledge instead of rigid rules.

Version control for schemas, taxonomies, and mappings

As labor data grows, so do your internal standards. You add new fields. Update taxonomies. Expand skill mappings. Improve employer normalization logic.

Without version control, these changes become a moving target.

Versioning gives you:

- A clear timeline of how the dataset evolved

- Reproducibility for models trained on older data

- The ability to compare outputs before and after a change

- Traceability for debugging downstream inconsistencies

This stability is especially important for enterprise customers who rely on audit trails. It is also why most mature labor market datasets maintain formal release notes, similar to software.

Why governance is the difference between “data you trust” and “data you constantly fix”

A dataset with strong governance feels different to work with. Downstream systems stop breaking. Schema changes stop surprising people. Your team spends less time patching pipelines and more time building.

Most importantly, the data’s reputation improves. It becomes something product teams can build on, data scientists can model confidently, and AI systems can consume without fragile workarounds.

Governance is not a nice-to-have. It is the operational backbone behind any AI-ready labor market dataset.

Step 7: How to deliver an AI-ready labor market dataset to teams that need real-time insights

Once the data is governed, enriched, and structured for AI, the final step is getting it into the hands of the people who need it. This is where a lot of labor data projects fall apart. The backend is strong, but the delivery layer is clunky, inconsistent, or missing key formats.

An AI-ready labor market dataset must be easy to consume, whether you are an engineer building a model, a product manager designing a feature, or an HR leader exploring hiring trends.

Delivery is not just a technical step. It is the moment when the value of the data becomes visible.

How HR tech platforms typically consume AI-ready labor data

HR technology platforms tend to rely on structured feeds that fit directly into their internal systems. They need predictable schemas, stable identifiers, and historical context they can layer into their own analytics.

A well-delivered labor data feed supports:

- Job matching and recommendation engines

- Skills intelligence modules

- Compensation benchmarking tools

- Workforce planning dashboards

- Talent demand and supply analysis

Because labor data changes quickly, HR platforms need frequent updates without schema surprises. The delivery layer must behave like a dependable service, not a one-off export.

How compensation teams use AI-ready labor data for benchmarking

Compensation teams care deeply about consistency. They want standardized titles, validated skills, and geographically aligned wage signals. They also need reliable historical context.

For example, the US Bureau of Labor Statistics releases occupational wage data annually, which becomes a benchmark when combined with real-time postings from the market. When these pieces come together cleanly, compensation teams can:

- Compare posted salary trends with benchmark wages

- Identify pay gaps by region or skill set

- Detect when pay ranges are misaligned with market pressure

- Provide better guidance to hiring managers

Delivery matters here because compensation teams often use labor data inside spreadsheets, BI tools, or internal modeling environments—not raw databases.

How workforce planning and forecasting teams use structured labor market data

Workforce planning teams need visibility across time. They look for hiring surges, skill shortages, geographic shifts, and competitive behavior. They build scenarios around how talent supply might change.

They rely on delivered data that includes:

- Historical snapshots to track how roles evolve

- Normalized titles across employers

- Regionally standardized locations

- Skill-level insights that show what is rising or fading

- Occupation-level trends that connect back to long-term forecasts

Teams working in planning and forecasting cannot wait for ad hoc data pulls. They need scheduled, reliable updates with stable schemas to keep dashboards and models running.

Why the delivery layer determines whether the dataset becomes widely adopted

You can have the most sophisticated enrichment and warehouse logic in the world, but if the delivery is slow, unstable, or unclear, the dataset will sit unused.

AI-ready labor data becomes valuable only when it flows into:

- APIs powering real-time systems

- Bulk exports for modeling

- BI tools for exploration

- Custom integrations tailored to enterprise stacks

Delivery is where the dataset stops being a backend system and becomes a business asset.

Real-world examples: What AI-ready labor data unlocks for HR and workforce teams

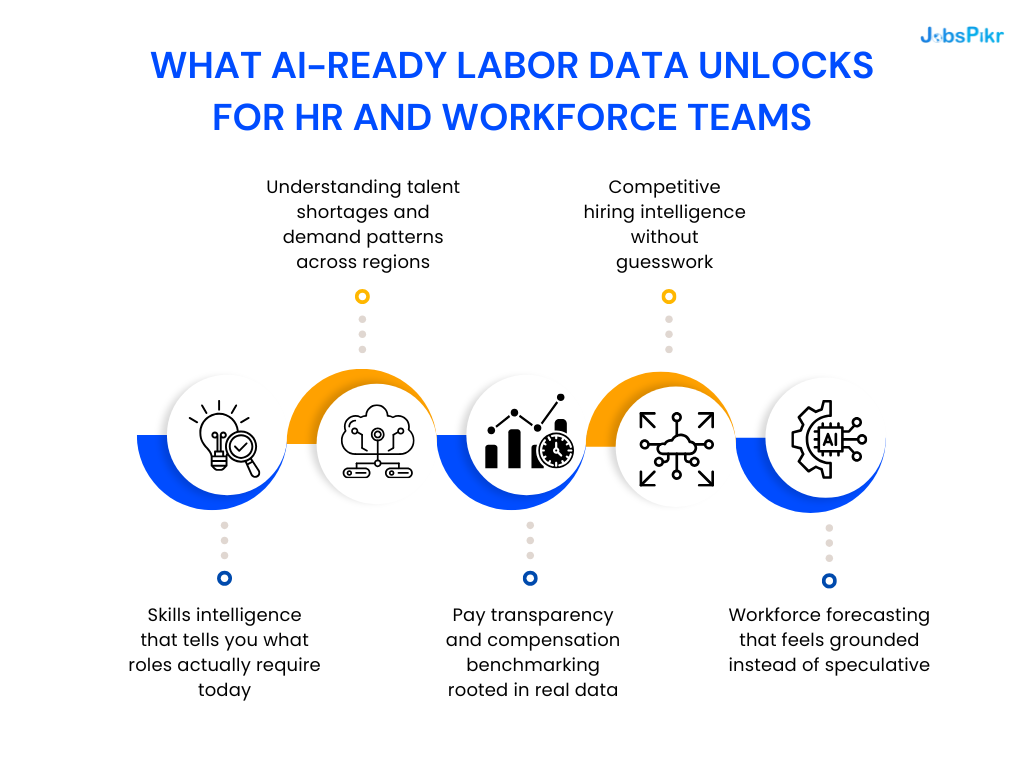

When labor data is cleaned, enriched, structured, and delivered reliably, it stops being a collection of job postings and becomes something teams can use to make real decisions. Below are some practical examples of what organizations achieve once they move beyond raw data and work with an AI-ready labor market dataset.

These use cases are not theoretical. They reflect how HR tech platforms, compensation teams, and workforce planners operate when they finally have reliable labor market intelligence instead of inconsistent feeds.

Skills intelligence that tells you what roles actually require today

Skills shift faster than job titles. Even public bodies like the OECD have reported increasing “skills mismatch” across economies as technology evolves. When you rely on raw postings alone, these changes are buried in free text. An AI-ready dataset surfaces them clearly through normalized and enriched skill signals.

This unlocks questions HR teams care about:

- Which skills are becoming standard for specific roles?

- Which emerging skills are showing up in early pockets of the market?

- Where does our organization lag the talent market on modern requirements?

- How do skill demands differ by region or industry?

Skills intelligence only works when skills are extracted, standardized, and historically tracked.
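As a rough illustration of why all three conditions matter, the sketch below computes how often a skill appears in postings for one role, period by period. It assumes hypothetical record fields (`normalized_title`, `skills`, `period`) that have already been normalized upstream; the function name is also illustrative.

```python
from collections import defaultdict


def skill_share_by_period(postings, role, skill):
    """Fraction of postings for `role` that list `skill`, keyed by period."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for posting in postings:
        if posting["normalized_title"] != role:
            continue
        totals[posting["period"]] += 1
        if skill in posting["skills"]:
            hits[posting["period"]] += 1
    # Only periods with at least one posting for the role appear in the result.
    return {period: hits[period] / totals[period] for period in sorted(totals)}


# Illustrative, made-up records.
sample = [
    {"normalized_title": "Data Engineer", "skills": ["SQL", "dbt"], "period": "2023"},
    {"normalized_title": "Data Engineer", "skills": ["SQL"], "period": "2023"},
    {"normalized_title": "Data Engineer", "skills": ["dbt"], "period": "2024"},
    {"normalized_title": "Data Engineer", "skills": ["SQL", "dbt"], "period": "2024"},
    {"normalized_title": "Recruiter", "skills": ["Sourcing"], "period": "2024"},
]
shares = skill_share_by_period(sample, "Data Engineer", "dbt")
```

If titles or skills were left as raw text, the same calculation would split one trend across many spellings and the share would be understated.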

Understanding talent shortages and demand patterns across regions

Labor shortages are not uniform. Some cities feel pressure long before others. Some industries experience spikes while others cool down. An AI-ready dataset makes these patterns measurable by tying postings to standardized locations and industry codes.

Workforce planners use this to:

- Identify metros with rising competition for specific roles

- Compare hiring velocity across locations

- Understand where relocation or remote hiring improves access to talent

- Plan workforce strategies aligned with regional skill availability

Without location normalization and historical snapshots, these insights are impossible to see.
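A simple way to picture "hiring velocity across locations" is the relative change in posting counts per metro between two periods. This is a sketch under stated assumptions: the `metro` and `month` fields and the function name are hypothetical, and each record is assumed to carry an already standardized location.

```python
from collections import Counter


def posting_growth_by_metro(postings, earlier, later):
    """Relative change in posting counts per metro between two months."""
    counts = Counter((p["metro"], p["month"]) for p in postings)
    growth = {}
    for metro in sorted({m for m, _ in counts}):
        before = counts[(metro, earlier)]
        after = counts[(metro, later)]
        if before:  # skip metros with no baseline to avoid dividing by zero
            growth[metro] = (after - before) / before
    return growth


# Illustrative, made-up records.
sample = (
    [{"metro": "Austin", "month": "2024-01"}] * 2
    + [{"metro": "Austin", "month": "2024-02"}] * 3
    + [{"metro": "Boise", "month": "2024-02"}]
)
growth = posting_growth_by_metro(sample, "2024-01", "2024-02")
```

Metros without a baseline month are left out rather than reported as infinite growth; whether to surface them separately is a design choice for the planning team.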

Pay transparency and compensation benchmarking rooted in real data

Compensation teams benefit enormously from AI-ready labor data, especially when postings include structured salary ranges and can be compared against market benchmarks like BLS wage data.

This helps organizations:

- Detect when their salary ranges fall behind the market

- Understand geographic wage differences

- Spot whether compensation is rising, flattening, or declining

- Provide hiring managers with defensible, data-backed guidance

Even when exact salary data is missing, enriched compensation indicators create a practical foundation for modeling.
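The first check in that list can be sketched as a comparison between a posted range's midpoint and a market median. The function names, the 5% tolerance, and the numbers in the usage lines are illustrative assumptions, not real benchmark figures.

```python
def range_gap_vs_benchmark(salary_min, salary_max, benchmark_median):
    """Relative gap between a salary range midpoint and a market median.

    Negative values mean the range sits below the benchmark.
    """
    if benchmark_median <= 0:
        raise ValueError("benchmark_median must be positive")
    midpoint = (salary_min + salary_max) / 2
    return (midpoint - benchmark_median) / benchmark_median


def lags_market(gap, tolerance=0.05):
    """Flag a range whose midpoint trails the benchmark by more than `tolerance`."""
    return gap < -tolerance


# Made-up numbers purely for illustration.
gap = range_gap_vs_benchmark(90_000, 110_000, 125_000)
flagged = lags_market(gap)
```

Comparing midpoints is only one convention; some teams compare range minimums or use percentile bands instead.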

Competitive hiring intelligence without guesswork

Organizations often want to know how competitors behave in the talent market. Raw data cannot answer these questions consistently. AI-ready labor data, however, aligns employer identities, titles, skills, and locations, which makes competitive trends visible.

Teams can then ask:

- Which companies are aggressively hiring in a role family?

- How often do they update their postings?

- Which skills do they emphasize compared to us?

- Are their salary signals consistently higher or lower?

This is the kind of intelligence leadership teams expect but cannot get from messy datasets.

Workforce forecasting that feels grounded instead of speculative

Forecasting relies on history. Without historical snapshots, models treat labor data as a flat summary instead of a moving signal. AI-ready labor data gives forecasters the ability to see:

- How long roles stay open

- How job descriptions change over time

- When salary adjustments correlate with hiring success

- Which skills grow or decline across years

- How demand fluctuates by season, region, and industry

Forecasting becomes more credible because the model learns from real patterns, not incomplete slices.
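To show what historical snapshots make possible, the sketch below estimates how long each posting stayed open from the dates on which it was observed. The `(posting_id, snapshot_date)` input shape and the function name are assumptions for illustration.

```python
from collections import defaultdict
from datetime import date


def days_open_by_posting(snapshots):
    """Days between the first and last snapshot in which each posting appeared.

    `snapshots` is an iterable of (posting_id, snapshot_date) pairs.
    """
    seen = defaultdict(list)
    for posting_id, snapshot_date in snapshots:
        seen[posting_id].append(snapshot_date)
    return {pid: (max(dates) - min(dates)).days for pid, dates in seen.items()}


# Illustrative, made-up observations.
observed = [
    ("a", date(2024, 1, 1)),
    ("a", date(2024, 1, 8)),
    ("a", date(2024, 1, 15)),
    ("b", date(2024, 1, 8)),
]
durations = days_open_by_posting(observed)
```

The result is a lower bound: a posting may have opened before the first snapshot or closed after the last one, so the snapshot cadence limits the precision.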

Building a scalable data infrastructure blueprint for labor market data (Summary)

If you step back and look at the full pipeline, an AI-ready labor market dataset is not one thing. It is a sequence of decisions that stack neatly on top of each other. When each layer is built with intention, the dataset becomes stable, predictable, and genuinely useful across AI, analytics, and product workflows.

Start with ingestion, the moment data enters your system. If the inflow is unreliable, everything downstream inherits those problems. Then comes normalization, where messy titles, locations, employers, and skills are mapped into a single, consistent language. Without this step, your labor data will always behave like scattered fragments instead of a unified whole.

Enrichment adds the intelligence layer. It is where the data gains depth in the form of skills, compensation signals, employer metadata, and industry classifications.

The warehouse layer gives the dataset long-term structure. It keeps history organized, supports large-scale modeling, and shields downstream teams from schema surprises.

Scoring and labeling turn clean data into training-ready features. This is where models finally get the context they need to make accurate predictions.

And finally, governance and delivery ensure everything stays healthy and accessible. A dataset that breaks without warning or arrives in inconsistent formats never becomes part of an organization’s workflow.

When these pieces work together, you end up with a labor market dataset that does exactly what teams hope it will do: support forecasting, power recommendation systems, improve compensation decisions, track competitive hiring activity, and reveal skill trends before they show up in traditional reports.

A scalable blueprint is not about collecting more labor data. It is about building a system where the data you already collect becomes reliable enough for long-term AI work.

FAQs:

1. What is labor data and why does it matter for AI models?

When we say “labor data,” we are talking about everything that describes how work happens in the real world: job titles, descriptions, skills, locations, employers, salaries, and sometimes outcomes like time-to-fill or hiring volumes. If you look at job boards, ATS exports, or public statistics, you are already looking at labor data, just in different shapes.

For AI models, this data is the raw material. It is what powers salary benchmarking, job matching, skill gap analysis, workforce forecasts, and talent heatmaps. If the labor data is patchy or inconsistent, the models end up learning from noise. When the labor data is structured and reliable, those same models start giving answers that people can use to make decisions.

2. How is an AI-ready labor market dataset different from a collection of job postings?

A collection of job postings is just that: a pile of text and fields that were never designed to work together. Every employer writes roles differently. Some show salary ranges, some don’t. Skills are hidden in paragraphs. Locations are all over the place. You can run quick searches on it, but building a serious product or forecast on top of it is painful.

An AI-ready labor market dataset is what you get after a proper data architecture has done its job. Titles are normalized. Locations are mapped to consistent geographies. Skills are extracted and standardized. Employers are resolved to a single identity. Historical versions are kept instead of overwritten. You still have the original postings, but now they sit inside a structured labor market dataset that a model or analytics tool can plug into without a week of cleanup every time.

3. Why is normalization so important in labor market data software?

Normalization is the step that stops your systems from treating every minor variation as something completely new. In labor market data software, it means agreeing on how you represent job titles, regions, industries, skills, and employers, regardless of how they were written originally.

Take titles as an example. “Sr. SWE,” “Senior Software Engineer,” and “Backend Engineer III” might all point to essentially the same kind of role. If you do not normalize them, your labor data will show three separate trends where there is really one. The same thing happens with locations (“NYC,” “New York,” “New York City, NY”) and skills (“JS” vs “JavaScript”). Once normalized, those records start behaving like a coherent labor market dataset instead of a noisy export from a job board.
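The examples above can be sketched as a lookup-table normalizer. This is a deliberately simple illustration: the alias tables and canonical labels are hypothetical, and production systems typically rely on maintained taxonomies or learned models rather than hand-written dictionaries.

```python
def _key(raw):
    """Lowercase, drop periods and commas, and collapse whitespace."""
    cleaned = raw.lower().replace(".", "").replace(",", "")
    return " ".join(cleaned.split())


# Hypothetical alias tables; canonical labels are illustrative only.
TITLE_ALIASES = {
    "sr swe": "Senior Software Engineer",
    "senior software engineer": "Senior Software Engineer",
    "backend engineer iii": "Senior Software Engineer",
}
LOCATION_ALIASES = {
    "nyc": "New York, NY",
    "new york": "New York, NY",
    "new york city ny": "New York, NY",
}
SKILL_ALIASES = {
    "js": "JavaScript",
    "javascript": "JavaScript",
}


def normalize(raw, aliases):
    """Return the canonical form, or None when the value is unmapped."""
    return aliases.get(_key(raw))
```

Returning `None` for unmapped values, instead of passing the raw string through, keeps unknown variants visible so they can be reviewed and added to the mapping.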

4. What makes a labor dataset truly AI-ready instead of just cleaned up?

A cleaned-up dataset usually means someone has removed obvious junk and fixed some formatting issues. That is helpful, but it is not enough if you want to build AI on top of it.

An AI-ready labor dataset has a few extra layers baked in:

- It keeps historical snapshots instead of overwriting records, so models can see how things change over time.

- It uses labeled occupations, skills, industries, and seniority levels so models have clear categories to learn from.

- It includes enrichment like compensation signals and employer metadata.

- It sits inside a governed data architecture where schemas are versioned and quality checks run automatically.

On top of that, it is delivered in predictable ways (APIs, feeds, warehouse access) so engineers and product teams can rely on it like they would rely on any core system.

When all of that is in place, you are not just cleaning labor data. You are operating an AI-ready labor data pipeline.
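Keeping history instead of overwriting can be sketched as an append-only version list per posting. The in-memory `history` dictionary, the field names, and `record_snapshot` are hypothetical; a real pipeline would persist versions in a warehouse table.

```python
from datetime import date


def record_snapshot(history, posting_id, snapshot_date, fields):
    """Append a new version only when the posting changed; never overwrite.

    Returns True when a version was added, False when nothing changed.
    """
    versions = history.setdefault(posting_id, [])
    if versions and versions[-1]["fields"] == fields:
        return False
    # Copy the fields so later mutation by the caller cannot alter history.
    versions.append({"snapshot_date": snapshot_date, "fields": dict(fields)})
    return True


history = {}
first = record_snapshot(history, "p1", date(2024, 1, 1), {"salary_max": 100_000})
repeat = record_snapshot(history, "p1", date(2024, 1, 8), {"salary_max": 100_000})
changed = record_snapshot(history, "p1", date(2024, 1, 15), {"salary_max": 110_000})
```

After these three calls, `history["p1"]` holds two versions, so a model can see both the original and the revised salary ceiling.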

5. How can companies use an AI-ready labor market dataset to improve planning and decision-making?

Most teams start with simple questions: where are we struggling to hire, what should we pay for a role, which skills are becoming non-negotiable? An AI-ready labor market dataset lets you answer those questions without assembling ten spreadsheets every time.

HR tech products use this kind of AI-ready labor data to power matching engines, salary insights, and skills analytics directly inside their platforms. Compensation teams lean on it to see how their ranges compare to external labor market data across cities and industries. Workforce and talent planning teams look at the same labor market dataset to spot demand spikes, emerging skills, and geographic pockets of talent, then adjust hiring plans or remote work policies accordingly.

The common thread is simple: when the underlying labor data is structured and stable, decisions stop being guesswork and start being grounded in something you can trace and explain.